Voice AI Agents for Customer Call Centers with Human-Like Voices

Call centers are undergoing a paradigm shift with the advent of Voice AI agents – automated virtual agents that converse with customers by voice in real time. These AI-driven agents leverage advanced speech technologies to sound remarkably human, moving beyond the monotone interactive voice response (IVR) systems of the past. In 2025, nearly 80% of customer experience (CX) leaders believe voice AI is ushering in a new era of seamless problem-solving, finally leaving robotic IVRs behind. This transformation is driven by rapid improvements in artificial intelligence: modern voice agents can understand natural speech, interpret intent, and respond with lifelike intonation. Crucially, they do so without the cost constraints of 24/7 human staffing.

For call center managers and business leaders, voice AI promises to reduce costs, eliminate wait times, and improve customer satisfaction. Early adopters have seen concrete gains – from 50% reduction in call wait times at a credit union to the ability to handle surges in call volume without hiring extra staff. AI researchers are equally excited, as large language models (LLMs) and neural speech synthesis are enabling more natural and context-aware conversations than ever before. Meanwhile, customers are warming up to the idea: 65% say voice AI improves phone interactions, noting it can even make it easier to explain and resolve complex issues. In this post, we’ll explore how these voice AI agents work, their benefits and challenges, real-world case studies, future innovations on the horizon, and the ethical/regulatory landscape shaping their deployment.

How Voice AI Agents Work

Voice AI agents are powered by a pipeline of AI technologies that allow them to hear, comprehend, and speak in a human-like manner. At a high level, the process involves:

- Automatic Speech Recognition (ASR) – When a customer speaks, ASR engines convert the spoken audio into text. Modern ASR models, often based on deep learning, can handle natural speech, different accents, and noisy backgrounds with high accuracy. This is the crucial first step that transcribes the customer’s voice into machine-readable words.

- Natural Language Processing (NLP) and Understanding – The transcribed text is then processed by NLP algorithms (and increasingly by large language models) to interpret the meaning and intent behind the customer’s words. This involves Natural Language Understanding (NLU) to parse the request (e.g. identifying that a caller saying “I want to check my balance” is asking for an account balance). Advanced voice AI systems leverage conversational AI frameworks powered by LLMs that understand context and nuance far better than legacy systems. Unlike basic keyword spotting, these AI agents maintain conversational state and can handle complex, multi-turn dialogues. They may integrate with backend databases or customer relationship management (CRM) systems to fetch relevant information (for instance, retrieving a customer’s account details to answer a query).

- Dialogue Management and Response Generation – Once the intent is understood, the agent decides how to respond. The response could come from a predetermined script for common requests or be dynamically generated by an AI model. LLMs (such as GPT-based models) can be used here to craft more natural and contextually appropriate responses. These models enable the AI agent to handle a wide variety of queries and even unexpected inputs by drawing on extensive training data. They can also inject courtesy and empathy phrases, making the interaction feel more human. Importantly, the system can be designed to follow business rules – for example, escalating to a human agent if the conversation becomes too complex or if the AI detects the customer is dissatisfied.

- Text-to-Speech (TTS) with Human-Like Voice Synthesis – After generating a response in text form, the AI agent uses TTS technology to speak back to the customer in a natural voice. Modern TTS systems based on neural networks (such as WaveNet and other neural vocoders) produce speech nearly indistinguishable from a human’s tone and inflection. They can incorporate appropriate pauses, intonation, and even emotional tone to some extent, resulting in a conversational cadence that makes interactions feel genuine. Today’s voice AI agents often use pre-designed “personas” with specific voice characteristics (gender, accent, style) that align with the company’s brand image. Some advanced setups even allow cloning a voice from a human voice actor to create a custom AI voice for the call center.

Behind the scenes, all these components work in concert in real time. When the customer speaks, the ASR–NLP pipeline swiftly interprets the utterance, the dialogue manager (potentially an LLM) decides the best answer, and the TTS engine vocalizes the reply – all within a second or two to keep the conversation flowing naturally. This end-to-end flow is often managed on cloud-based platforms optimized for telephony. For example, Twilio and other cloud communications providers offer integrated stacks where incoming calls are fed through ASR, then application logic (which could be a Python function or an LLM API call) generates a reply that TTS turns into audio on the call. The result is that the customer feels they are talking to a knowledgeable, polite human agent, when in fact an AI is orchestrating a host of machine learning services in real time.
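The turn-by-turn flow described above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not any vendor's implementation: the function names (`transcribe`, `detect_intent`, `decide_reply`, `synthesize`, `handle_turn`), the keyword-based intent matcher, and the placeholder balance are all hypothetical stand-ins for real ASR, NLU/LLM, and TTS services.

```python
from dataclasses import dataclass

# All names below are hypothetical stand-ins for real ASR, NLU/LLM, and TTS services.


@dataclass
class Reply:
    text: str
    escalate: bool = False  # True when the call should be handed to a human agent


def transcribe(audio: bytes) -> str:
    """Stub ASR: a real engine decodes speech; here the 'audio' is just UTF-8 text."""
    return audio.decode("utf-8")


def detect_intent(text: str) -> str:
    """Stub NLU: naive keyword matching in place of a trained model or LLM."""
    lowered = text.lower()
    if "balance" in lowered:
        return "check_balance"
    if "agent" in lowered or "human" in lowered:
        return "request_human"
    return "unknown"


def decide_reply(intent: str) -> Reply:
    """Dialogue management: scripted answers plus a business rule for escalation."""
    if intent == "check_balance":
        # Placeholder value; a real system would query a backend or CRM here.
        return Reply("Your balance is 120 dollars.")
    if intent == "request_human":
        return Reply("Sure, transferring you to an agent.", escalate=True)
    # Unknown intent: fall back to a human instead of guessing.
    return Reply("I'm having trouble understanding. Let me transfer you to an agent.",
                 escalate=True)


def synthesize(text: str) -> bytes:
    """Stub TTS: a real engine would return audio samples."""
    return text.encode("utf-8")


def handle_turn(audio: bytes) -> tuple[bytes, bool]:
    """One conversational turn: ASR -> NLU -> dialogue manager -> TTS."""
    text = transcribe(audio)
    intent = detect_intent(text)
    reply = decide_reply(intent)
    return synthesize(reply.text), reply.escalate


if __name__ == "__main__":
    audio_out, escalate = handle_turn(b"I want to check my balance")
    print(audio_out.decode("utf-8"), "| escalate:", escalate)
```

Keeping each stage behind its own function is a deliberate choice in this sketch: any single stage (say, the keyword matcher) could be swapped for a hosted model without touching the rest of the turn loop.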

Context and Memory: Another key aspect of how voice AI agents work is maintaining context. Unlike old IVRs that treat each input independently, AI agents keep track of the conversation history. If a customer first provides an account number and later just says “What’s my balance?”, the AI remembers the account number from earlier in the call. This context retention is greatly improved by transformer-based language models that can handle longer dialogues. Additionally, integration with CRM means the AI can recall past interactions – so the agent could say “Welcome back, I see you called yesterday about a credit card issue; are you following up on that?” Such personalization was historically hard to achieve at scale, but AI makes it feasible.
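To make the account-number example concrete, here is a small sketch of in-call memory. The `CallContext` class, the `respond` function, and the assumed 6 to 12 digit account format are hypothetical; a production agent would typically rely on an LLM's conversation window plus CRM lookups instead of a regular expression.

```python
import re
from dataclasses import dataclass, field

# Hypothetical in-call memory; real systems would also persist history to a CRM.


@dataclass
class CallContext:
    slots: dict[str, str] = field(default_factory=dict)  # facts gathered so far
    history: list[str] = field(default_factory=list)     # raw caller utterances

    def update(self, utterance: str) -> None:
        """Record the utterance and capture an account number if one is spoken."""
        self.history.append(utterance)
        match = re.search(r"\b(\d{6,12})\b", utterance)  # assumed account format
        if match:
            self.slots["account_number"] = match.group(1)


def respond(ctx: CallContext, utterance: str) -> str:
    """Answer using whatever the caller has already told us during this call."""
    ctx.update(utterance)
    if "balance" in utterance.lower():
        account = ctx.slots.get("account_number")
        if account is None:
            return "Sure. What is your account number?"
        return f"Looking up the balance for account ending in {account[-4:]}."
    if "account_number" in ctx.slots:
        return "Thanks, I have your account number. How can I help?"
    return "How can I help you today?"


if __name__ == "__main__":
    ctx = CallContext()
    print(respond(ctx, "My account number is 12345678"))
    print(respond(ctx, "What's my balance?"))  # reuses the number given earlier
```

The second request never repeats the account number; the reply is built from the slot stored on the first turn, which is the behavior an old stateless IVR could not offer.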

Multilingual capability: With the right models, a voice AI agent can support multiple languages within the same system. The ASR and TTS would switch to the target language, and the NLP engine (or LLM) would interpret and generate in that language. This means a single AI agent could converse fluently in English, Spanish, Tagalog, etc., which is a big advantage in serving diverse customer bases. In fact, voice AI allows companies to offer support in a customer’s preferred language on demand, something very costly with human staff.
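One way to picture the language switch is a lookup from the detected language to the ASR model and TTS voice for that language. The sketch below is illustrative only: the `LANGUAGE_CONFIG` table, the model and voice names, the `route_call` function, and the 0.8 confidence threshold are all assumptions, and the language detection step itself is taken as given.

```python
# Hypothetical per-language resources; model and voice names are placeholders.
LANGUAGE_CONFIG = {
    "en": {"asr_model": "asr-en", "tts_voice": "voice-en"},
    "es": {"asr_model": "asr-es", "tts_voice": "voice-es"},
    "tl": {"asr_model": "asr-tl", "tts_voice": "voice-tl"},  # Tagalog
}


def route_call(detected_lang: str, confidence: float,
               min_confidence: float = 0.8) -> dict:
    """Pick ASR/TTS resources for the detected language, or fall back to a human.

    `detected_lang` and `confidence` are assumed to come from an upstream
    language-identification step that is not shown here.
    """
    config = LANGUAGE_CONFIG.get(detected_lang)
    if config is None or confidence < min_confidence:
        # Unsupported language or uncertain detection: hand off to a person.
        return {"handler": "human_agent", "language": detected_lang}
    return {"handler": "voice_ai", "language": detected_lang, **config}


if __name__ == "__main__":
    print(route_call("es", 0.95))  # handled by the AI with Spanish resources
    print(route_call("vi", 0.99))  # no Vietnamese config in this sketch
    print(route_call("en", 0.40))  # detection too uncertain
```

Adding a language in this design means adding one table entry, while anything outside the table degrades to a human handoff instead of a garbled conversation.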

In summary, voice AI agents are an amalgam of speech recognition, natural language understanding, and speech synthesis, often supercharged by large language models that give them surprising flexibility and fluency. The technology has matured to the point that these agents can handle many of the same tasks human call center representatives do – from answering questions to troubleshooting common issues – by leveraging vast computational intelligence behind a friendly voice interface.

Benefits of Voice AI Agents for Call Centers

Implementing voice AI agents in a call center can yield a wide range of benefits, from operational cost savings to improved customer experience. Below we break down the key advantages:

- Significant Cost Reduction: Perhaps the most compelling benefit for businesses is the potential to dramatically lower the cost of customer service. AI agents handle routine inquiries at a fraction of the cost of human agents – there’s no salary, benefits, or physical infrastructure for desks and office space. Companies using voice AI have reported average cost reductions of 25–40% in their call centers while maintaining or improving service quality. Gartner projects that conversational AI will cut contact center agent labor costs by $80 billion in 2026. To illustrate, the average cost-per-call with a human agent is around $2.70–$5.60, whereas an AI-powered call might only incur a few cents of cloud processing fees. Voice AI can automate 25–50% or more of calls, directly reducing staffing needs or allowing existing staff to handle more complex tasks. In practice, this has translated into up to 50% lower operational expenses in some deployments. And beyond direct savings, every call an AI handles is one that doesn’t contribute to agent overtime or require hiring temp staff for peak seasons.

- 24/7 Availability and Instant Response: Unlike human teams bound by work shifts, AI voice agents provide round-the-clock service. They are available 24/7/365 without fatigue, ensuring customers can reach help at any time – late nights, weekends, holidays. This always-on availability improves customer satisfaction for those who need after-hours support and expands service coverage across time zones. Moreover, AI agents answer immediately – there’s no waiting for the “next available representative.” This instant response aligns with modern customer expectations: studies show 90% of consumers want immediate answers (within 10 minutes or less) for support questions. With voice AI, call queues can essentially become a thing of the past, since the system can welcome and start assisting each caller right away. This dramatically cuts down wait times and call abandonment rates. (In fact, nearly one-third of callers will hang up if kept waiting too long, so eliminating holds directly saves those potentially lost customers.)

- Scalability and Peak Handling: Voice AI agents scale effortlessly to meet surges in call volume. If your business sees an unexpected spike – say during a product launch or an outage – AI agents can ramp up to handle thousands of simultaneous calls without degradation in service quality. There’s no need to frantically call in extra staff or have customers suffer long waits. This elasticity means no more being understaffed at peak times and also no wasted labor during slow periods – the AI capacity flexes as needed. The end result is consistent service even during high demand. A traditional call center might be overwhelmed by a 2× increase in calls, but an AI-powered system can maintain normal operations (and customers wouldn’t even know a spike occurred). This scalability also enables businesses to handle growth or seasonal fluctuations without proportional increases in headcount.

- Improved Consistency and Accuracy: Human performance can vary – agents have good days and bad days, and their knowledge levels differ. In contrast, a voice AI agent delivers consistent service on every call. It never forgets to mention an upsell, never loses patience, and strictly adheres to compliance scripts. The AI will follow the best-practice flow every single time, ensuring a uniform quality of service. This consistency reduces errors (no “oops, I gave the wrong information” scenarios) and can improve metrics like First Call Resolution since the AI uses the correct process each time. Additionally, AI agents have “perfect memory” within a call and across calls if integrated with a knowledge base – they won’t give conflicting answers to different customers. From a branding perspective, this uniformity helps establish a reliable customer experience where callers know exactly what to expect in terms of professionalism and correctness.

- Faster Resolution and Shorter Handle Times: Voice AI can often resolve issues faster than a human, especially for straightforward inquiries. There’s no need to put a customer on hold to look up information – the AI can query databases in milliseconds. Simple requests like “What’s my order status?” or “I need to reset my password” can be handled in a fully automated flow that might take a fraction of the time of a live agent interaction. Even when the AI doesn’t fully resolve the call, it can gather information upfront (account numbers, verification, issue details) and then hand off to a human agent with a summary, so the overall handle time is reduced. In many cases, common queries that might tie up a human for 5–7 minutes can be answered by AI in 2–3 minutes, boosting efficiency. For example, WEOKIE Credit Union’s voice AI assistant was able to answer routine banking questions and perform balance lookups, which freed up human agents and led to noticeably quicker resolutions for those routine calls.

- Enhanced Customer Experience (CX): Counterintuitively, automating calls can improve the customer experience when done well. Customers hate waiting on hold and navigating complex IVR menus – a voice AI agent eliminates those pain points by engaging immediately in natural language. The frustration of repeating information is also reduced; for instance, if a call does transfer to a human, all the info collected by the AI (name, issue, troubleshooting done) can be passed along, so the customer doesn’t have to start over. According to surveys, 65% of customers say voice AI has made their phone interactions better, partly because many find it easier to talk freely to an AI about an issue without feeling judged or rushed. Also, voice AI is infinitely patient and courteous – it will never show annoyance no matter how upset or talkative a customer is. This can lead to a calmer interaction, which is especially valuable with angry callers. When well-implemented, voice AI can actually humanize the experience by personalizing responses and showing active listening behaviors (acknowledging the caller’s statements, using empathetic phrases, etc.), all programmed into its dialogue. And for tech-savvy customers, there’s a novelty and convenience factor in dealing with a competent AI that might even boost satisfaction (as long as it works smoothly!).

- Higher Agent Productivity & Morale: From an operations view, introducing AI agents means human agents are no longer bogged down with mundane, repetitive calls – those are offloaded to AI. Human representatives can focus on higher-value interactions, complex problem-solving, or sales opportunities that truly require a human touch. This shift can improve agent job satisfaction; instead of answering “What’s my balance?” 50 times a day, agents tackle more engaging challenges. It also reduces burnout and stress on agents, who previously had to handle high volumes and irate, wait-weary customers. In the WEOKIE example, after the voice AI took routine inquiries, human agents could dedicate their time to complex financial issues, making their work more meaningful and less frantic. Happier agents often translate to better service on the calls they do handle. Additionally, a hybrid AI-human model can enhance productivity by effectively creating “AI co-workers” for each agent – one agent might supervise AI handling many calls, stepping in only when needed, thus multiplying the capacity of each agent.

- Data and Analytics Benefits: Every interaction handled by a voice AI is automatically transcribed and analyzable. This opens up rich possibilities to gain insights from calls at scale. AI systems can perform real-time sentiment analysis, identifying if a customer is upset or pleased based on their tone. They can flag trending issues (e.g., multiple callers asking about a new bug after a software update) far faster than manual monitoring. Conversational analytics can aggregate the reasons people call, the phrases they use, and the outcomes, feeding valuable feedback to product and marketing teams. Traditional call centers typically record calls and randomly sample them for QA – maybe 2% of calls get analyzed. In contrast, an AI can analyze 100% of calls, providing dashboards on customer pain points, common complaints, and even detecting compliance issues (like if an agent, human or AI, failed to give a required disclosure). This treasure trove of data helps continuously improve service. Some advanced voice AI solutions even auto-summarize each call’s key points and outcome, saving agents from writing call notes and ensuring accurate records.

- Personalization at Scale: By integrating with customer data, voice AI agents can personalize interactions in ways human agents might not consistently do. The AI can instantly pull up the caller’s purchase history, preferences, and past interactions to tailor its responses. It might say, “Hi John, I see you recently bought a laptop. Are you calling about that order?” or use the customer’s name naturally in conversation, which builds rapport. 71% of customers expect personalized service, and AI can meet this expectation by leveraging databases in real-time. This level of personalization – remembering details from last calls, knowing the customer’s loyalty status, etc. – at scale is something unique to AI, since training every human agent to do the same (and ensuring they always do) is nearly impossible. Done right, it makes the customer feel recognized and valued every time they call.

In aggregate, these benefits mean voice AI agents can transform a call center from a cost center into a competitive advantage. Companies can handle more customers, more efficiently, and with more consistency. Customers get faster, round-the-clock service without the headaches of hold music and transfers. And employees move into more satisfying roles overseeing the AI or focusing on tough cases. It’s a win-win, which is why 76% of contact centers plan to expand AI and automation in their operations.

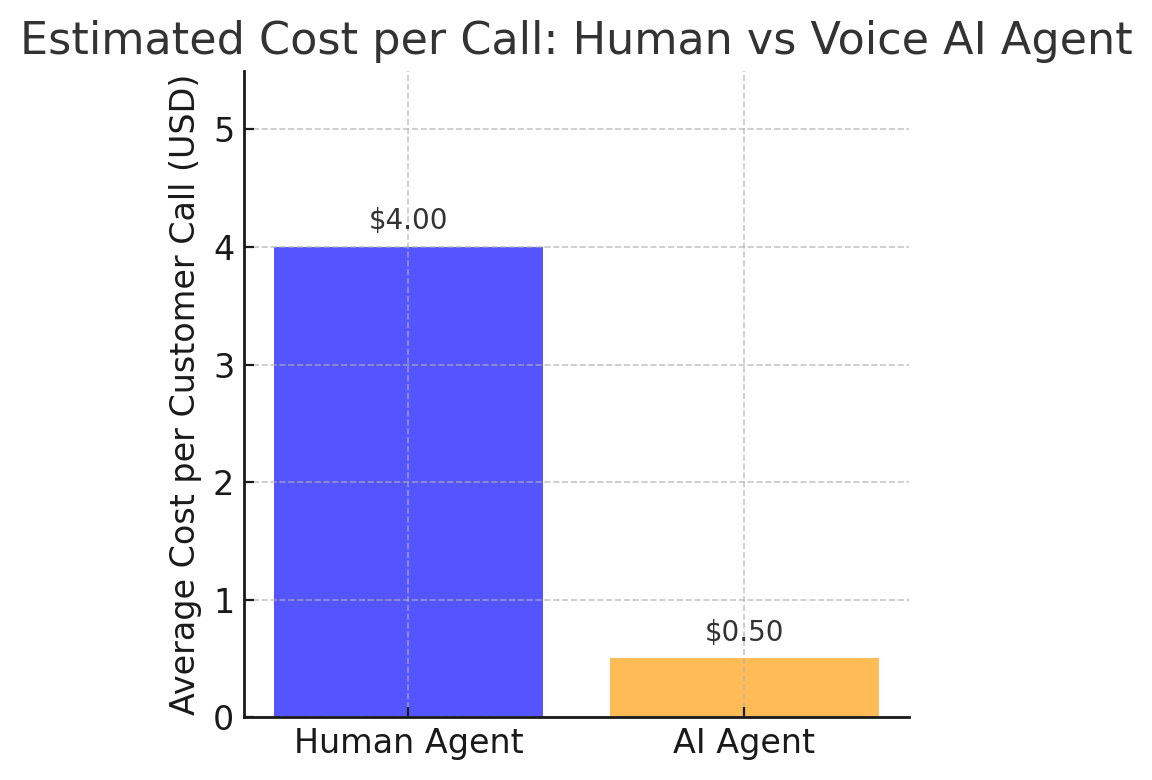

To visualize one of the key advantages, consider the cost per call when using AI agents versus traditional human agents:

Estimated cost per customer call with a human agent versus a voice AI agent. On average, a human-handled call costs a few dollars while an AI-handled call costs only a few cents in cloud processing, reflecting up to 80–90% cost reduction.

As shown above, the economics are compelling – AI calls are orders of magnitude cheaper. One analysis found automating call answering and routing can cut staffing costs by up to 90%. Of course, actual savings vary by use case and call complexity, but many organizations are already seeing substantial ROI. For example, conversational AI in contact centers is expected to save businesses $80 billion in labor costs by 2026, and some companies report 30–50% expense reductions after deploying voice AI. These savings can be reinvested into better training for remaining staff, improved products, or simply passed along as lower costs.
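The relationship between automation rate and total savings can be worked through with simple arithmetic. The sketch below is a back-of-the-envelope model, not a forecast: the function names are hypothetical, the $4.00 human cost is an assumed value inside the $2.70–$5.60 range quoted earlier, the $0.05 AI cost is an assumed stand-in for "a few cents," and the 10,000 calls per month is an arbitrary volume.

```python
def monthly_cost(calls: int, automation_rate: float,
                 human_cost: float, ai_cost: float) -> float:
    """Blended monthly cost when a share of calls is fully handled by AI."""
    ai_calls = calls * automation_rate
    human_calls = calls - ai_calls
    return human_calls * human_cost + ai_calls * ai_cost


def savings_fraction(automation_rate: float,
                     human_cost: float, ai_cost: float) -> float:
    """Share of the all-human cost that is saved; independent of call volume."""
    return automation_rate * (1 - ai_cost / human_cost)


if __name__ == "__main__":
    CALLS = 10_000      # assumed monthly volume
    HUMAN_COST = 4.00   # assumed, within the $2.70-$5.60 range from the text
    AI_COST = 0.05      # assumed value for "a few cents" per call

    baseline = monthly_cost(CALLS, 0.0, HUMAN_COST, AI_COST)
    for rate in (0.25, 0.50):  # the 25-50% automation range from the text
        blended = monthly_cost(CALLS, rate, HUMAN_COST, AI_COST)
        saved = savings_fraction(rate, HUMAN_COST, AI_COST)
        print(f"automation {rate:.0%}: ${blended:,.0f} vs ${baseline:,.0f} "
              f"({saved:.1%} saved)")
```

With these assumed inputs, automating 25% to 50% of calls lowers total handling cost by roughly 25% to 49%. Because the AI's per-call cost is small next to the human one, overall savings track the automation rate closely, which is why the share of calls an agent can fully resolve matters more than the exact cloud fee.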

Real-World Examples and Case Studies

Voice AI agents are not just theoretical – a number of organizations across industries have implemented them with promising results. Let’s look at a few real-world examples and case studies showcasing voice AI in action:

- WEOKIE Credit Union – 50% Reduction in Wait Times: WEOKIE, a financial institution in Oklahoma, deployed a voice AI agent (provided by interface.ai) to handle routine member inquiries in its call center. The AI assistant could answer questions about account balances, loan payments, branch hours, and other common transactions. The impact was immediate: call wait times dropped by 50% as the AI picked up a significant portion of incoming calls, freeing human agents. Members no longer had to endure long holds for simple requests, which improved customer satisfaction scores. Human agents, in turn, became more productive – they could focus on complex, high-value calls without being constantly interrupted by basic queries. WEOKIE also highlighted the benefit of 24/7 service: the AI handles calls after hours, so members get help at their convenience, something not possible before. Overall, the credit union achieved better service quality and efficiency simultaneously, illustrating the classic “do more with less” promise of AI. This success story (cutting wait times in half) garnered attention as a blueprint for other banks and credit unions on leveraging voice AI to modernize customer service.

- Multiple Credit Unions via Eltropy – 30%+ Call Automation: Eltropy, a fintech provider, reported that over 25 community banks and credit unions have gone live with AI voice and chat solutions, automating a large chunk of their customer interactions. These financial institutions replaced their old IVR systems with conversational AI voice agents, and collectively they are automating about 1 out of every 3–4 phone calls that come in. That’s roughly 25%–33% of calls being handled fully by AI, with no human intervention, across a consortium of credit unions. The AI voice assistants integrate with core banking systems and existing telephony (like Genesys, Cisco, Five9, etc.), demonstrating that integration is feasible with legacy systems. As a result, these institutions have seen improvements in key metrics: shorter voice and chat wait times, lower average handle time (AHT), higher first-call resolution (FCR), and increased Net Promoter Scores (NPS) after implementing AI. This case shows that even smaller organizations (not just big banks) are adopting voice AI and seeing tangible improvements in customer service performance.

- Bank of America’s “Erica” Voice Assistant – 1 Billion Interactions: Bank of America has a virtual assistant named Erica, which customers can interact with via voice or text primarily through the bank’s mobile app. By 2022, Erica had facilitated over 1 billion interactions with customers. While not a call center agent per se (Erica lives in the app), this example is worth noting because it underscores consumers’ appetite for voice-based assistance in financial services. Erica can handle tasks like bill reminders, balance queries, simple transactions, and even provide credit report updates. Its wide adoption shows that a well-designed voice assistant can achieve massive scale and successfully deflect inquiries away from call center lines. Many banks and insurers have since looked to emulate this model for their contact centers, embedding similar voice AI capabilities into their phone support and IVR systems.

- Klarna’s AI Customer Service (Chat/Voice): Klarna, a global payments and shopping service, launched an AI customer service tool (based on a large language model) which, according to the company’s early-2024 report on its first month live, was handling two-thirds of all customer inquiries via chat without human involvement. While Klarna’s example is predominantly about text chat, the underlying AI is channel-agnostic and could be applied to voice calls as well. Klarna’s assistant has dramatically scaled their support – imagine having an “AI workforce” that handles 66% of support volume. This shows the trend of companies trusting AI for customer-facing roles. Expanding such capability to voice calls is a natural next step, and indeed many of the chats the AI handles could just as easily be phone calls in another context. The success here points to the viability of AI agents across channels.

- Outbound Voice AI (Google Duplex and Others): On the outbound side, voice AI made waves a few years ago when Google demonstrated Duplex, an AI that could call restaurants to book reservations while sounding extremely human. Duplex showed that AI can navigate voice conversations initiated by the AI itself. Some enterprises are now using similar technology for things like appointment reminders or surveys. For instance, healthcare providers can have an AI voice agent call patients to remind them of appointments or ask them post-visit satisfaction questions – tasks that used to require call center staff. Early adopters in hospitality and retail have trialed AI voices for outbound confirmation calls, often with customers not realizing they weren’t speaking to a human. This area is still emerging, but it hints that voice AI agents will not only receive calls but also make calls on behalf of humans for various service interactions.

- Leading Contact Center Platforms Adopting Voice AI: The major contact center technology providers (e.g., Amazon Connect, Cisco, Avaya, Five9, NICE inContact, Genesys) have all integrated AI voice capabilities. For example, Amazon Connect offers Amazon Lex (the tech behind Alexa) to build conversational voice IVRs, and Google’s Contact Center AI integrates Dialogflow for voice bots. Companies like Twilio also provide building blocks for bespoke voice AI agents. This wide availability means many companies have quietly implemented AI-driven call flows. One notable instance is Qualcomm’s call center using voice AI to transcribe and summarize calls, cutting after-call work time in half for agents – an indirect use where the AI assists humans, but the same underlying tech could converse directly with customers. As these platforms continue to push AI features, expect to see more case studies where a significant percentage of calls are handled by AI across industries such as travel (e.g., airlines automating flight info calls), telecom (troubleshooting via AI), and government services.

Overall, these examples underscore that voice AI agents are delivering real value today. They’re reducing wait times, taking over repetitive queries, and even handling complex dialogues in multiple languages. Importantly, they’re doing so while keeping customers satisfied. Some customers initially express surprise or hesitation talking to an AI, but as the Zendesk research noted, many customers find explaining their problem to an AI as easy or even easier in some cases. And early data from deployments suggests that some customers actually prefer speaking with an AI for routine issues – one reason being “imagine always getting the same service agent with perfect memory of your history”. In other words, a well-designed voice AI can offer consistency and knowledge that even humans struggle with, which certain callers appreciate.

For call center managers, these case studies highlight that voice AI is not science fiction; it’s a competitive tool being used now. Those who have embraced it are seeing measurable improvements in efficiency and customer feedback. As more success stories emerge, it creates a snowball effect prompting wider adoption.

Challenges and Limitations

While the benefits are compelling, it’s important to acknowledge that voice AI agents also come with significant challenges and limitations. Implementing an AI agent is not as simple as flipping a switch – it requires careful planning to navigate technical hurdles, and even the best systems have constraints. Here are the key challenges and current limitations:

- Handling Complex or Unusual Queries: Despite advances, AI voice agents can struggle with scenarios that deviate from what they were trained on. They excel at routine, bounded tasks (like answering FAQs or doing basic transactions), but if a customer’s issue is highly complex or unique, the AI may get confused or provide unsatisfactory answers. Open-ended problem solving and nuanced judgment calls remain difficult for AI. For example, if during a tech support call an AI has never encountered the specific combination of error messages the user describes, it might flounder or give a generic answer. Large Language Models have broadened the range of queries AIs can handle, but they can also “hallucinate” incorrect answers if asked something beyond their knowledge domain. Businesses must design clear escalation paths so that when the AI hits its limits, it seamlessly transfers the call to a human agent who can take over. Not doing so risks frustrating customers with an AI that goes in circles. In essence, AI handles the known knowns; humans are still needed for the unknowns or very complicated cases.

- Accents, Dialects, and Language Coverage: Understanding everyone is a tall order. Heavy accents, uncommon dialects, or non-native speakers can trip up ASR systems, leading the AI to misinterpret the customer’s words. Language and accent recognition issues remain a challenge – for instance, an AI might struggle to understand a caller who has a strong regional accent or who mixes languages (code-switching). This can cause incorrect responses or force the agent to ask the customer to repeat themselves frequently, hurting the experience. Similarly, not all languages are equally supported by commercial AI platforms; a company might find excellent English support but mediocre support for, say, Arabic or Vietnamese. Training data bias plays a role – if the ASR/NLP wasn’t trained on a particular accent or language nuance, accuracy drops. Tackling this requires investing in more diverse training data and possibly customizing the AI for the target audience’s speech patterns. Some companies address it by having separate voice models for different regions (e.g., one tuned for UK English vs. US English). The tech is improving, but in a multilingual, global customer base, ensuring the AI understands everyone well is an ongoing effort. Until coverage catches up, certain calls may need to route to human agents when the AI’s confidence in its understanding is low.

- Limited Emotional Intelligence and Empathy: Today’s AI systems, even with advanced NLP, cannot truly understand emotion or context the way humans do. They can be programmed to recognize certain keywords or a change in tone (some systems do real-time sentiment analysis to detect if a customer’s voice sounds angry or upset). However, authentic empathy – the ability to really feel what the customer is feeling and adjust accordingly – is not fully attainable with AI yet. AI can simulate empathy by saying things like “I’m sorry you’re having this issue, I understand how frustrating that is,” but it’s fundamentally following a script. In delicate situations – say a customer calls to report fraud on their account and is very anxious – a human agent’s ability to spontaneously show compassion and flexibility is superior. Customers might perceive an AI’s responses as hollow or not quite grasping the gravity of their concerns if the situation is emotionally charged. Additionally, AIs can’t yet adapt to different communication styles and personalities as fluidly. A skilled human might detect that a caller is confused and proactively rephrase the explanation in simpler terms, or use humor to lighten the mood if appropriate – subtle human skills that AI lacks. This “human element” gap means that for now, certain calls (particularly those involving upset customers or sensitive topics) are best served with human empathy. It also means companies deploying voice AI should be cautious about over-automating – they must identify scenarios where empathy is key and ensure those are routed to people.

- Technical Difficulties and Glitches: Voice AI systems rely on multiple components and a lot of software – things can and will go wrong at times. ASR mis-transcriptions, NLP parsing errors, or TTS mispronunciations can all occur. There may be cases where the AI simply fails to understand a simple request due to a glitch, or worse, the call could drop if the system crashes. In a cold-call sales environment, for instance, if the AI malfunctions mid-conversation, it not only loses a potential customer, but also creates a negative impression. Ensuring reliability at scale is a major challenge: it requires robust infrastructure and extensive testing. Even then, background noise or unexpected speech patterns might throw it off. Additionally, latency is a concern – if the AI takes too long to process and there are awkward silences, customers will get confused or think the call disconnected. Achieving human-level conversational speed and turn-taking can be hard, especially if the AI is accessing external databases during the call. Businesses must invest in resilient, low-latency systems and have fallbacks (e.g., “I’m having trouble understanding – let me transfer you to an agent”) when things fail. Regular monitoring of the AI interactions is needed to catch and fix technical issues. Simply put, a voice AI agent is only as good as the underlying tech performance; downtime or bugs can disrupt service, so IT teams need to treat it as mission-critical software.

- Integration with Legacy Systems: Many call centers have complex workflows and legacy software (CRM, ticketing, databases). Integrating the AI agent with all these systems can be challenging. For the AI to be truly effective, it should pull up customer data, log interactions, and where needed create support tickets or update order statuses in real time. Building those integrations via APIs or middleware can be time-consuming. There may be compatibility issues, since not all older systems were designed to interface with AI services. This can lead to partial deployments where the AI can hold the conversation but cannot actually complete the task because it can’t reach a backend system (e.g., it can’t issue a refund because there’s no API to the billing system), which leaves the customer with a broken experience. Solving this might involve upgrading backend systems or using RPA (Robotic Process Automation) bots as a bridge, but those add complexity. Integration is a commonly cited obstacle in enterprise AI rollouts. Additionally, call routing logic gets more complicated in a hybrid human and AI environment: deciding when to transfer to a person and how to share context needs thoughtful design. Companies like Callin.io specialize in helping navigate these issues with middleware solutions, but integration remains a non-trivial part of any voice AI project.

- Training and Tuning Effort: While an AI agent doesn’t require the same training as a human, it does require configuring and tuning – essentially “teaching” it the business knowledge. This involves feeding it FAQs, conversation examples, and defining how it should respond in various scenarios. Setting up a high-quality voice AI is a project that can take weeks or months. If using an LLM, guardrails and prompts need to be engineered to keep it on track (so it doesn’t give irrelevant answers). If using an intent-based system, you have to design all the dialogue flows. As the business changes (new products, new policies), the AI needs updates. There is also a learning curve for staff to supervise or maintain the AI – they may need to learn new tools or analytics to refine the system. If not enough resources are dedicated to this ongoing improvement, the AI’s performance could stagnate or degrade. Essentially, the AI is like a new team member that requires onboarding and continuous coaching – except that coaching comes in the form of data training and software updates. Companies sometimes underestimate this and treat the AI as “set and forget,” which can lead to poor results. Properly tuning language models or updating knowledge bases is critical to keep responses accurate and contextually relevant.

- Customer Acceptance and Trust: Not all customers are immediately comfortable interacting with an AI, especially by voice. Some may feel frustrated talking to a “machine” if they suspect it’s not human, particularly if they’ve had bad experiences with rigid IVRs in the past. Others might have trust issues: will the AI understand a complex billing dispute, or will it just waste their time? There can also be generational differences: younger customers might be more at ease with AI, whereas older customers might prefer a human touch. Additionally, if the AI’s voice is too robotic or the conversation flow is stilted, customers will notice and may lose confidence. On the flip side, if the AI’s voice is very human-like and the customer isn’t told it’s AI, they may feel deceived when they eventually realize it’s not a person. Managing customer perception is thus a challenge. Many companies address this by being transparent: the AI might introduce itself with “Hi, I’m an automated assistant, how can I help you today?” This sets expectations. Some customers will opt out immediately (“I want to talk to a human”), which the system should allow gracefully. Over time, as voice AI proves itself useful, customer trust builds. But in the interim, businesses should monitor customer satisfaction closely and ensure there’s an easy path to a live agent for those who want it.

- Privacy and Security Concerns: Voice AI agents must handle sensitive data carefully. Calls often involve personal info, account details, and sometimes credit card numbers or health information. Recording and processing this data brings data protection regulations into play. Companies need to ensure that voice data and transcripts are stored securely (often encrypted) and that they comply with laws like GDPR for handling personally identifiable information. There’s also the issue of customer consent – traditionally, customers know calls with humans may be recorded “for quality purposes.” With AI, should customers be explicitly told that an AI is listening to them and handling their data? (Regulators are indeed looking at requiring disclosure; the compliance item under Future Trends and Innovations returns to this.) Additionally, biometric voice data could be considered sensitive (if the system does speaker identification or emotion detection). From a security standpoint, the AI should be locked down so it doesn’t accidentally leak data to the wrong person. For instance, it should verify identity before giving out account info, just as a human would. Designing the AI to follow security verification steps is crucial; otherwise it might be tricked into revealing information it shouldn’t. Ensuring compliance and robust security is non-negotiable, especially in industries like finance or healthcare. This can sometimes slow deployments, as legal and compliance teams vet the AI thoroughly.

- Dependence on Data and Maintenance: Voice AI systems are as good as the data and logic behind them. They can become outdated – for example, if your company changes a policy or product, the AI won’t automatically know unless updated. This requires diligent content management. Also, the AI’s performance can drift if not periodically reviewed. Continuous improvement cycles are needed: analyzing where the AI failed or where customers got frustrated and then refining the system. If the initial training data didn’t cover a scenario (say, a pandemic occurs and people suddenly ask new questions), the AI has to be adjusted. Humans are more adaptable in unforeseen scenarios, whereas AI needs explicit tuning. Thus, maintaining a voice AI agent is an ongoing responsibility, not a one-time installation. Businesses should be prepared to treat it as a “program” that has owners, KPIs, and regular updates. Skimping on this will result in an AI that might provide wrong answers or become less effective over time, negating its benefits.
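The identity-verification requirement from the privacy bullet above can be illustrated with a small gate that sits in front of any account-related intent. This is a minimal sketch, not a production design: the intent names, the `CallSession` type, and the PIN check are all hypothetical stand-ins for whatever verification method a given contact center actually uses.

```python
import hmac
from dataclasses import dataclass

# Hypothetical intents that expose account data (illustrative names only).
SENSITIVE_INTENTS = {"account_balance", "recent_transactions", "update_address"}
MAX_ATTEMPTS = 3  # assumed lockout policy


@dataclass
class CallSession:
    caller_id: str
    verified: bool = False
    failed_attempts: int = 0


def verify_caller(session: CallSession, supplied_pin: str, expected_pin: str) -> bool:
    """Mark the session verified only when the supplied PIN matches."""
    if session.verified:
        return True
    if session.failed_attempts >= MAX_ATTEMPTS:
        return False  # locked out: further attempts are ignored
    # compare_digest avoids leaking match position through timing.
    if hmac.compare_digest(supplied_pin, expected_pin):
        session.verified = True
    else:
        session.failed_attempts += 1
    return session.verified


def authorize(session: CallSession, intent: str) -> str:
    """Decide what the agent may do for this intent."""
    if intent not in SENSITIVE_INTENTS or session.verified:
        return "answer"
    if session.failed_attempts >= MAX_ATTEMPTS:
        return "transfer_to_human"
    return "ask_for_verification"
```

The point of the design is that the check lives outside the conversational model: whatever the dialogue says, a sensitive intent is only answered once the session is marked verified, and repeated failures route the call to a person.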

In summary, deploying voice AI in call centers is rewarding but not trivial. Common pitfalls include underestimating the effort to integrate and train the AI, and overestimating the AI’s ability to handle complex human interaction nuances. Organizations have to strike the right balance – using AI for what it’s good at, and not pushing it beyond its limits. Often the best approach is a hybrid model: let AI handle what it can, and smoothly involve human agents for the rest. As one expert noted, the most successful implementations “embrace a collaborative model rather than a replacement approach,” with AI and humans each playing to their strengths.
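One way to picture the hybrid model is as a per-turn decision: respond, ask again, cover a slow lookup, or hand off. The sketch below assumes the speech recognizer reports a confidence score and that the platform can measure how long a backend lookup is taking; the thresholds and action names are illustrative, not taken from any particular product.

```python
from dataclasses import dataclass
from typing import Optional, Tuple

# Illustrative thresholds; real values would come from testing on live traffic.
MIN_ASR_CONFIDENCE = 0.60
LATENCY_BUDGET_SECONDS = 2.0
MAX_CONSECUTIVE_MISSES = 2


@dataclass
class Turn:
    asr_confidence: float    # 0.0-1.0 score reported by the recognizer
    backend_seconds: float   # time spent so far waiting on backend systems
    intent: Optional[str]    # None when no intent was matched


def next_action(turn: Turn, misses: int) -> Tuple[str, int]:
    """Return (action, updated count of consecutive misunderstood turns)."""
    understood = turn.intent is not None and turn.asr_confidence >= MIN_ASR_CONFIDENCE
    if not understood:
        misses += 1
        if misses >= MAX_CONSECUTIVE_MISSES:
            # e.g. "I'm having trouble understanding. Let me transfer you to an agent."
            return "handoff", misses
        return "reprompt", misses
    if turn.backend_seconds > LATENCY_BUDGET_SECONDS:
        # Say a short holding phrase so the caller does not hear dead air.
        return "fill_silence", 0
    return "respond", 0
```

Counting consecutive misses rather than total misses means a single mis-transcription does not end the automated conversation, while a caller who is repeatedly misunderstood reaches a human quickly.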

By acknowledging these challenges – accent recognition issues, empathy gaps, technical hiccups, etc. – companies can proactively mitigate them. For example, some are working on emotion detection algorithms in voice AI to help the system sense when a customer is upset and either modify its tone or expedite a human handoff. Others invest in accent-specific training data or even use voice transformation technology to normalize accents (though that raises its own ethical questions). It’s also critical to set realistic expectations internally: success will come from iterative improvement and learning from failures (just as call center managers continuously train humans, the AI needs ongoing “training” too, albeit in a different form).
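The emotion-detection mitigation mentioned above could be wired in roughly as follows. This sketch assumes some upstream model already produces a per-utterance sentiment score between -1.0 (very upset) and 1.0 (very positive); that model, the window size, and both thresholds are assumptions made for illustration.

```python
from collections import deque


class SentimentMonitor:
    """Track a rolling average of sentiment scores and suggest an action.

    Scores are assumed to lie in [-1.0, 1.0]; producing them is out of scope.
    """

    def __init__(self, window: int = 3, soften_below: float = -0.2,
                 escalate_below: float = -0.6):
        self.scores = deque(maxlen=window)  # keeps only the most recent scores
        self.soften_below = soften_below
        self.escalate_below = escalate_below

    def update(self, score: float) -> str:
        self.scores.append(score)
        average = sum(self.scores) / len(self.scores)
        if average < self.escalate_below:
            return "escalate_to_human"
        if average < self.soften_below:
            return "use_empathetic_tone"
        return "continue"
```

Averaging over a short window is a deliberate choice here: one sharp remark softens the agent's tone, but a handoff is triggered only when the caller stays upset across several utterances.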

Future Trends and Innovations

Voice AI for customer service is advancing rapidly. As we look to the future, we can anticipate several exciting trends and innovations that will further enhance what AI agents can do and how they integrate into the broader customer experience. Here are some key developments on the horizon:

- Emotionally Intelligent AI (Emotion-Aware Voice Agents): One area of active research is infusing AI with better emotional intelligence. Future voice AI agents are likely to get better at detecting customer emotions from voice signals – for instance, recognizing stress, anger, or confusion from the customer’s tone, volume, and pace. Speech Emotion Recognition (SER) technology uses algorithms to classify emotions (joy, anger, sadness, etc.) from audio. With improved accuracy, an AI agent could dynamically adjust its responses based on the customer’s emotional state. For example, if the system detects the caller is angry or upset, it might switch to a calmer, more empathetic tone and prioritize quickly resolving the issue (or escalate to a human sooner). It may even inject soothing phrases like “I’m really sorry about this inconvenience” more liberally when it senses frustration. On the flip side, if a customer is cheerful or cracking jokes, the AI might respond in kind with a bit of levity, making the interaction feel more human. Emotion-aware voice AI could also help in deciding how far to push automation – an upset customer might be directly handed to a human, whereas a neutral/happy customer could continue with the AI. Beyond detection, there’s also work on AI expressing emotion. Current TTS voices are largely neutral or mildly upbeat. In the future, we may have AI voices that can express concern, enthusiasm, or sympathy on cue. This requires training TTS models on emotional speech data so they can convey subtle changes in tone. While true empathy is far off, these advancements aim to make AI conversations feel more emotionally aligned with the customer’s needs, thereby improving comfort and satisfaction.

- Multimodal and Omnichannel AI Agents: The future of customer service AI is not limited to voice alone – it will be multimodal. This means AI agents that can seamlessly operate across voice, chat, email, and even video, providing a unified experience. A customer might start talking to a voice AI on the phone, then receive a text with a verification code, and maybe even get an email summary after the call – all orchestrated by the same AI agent behind the scenes. We’re likely to see voice agents that can send visual content to customers’ smartphones during a call (e.g., the AI says “I’ve sent a link to your phone with the details we discussed”). Conversely, if a customer is on a web chat and it becomes complex, the AI could say “Would you like me to call you to resolve this?” and then place a call. The lines between channels will blur. Multimodal LLMs (which can process text, voice, images) will enable a single AI model to handle inputs and outputs in various forms. In call centers, this could translate to an AI that during a voice call can also interpret a photo the customer sends (maybe a picture of a defective product) or guide a customer on a website simultaneously. Additionally, as video calling grows, we might even see AI avatars or “digital humans” – a visual representation of an AI agent (perhaps a friendly looking virtual person on screen) that can talk and show facial expressions. While still in early stages, several companies are working on lifelike avatars combined with voice AI to create an interactive support persona for video calls or kiosk support. By being multimodal, future AI agents will provide help wherever the customer is, in whatever form is most effective, ensuring consistency across all touchpoints.

- Deeper CRM Integration and Personalization: In the future, voice AI agents will likely be even more tightly integrated with customer databases, CRM systems, and contextual data. This means even greater personalization and proactive service. For example, the AI might know that a customer recently added an item to their online cart but didn’t complete the purchase; if that customer calls in, the AI could preemptively ask if they need help with that product. Or if a customer has an open support ticket, the AI greets them with “Hi [Name], are you calling about your existing case regarding [issue]?” and already has all the context loaded. Integration with CRM will also enable predictive service – the AI can anticipate why someone might be calling based on account activity. For instance, if a user’s flight was just canceled, and they call, the AI can start with “I see your flight was canceled. Would you like to rebook on another flight or get a refund?” rather than making the customer explain the situation. This level of context awareness can turn a potentially frustrating call into a surprisingly smooth experience. Moreover, AI agents will update CRM records in real time, so after an AI-handled call, the account notes will be automatically populated with the interaction summary and outcomes. Sales and support teams could then get alerts or follow-ups generated by AI (e.g., if the AI detects a sales opportunity or a lead, it can notify a human salesperson to follow up). Proactive outreach is another aspect – future voice AI might even initiate calls to customers based on triggers (like reminding someone of an expiring subscription and offering renewal). All of this requires robust back-end integration, but as APIs become standard and companies prioritize unified customer data, AI agents will act with full knowledge of the customer journey, making interactions highly personalized and efficient.

- Advanced Learning and Adaptation: Future AI agents will learn and adapt much more quickly from interactions. We can expect continuous self-learning systems (with proper oversight) where the AI refines its models based on call transcripts and outcomes. If the AI encountered a question it couldn’t handle and a human later resolved it, the AI could learn from that resolution to handle similar queries next time. This might involve reinforcement learning, where successful outcomes are used to adjust the AI’s decision policies. Additionally, faster training cycles will allow AI to be updated with new information almost in real time. For example, if there’s a sudden issue (like a widespread outage affecting many customers), the AI could be quickly fed a new “knowledge pack” about that issue and how to respond, enabling it to handle calls about that event within minutes of it starting. This agility would surpass the ability to train human agents en masse on breaking news. Moreover, as foundation models (like large language models) get larger and more sophisticated, they inherently bring more “world knowledge” and reasoning ability, which the voice AI can tap into. That means fewer scenarios will stump the AI, because it can draw on a massive knowledge base. We might reach a point where the AI agent has read all of a company’s documentation and beyond, and could even use the internet in real time to find answers (with proper controls). Imagine an AI that, if asked a question outside its preset knowledge, can safely search an internal wiki or public FAQ site and generate an answer on the fly. Generative AI is moving in that direction.

- Hybrid Human-AI Collaboration Improvements: Rather than AI replacing humans outright, a future trend is refining how AI and humans collaborate in the customer service process. This includes better live agent assist tools – while the AI talks to the customer, a parallel AI could be advising a human supervisor who is ready to step in. Or if a human agent takes over a call from the AI, the AI might stay on the line in a “whisper mode” to feed the human real-time suggestions, relevant knowledge articles, or even live transcription and translation if the agent and caller speak different languages. Essentially, the AI can act as a co-pilot for human agents. We already see early versions of this: some systems provide agents with real-time sentiment analysis and guidance (e.g., prompting the agent to show empathy if the customer sounds upset). In the future, these will be more sophisticated, with AI analyzing the meaning of the conversation, offering on-screen recommendations, and filling out forms automatically as the agent and customer talk. Another angle is swarm AI – multiple AI “specialists” that can be consulted during a call. For example, a general voice AI might hand off a sub-task to another AI specialized in troubleshooting internet connectivity, which then returns an answer to the main AI, all within one call. This kind of behind-the-scenes AI tag-teaming could resolve complex issues faster without human involvement, or with only minimal human supervision. The net effect is that future contact centers might operate with AI as the front line and humans as strategists and problem-solvers monitoring many AI interactions at once. One agent might oversee 5–10 AI calls simultaneously, intervening only when necessary – a force multiplier model that could greatly increase productivity.

- Voice Biometrics and Security Enhancements: Security will be bolstered by voice AI through voice biometrics – using the customer’s voice itself as an authentication factor. Already, some banks use voiceprint technology in their IVRs to verify identity (“Your voice is your password”). In the future, integrating this into voice AI agents can make authentication both seamless and highly secure. The AI can recognize a caller by voice within seconds of speech, potentially eliminating the need for security Q&As or PINs for returning customers (once they’ve enrolled a voiceprint). Additionally, advanced fraud detection AI will listen for signs of scam or impostor calls – for example, detecting whether someone is using a synthesized voice or whether the calling pattern matches known fraud behaviors – and then take appropriate action (like routing to a special human team or requiring additional verification). On the flip side, AI will help prevent fraud against customers, too: if a scammer calls a customer pretending to be support, future systems might allow customers to verify an AI’s identity or have the AI verify the human. This gets into broader telecom security, but the gist is that AI will play a role in ensuring trust in voice communications, possibly through techniques such as tamper-evident records of AI interactions (blockchain-based, for example) or validated AI voice signatures.

- Regulatory Compliance Built-in: Given the regulatory considerations around AI disclosure and data handling, future voice AI solutions will likely have compliance features by default. For instance, an AI could automatically announce itself as AI at the start of calls to meet disclosure requirements. It could also enforce compliance scripts every time (never forgetting to say the mini-Miranda in a debt collection call, for example). We may see AI that is certified for certain standards – e.g., an AI call center agent that is HIPAA-certified for healthcare calls or PCI-compliant for handling credit card info, with all the requisite encryption and logging. As regulators define rules for AI usage, vendors will build those into the platforms so companies can toggle settings to ensure adherence. Additionally, explainability and logging will improve – future AI might be able to produce a reason for its responses or actions, which could be important for audits (or even just for debugging why it responded a certain way). Overall, the maturation of the technology will involve not just making the AI more capable, but also more controllable, transparent, and compliant for enterprise use.
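The context-aware greetings described in the CRM integration item above amount to a priority-ordered lookup over whatever the CRM already knows about the caller. The sketch below is a hedged illustration: `CustomerContext` and its fields are hypothetical, and a real deployment would populate them from its own CRM and decide its own ordering of signals.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class CustomerContext:
    """Hypothetical snapshot of CRM data for the caller."""
    name: str
    canceled_flight: Optional[str] = None       # e.g. a flight number
    open_ticket_issue: Optional[str] = None     # short description of an open case
    abandoned_cart_item: Optional[str] = None   # item left in an online cart


def opening_line(ctx: Optional[CustomerContext]) -> str:
    """Pick the most urgent known context and open the call with it."""
    if ctx is None:
        # Caller not recognized: fall back to the generic, transparent greeting.
        return "Hi, I'm an automated assistant. How can I help you today?"
    if ctx.canceled_flight:
        return (f"Hi {ctx.name}, I see your flight {ctx.canceled_flight} was canceled. "
                "Would you like to rebook on another flight or get a refund?")
    if ctx.open_ticket_issue:
        return (f"Hi {ctx.name}, are you calling about your existing case "
                f"regarding {ctx.open_ticket_issue}?")
    if ctx.abandoned_cart_item:
        return f"Hi {ctx.name}, do you need any help with the {ctx.abandoned_cart_item} in your cart?"
    return f"Hi {ctx.name}, how can I help you today?"
```

Ordering the checks from most to least urgent keeps the greeting focused on the likeliest reason for the call, and each greeting is phrased as a question so the customer can redirect the conversation if the guess is wrong.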

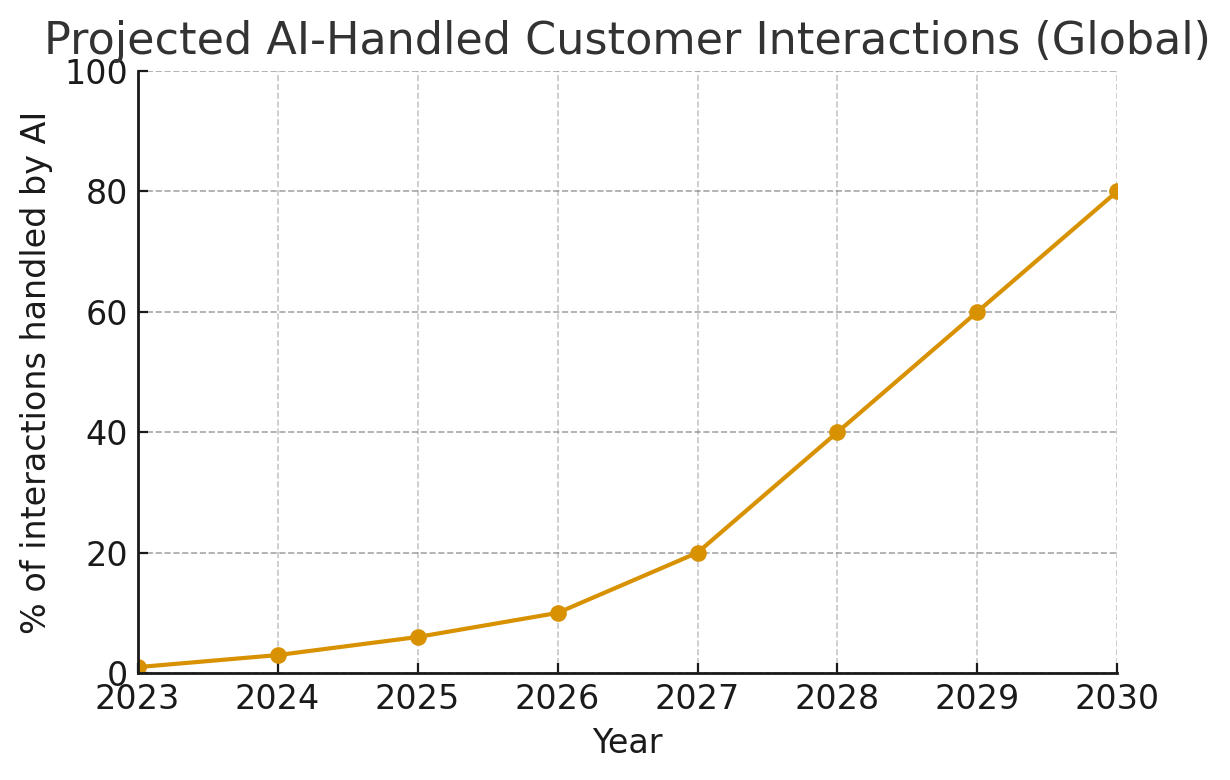

Looking ahead 5 to 10 years, it’s plausible that voice AI agents will handle the majority of routine customer interactions across many industries. Gartner predicts that by 2030, as much as 80% of customer service interactions could be handled by AI without a human. The trend line is steep:

Projected percentage of customer interactions handled by AI (without a human agent) globally, based on industry forecasts. By 2026 roughly 10% of interactions are expected to be AI-handled, growing to an estimated 80% by 2030.
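To put the steepness in numbers, the two forecast points above (about 10% in 2026 and about 80% in 2030) imply a compound annual growth rate that can be worked out directly. The calculation below is only back-of-the-envelope arithmetic on those two figures; it assumes smooth compounding between them, which the forecasts themselves do not claim.

```python
def implied_annual_growth(start_share: float, end_share: float, years: int) -> float:
    """Compound annual growth rate that takes start_share to end_share."""
    return (end_share / start_share) ** (1 / years) - 1


# Forecast points quoted in the caption above.
rate = implied_annual_growth(0.10, 0.80, 2030 - 2026)
print(f"Implied growth in AI-handled share: {rate:.0%} per year")
```

An eightfold increase over four years works out to roughly 68% growth per year, which is why even small slips in the early years would move the 2030 figure considerably.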

As the chart suggests, we are just at the beginning of that curve. Voice AI’s capabilities are increasing rapidly thanks to advancements in AI research. Generative AI, larger conversational models, and specialized speech models are converging to make AI agents far more human-like in both understanding and speaking. We can expect interactions with AI to feel even more natural in the near future – to the point that perhaps customers won’t be able to tell (and won’t care) if it’s a bot or human, as long as their issue is resolved efficiently.

Another future consideration is the ethical and societal impact: as AI takes on more of the workload, the nature of call center jobs will change. We might see new roles like “AI interaction designer” or “AI supervisor” become standard in call centers. Companies will likely reposition many frontline staff into roles focused on managing AI or handling only escalations. In essence, the call center of the future could have a much smaller human staff, with each person handling the more complex tasks that AI can’t (yet) do while AI does the heavy lifting on volume.

In terms of innovation, we should also keep an eye on emerging modalities – for instance, integration of voice AI with IoT devices (imagine contacting customer support through your smart speaker and an AI agent helping you), or in-car voice assistants connecting to customer service while you drive. The barrier between personal voice assistants (like Siri/Alexa) and customer service agents may blur – you might ask Alexa to contact customer support for you, and Alexa’s AI coordinates with the company’s AI agent to resolve your issue, possibly without you ever talking to a human or even directly to the company’s bot.

In summary, the future of voice AI agents in call centers is one of more intelligence, more integration, and more human-like interactions. They will not only respond to customer needs but anticipate them, and they’ll operate across various channels and devices. Companies that leverage these innovations will be able to offer an always-on, personalized support experience that would have been science fiction a decade ago. It’s an exciting frontier, but as we embrace it, we must also navigate the ethical and regulatory landscape to use this technology responsibly.

Conclusion

Voice AI agents with human-like voices are rapidly transforming customer call centers, bringing both exciting opportunities and new responsibilities. As we’ve discussed, these AI-driven virtual agents combine advanced speech recognition, natural language understanding, and realistic speech synthesis to engage customers in natural conversation. They can scale on-demand, work tirelessly 24/7, and consistently follow best practices – all while significantly reducing operational costs. For call center managers, this promises a way to handle ever-growing contact volumes without proportional increases in headcount, to cut average handling times, and to deliver quick answers to customers even at 3 AM. For AI researchers and technologists, the call center is a rich playground for applied AI, showcasing how breakthroughs in ASR, NLP, and LLMs can solve real business problems and enhance user experiences.

We saw how companies like WEOKIE Credit Union achieved tangible gains (50% shorter wait times, higher satisfaction) by deploying voice AI, and how multiple banks are automating a third or more of their calls with AI. These early case studies illuminate the benefits: round-the-clock availability, the ability to handle surges, uniform service delivery, and freeing humans for complex cases. Customers are starting to embrace these AI helpers, especially as they become more lifelike and capable, with the majority in some surveys saying voice AI has improved their phone experience. In many scenarios, a well-designed AI agent can resolve an issue faster than the customer could reach a human, which makes for happier customers.

However, we also delved into the challenges and limitations. Voice AI agents are not a panacea. They currently do best with routine, structured interactions and may falter when conversations go off the beaten path. They lack genuine empathy and can misinterpret people with heavy accents or atypical speech. Technical glitches or integration woes can also hinder their effectiveness. These are important reminders that human oversight and backup are still crucial. In practice, the most effective use of voice AI is in a hybrid model where AI handles what it can and seamlessly hands off to human agents when needed. This synergy plays to the strengths of each: AI’s speed and consistency, and humans’ empathy and creativity.

Looking ahead, the trend lines indicate that voice AI will grow more powerful and prevalent. Innovations on the horizon – from emotion-aware AI that can sense and adapt to a caller’s mood, to multimodal agents that fluidly transition between voice and text, to ever-improving language models – will push the frontier of what AI agents can handle. It’s plausible that in the coming years, AI voice agents will become the first point of contact in most service interactions, with humans mostly handling escalations or special cases. This doesn’t diminish the human role, but it does change it. Agents of the future may supervise fleets of AI interactions, focusing their expertise where it’s truly needed. Meanwhile, customers might rarely face a busy signal or long hold again – an AI will always be available to greet them.

References

- Conversational AI Reduces Contact Center Costs: https://www.gartner.com/en/newsroom/press-releases/2023-05-09-gartner-says-conversational-ai-platforms-reduce-contact-center-costs
- WEOKIE Credit Union Case Study: https://interface.ai/customer-stories/weokie-credit-union
- CX Trends Report: https://www.zendesk.com/blog/customer-experience-trends/
- What Are Voice AI Agents?: https://www.callin.io/blog/voice-ai-agents
- Building Conversational IVRs with Voice AI: https://www.twilio.com/blog/conversational-ivr-ai-voice-bots
- Building Voice AI Applications with NeMo: https://developer.nvidia.com/blog/building-voice-ai-applications-using-nemo/
- Contact Center AI Overview: https://cloud.google.com/solutions/contact-center
- The Rise of Intelligent Virtual Agents: https://www.five9.com/blog/what-are-intelligent-virtual-agents
- Amazon Lex in Call Centers: https://aws.amazon.com/lex/
- Bias in Speech Recognition: https://www.speechmatics.com/resources/blog/how-to-reduce-bias-in-speech-recognition
- Ethics in AI for Customer Service: https://www.ibm.com/blogs/policy/ethics-artificial-intelligence-customer-service/
- AI Customer Service Assistant Announcement: https://www.klarna.com/international/press/klarna-introduces-ai-customer-service-assistant/
- Erica Virtual Assistant Milestone: https://newsroom.bankofamerica.com/content/newsroom/press-releases/2022/04/erica-virtual-assistant.html
- Voice AI Deployment Across Credit Unions: https://eltropy.com/resources/blog/voice-banking-ai-agent-launches-across-25-institutions/
- AI-Generated Voice Call Rules Proposal: https://www.fcc.gov/document/fcc-takes-action-against-ai-generated-voice-calls