Gemini 2.5 Pro: Redefining Enterprise AI with Unmatched Productivity and Security

Enterprise leaders are increasingly turning to advanced AI solutions to boost productivity while maintaining stringent security standards. Google’s Gemini 2.5 Pro stands out as a next-generation enterprise AI model that combines cutting-edge performance with enterprise-grade security and compliance. Announced in March 2025 as Google’s “most intelligent AI model”, Gemini 2.5 Pro brings an innovative “thinking” architecture that enables transparent reasoning, multimodal understanding, and massive context handling. Designed for complex, real-world tasks, it tops industry benchmarks and debuts at #1 on the LM Arena leaderboard by a significant margin. This blog post provides a comprehensive overview of Gemini 2.5 Pro’s architecture, enterprise-focused features, and deployment model, with a focus on how it enhances productivity and security in enterprise environments. We also compare Gemini 2.5 Pro to other leading enterprise AI tools – including Microsoft’s Copilot, Anthropic’s Claude 3, and OpenAI’s GPT-4 – and highlight use cases across finance, healthcare, manufacturing, and government sectors.

Enterprises today demand AI solutions that not only deliver superior capabilities but also integrate safely into their workflows. Gemini 2.5 Pro meets this demand by offering state-of-the-art reasoning and coding abilities alongside robust privacy and security controls. In the following sections, we delve into Gemini’s technical architecture and features, examine real-world productivity gains, discuss security enhancements, and compare it with peer enterprise AI offerings. Two charts are included to illustrate Gemini 2.5 Pro’s performance against competitors and the productivity gains seen across industries, and a comparative table summarizes key differences in capabilities, security features, and deployment options. The tone is formal and analytic, aimed at IT leaders, tech-savvy readers, and security professionals seeking an in-depth understanding of how Gemini 2.5 Pro Enterprise AI can transform enterprise productivity and security.

Architecture and Design of Gemini 2.5 Pro

Gemini 2.5 Pro is built on an advanced large-model architecture that emphasizes “reasoning before responding.” Google describes Gemini 2.5 as a “thinking model” capable of internally working through a chain of thoughts to solve problems step-by-step. In practical terms, this means Gemini 2.5 Pro doesn’t just predict the next word – it actively reasons through the task, generating intermediate logic or calculations before producing a final answer. This architectural approach combines a significantly enhanced base language model with improved post-training techniques to imbue native reasoning capabilities. As a result, Gemini 2.5 Pro can analyze information, draw logical conclusions, and incorporate context and nuance in a way previous models struggled to achieve.

One hallmark of the architecture is transparent chain-of-thought reasoning. During Google’s training process, Gemini was optimized to show its work in a structured manner, yielding coherent step-by-step explanations rather than opaque answers. For example, when posed a complex analytical question, Gemini 2.5 Pro will often break down the solution into numbered steps or sub-tasks, logically working through each – a sharp contrast to many prior models that functioned as “black boxes.” This structured reasoning is not just academic; it greatly improves explainability and trust. Enterprise users can see how the model arrived at an answer and follow the internal logic, making it easier to validate and audit the outputs. This is a major architectural advancement from earlier LLMs like GPT-3 or initial GPT-4 versions, which did not expose their intermediate reasoning. By building in this “thinking” capability, Gemini 2.5 Pro sets a new bar for AI transparency and steerability, allowing users to correct or guide the model at each logical step if needed.

Another key aspect of Gemini 2.5’s design is its emphasis on multimodality and an extremely long context window. The model is inherently multimodal – it can natively accept and process not just text, but also images, code, and even other data formats (with support for audio or video inputs through transcripts or linking, per Google’s design). This means enterprise use cases that involve interpreting charts, reading design blueprints, analyzing screenshots, or writing code with graphical outputs can all be handled within a single AI system. For instance, Gemini 2.5 Pro can examine a diagram embedded in a document or the screenshot of an error log, reason about it, and incorporate that understanding into its textual answer – all in one seamless interaction. In one internal test, the model was able to read a technical article about search algorithms, generate an SVG flowchart illustrating the algorithm, then refine that flowchart after being shown the rendered image and noticing a mistake. This level of multimodal reasoning hints at an architecture where vision, text, and code understanding are deeply integrated, rather than bolted on as separate modules.

Perhaps most impressively, Gemini 2.5 Pro boasts a context window of up to 1 million tokens (and Google plans to extend this to 2 million). To put this in perspective, 1 million tokens is roughly equivalent to reading 750,000 words, or about the length of the first six Harry Potter books combined. In enterprise terms, this huge context window allows Gemini to ingest entire codebases, large knowledge bases, or years of corporate documents at once. The architecture required substantial innovation to handle such long sequences without losing coherence or incurring prohibitive computation costs. By leveraging optimized attention mechanisms and “flash” memory management (building on techniques from Gemini 2.0 Flash models), Gemini 2.5 Pro can maintain focus over lengthy inputs. This is a game-changer for enterprises: the model can take in an entire legal contract repository or a multi-hundred page annual report and reason over it in a single session, identifying relevant parts and drawing conclusions across the whole dataset. Traditional models with 4k, 32k, or even 100k token limits would require chopping the data into pieces, losing global context. With Gemini’s architecture, that limitation is vastly reduced.

Native support for extended reasoning and tool use is another architectural feature geared toward enterprise complexity. The model has been trained and evaluated not only on static Q&A or completion tasks, but also on “agentic” tasks where it needs to decide how to act (such as writing code that will be executed, or querying a tool for more data). For example, on the SWE-bench agentic coding benchmark (where models generate and execute code to solve problems), Gemini 2.5 Pro achieved a high score of 63.8%, only slightly behind an agent-optimized Claude variant. The architecture allows function calling and action planning within the model’s outputs, which enterprise developers can harness to integrate Gemini into automated workflows. In effect, Gemini 2.5 Pro can serve as the reasoning engine at the core of an AI agent – planning steps, calling APIs or database queries (via provided tools), and then synthesizing results – all while keeping the chain-of-thought transparent to the user.

In summary, Gemini 2.5 Pro’s architecture represents a unified AI model with built-in reasoning, multimodal understanding, and massive context integration. By “thinking before speaking,” it produces more accurate and reliable results. By handling images and code natively, it tackles tasks that span diverse data types. And with a million-token memory, it can comprehend and operate on the full breadth of enterprise knowledge without breaking context. These architectural advances directly support enterprise needs: they enable complex problem-solving, reduce the need for splitting tasks, and improve trust through transparency.

Enterprise-Focused Features and Deployment Model

Beyond raw technical prowess, Gemini 2.5 Pro is engineered with features and deployment options tailored for enterprise use. Google has clearly positioned this model as “enterprise-ready,” incorporating elements like robust security, compliance, manageability, and integration into corporate ecosystems.

Availability and Deployment: From day one, Gemini 2.5 Pro was made accessible to enterprise users through Google’s cloud platforms. It launched as an experimental model in Google AI Studio (the developer environment for Google’s Generative AI) and in the Gemini App for advanced users. More importantly, Google announced that Gemini 2.5 Pro will be integrated into Vertex AI – Google Cloud’s managed machine learning platform – enabling enterprises to access the model with high reliability and within their own cloud environment. This means organizations can use Gemini 2.5 Pro via APIs and client libraries, with the service running in Google’s secure cloud infrastructure and (optionally) within a private network context. In Vertex AI, companies will be able to choose regional deployments to meet data residency requirements, set usage quotas, and monitor operations through familiar Cloud dashboards. Google also indicated that pricing for scaled production use (with higher rate limits) will be introduced, highlighting a path for enterprises to move from experimentation to large-scale deployment. In essence, the deployment model mirrors what OpenAI and Anthropic have done via cloud APIs, but tightly integrated into Google’s enterprise cloud stack – a significant plus for companies already invested in Google Cloud services.

Security and Compliance Features: For enterprise adoption, one of the first questions is how an AI model handles data security and user privacy. Gemini 2.5 Pro benefits from Google’s extensive focus on AI responsibility and safety. All interactions with the model in Google’s cloud are encrypted in transit and at rest, and (as with Google Cloud’s policies) customer data is not used to train Google’s foundation models without permission. In fact, Google emphasizes that it builds models like Gemini using broad web data and curated datasets, and does not incorporate a company’s prompts or outputs into the model’s parameters. This approach is similar to OpenAI’s and Anthropic’s enterprise policies – for instance, OpenAI’s ChatGPT Enterprise also guarantees that business conversations are not used for training and is SOC 2 compliant. We can expect Gemini 2.5 Pro running on Vertex AI to come with SOC 2, ISO 27001, and other compliance certifications, as Vertex AI and Google Cloud services generally do. Additionally, Google has a robust data processing agreement and offers features like data logging controls, which enterprises can leverage to meet regulations like GDPR and HIPAA. In the healthcare context, for example, Vertex AI is HIPAA eligible, meaning Gemini 2.5 Pro could be used with protected health data when proper agreements are in place – a critical requirement for that industry.

A distinctive security advantage of Gemini’s design is the transparent reasoning we discussed earlier. From a risk management perspective, this feature is invaluable. It allows auditable AI output: compliance officers or internal reviewers can see the intermediate steps the model took, which helps in verifying that no sensitive data was leaked or that the reasoning followed approved policies. If Gemini 2.5 Pro is asked a question that touches on confidential information, an enterprise could require the model to output its chain-of-thought for review. Because that chain-of-thought is structured and coherent (not a jumble of hidden vectors), a human can inspect it to ensure compliance and then approve the final answer. This level of scrutiny is not possible with most other black-box models. In high-security environments like government or finance, such transparency adds a layer of trust and controllability to AI deployments.

Fine-Grained Access Control and Management: As an enterprise offering, Gemini 2.5 Pro will integrate with Google Cloud’s identity and access management. Organizations can expect to manage who or what systems can invoke the model via API keys or service accounts, enforce quotas, and monitor usage via Cloud Logging. Competing solutions have similar controls – for instance, Anthropic’s Claude Enterprise plan introduced SSO, role-based permissions, and admin console features like audit logs. We anticipate Google providing the same: administrators can likely provision Gemini 2.5 Pro access to specific teams, use organization policies to restrict certain types of content generation, and review logs of prompts if needed for compliance. In Microsoft’s Copilot offerings, the permissions model is built on Microsoft 365 tenant controls, ensuring data doesn’t leak across users and that only authorized information is accessed. Google’s equivalent would be leveraging Workspace and Cloud IAM – e.g. a company could integrate Gemini with Google Workspace such that it only answers questions using documents the requesting user has permission to view. (In fact, Google Workspace’s Duet AI features, which likely use Gemini on the back-end, already respect document permissions in this way.)

Integration with Enterprise Data: Another feature set critical to enterprise is how AI connects with internal knowledge bases and tools. On this front, Google’s strategy for Gemini involves tight integration with its cloud ecosystem. In Vertex AI, Gemini 2.5 Pro can be paired with enterprise knowledge connectors – for example, piping in data from BigQuery (for analytics), Cloud Search (for document retrieval), or third-party SaaS applications via API calls. Google has showcased “Agents for Enterprise” where a language model like Gemini is augmented with retrieval capabilities to access company data on the fly. The massive context window also simplifies integration – rather than building a complex retrieval pipeline, an enterprise could literally feed an entire knowledge base (or a very large chunk of it) directly into the model’s context and ask questions. This lowers the barrier to entry for prototyping AI assistants on proprietary data.

For software engineering use cases, Gemini’s integration is also noteworthy. Google has indicated that Gemini 2.5 Pro will support code-related tools and plugins. Anthropic’s Claude Enterprise, for instance, launched with a native GitHub integration allowing it to sync to repositories and work with codebases easily. We can expect Google to offer similar capabilities – perhaps connectors that allow Gemini to pull in code from Google Cloud Source Repositories or GitHub, enabling tasks like automated code review or refactoring with minimal setup. In internal tests, Gemini 2.5 Pro demonstrated it can handle entire codebases loaded into context, identifying necessary changes across dozens of files and implementing them coherently. Such integration means enterprises can use Gemini as a pair programmer that understands their whole project, not just a single file.

Responsible AI and Safety: Enterprise-focused AI must also minimize the risk of generating harmful or biased outputs. Google DeepMind has a dedicated Responsibility & Safety team, and Gemini 2.5 Pro benefits from those efforts. The model was likely trained with reinforcement learning from human feedback (RLHF) and “constitutional AI” principles similar to Anthropic’s approach, to ensure it follows guidelines. It was also evaluated on factuality – the benchmark shows Gemini did quite well on factual Q&A (SimpleQA) with a score of 52.9%, though interestingly an OpenAI model variant scored even higher at 62.5%. The open transparency of reasoning helps here too: if Gemini begins to stray into an unwanted direction, that is visible. Google will also incorporate content filtering in the Vertex AI endpoint (as they do for other models), blocking or redacting disallowed content in both prompts and responses. Microsoft’s Copilot similarly has an orchestration where prompts/responses are checked by Azure AI Content Safety system to block things like profanity, hate speech, or data leaks, before final output. We can be confident Gemini 2.5 Pro in enterprise settings has analogous safeguards. These safety features give security professionals confidence that deploying the model won’t introduce new risks or regulatory violations.

In terms of deployment flexibility, at present Gemini 2.5 Pro is a cloud-hosted solution (Google has not released it for on-premise or offline use). However, Google Cloud offers Virtual Private Cloud (VPC) access for services like Vertex AI, meaning enterprises can invoke Gemini from within a private network without exposing traffic to the public internet. This essentially creates an isolated, secure environment for using the model – a critical requirement for sectors like government or finance. While some organizations prefer on-prem models for absolute control, the complexity of running a model of this size (likely hundreds of billions of parameters) and the need for specialized hardware make on-prem deployment impractical in most cases. Instead, Google might in the future offer Google Distributed Cloud options for Gemini (allowing the service to run in a customer-managed infrastructure or specific sovereign cloud regions). But even without that, current deployment via Google Cloud covers most enterprise needs with strong security guarantees.

To summarize this section: Gemini 2.5 Pro Enterprise AI comes readily accessible through Google’s cloud with enterprise-grade security, privacy, and manageability. Data stays protected (no training on customer data, encryption at rest/in-transit), administrators have control over usage and access, and the model can integrate with corporate data sources to truly become a part of the enterprise workflow. This thoughtful alignment of the technology with enterprise IT requirements is what transforms Gemini 2.5 Pro from just an impressive model into a practical enterprise AI solution.

Benchmark Performance: Gemini 2.5 Pro vs. Competitors

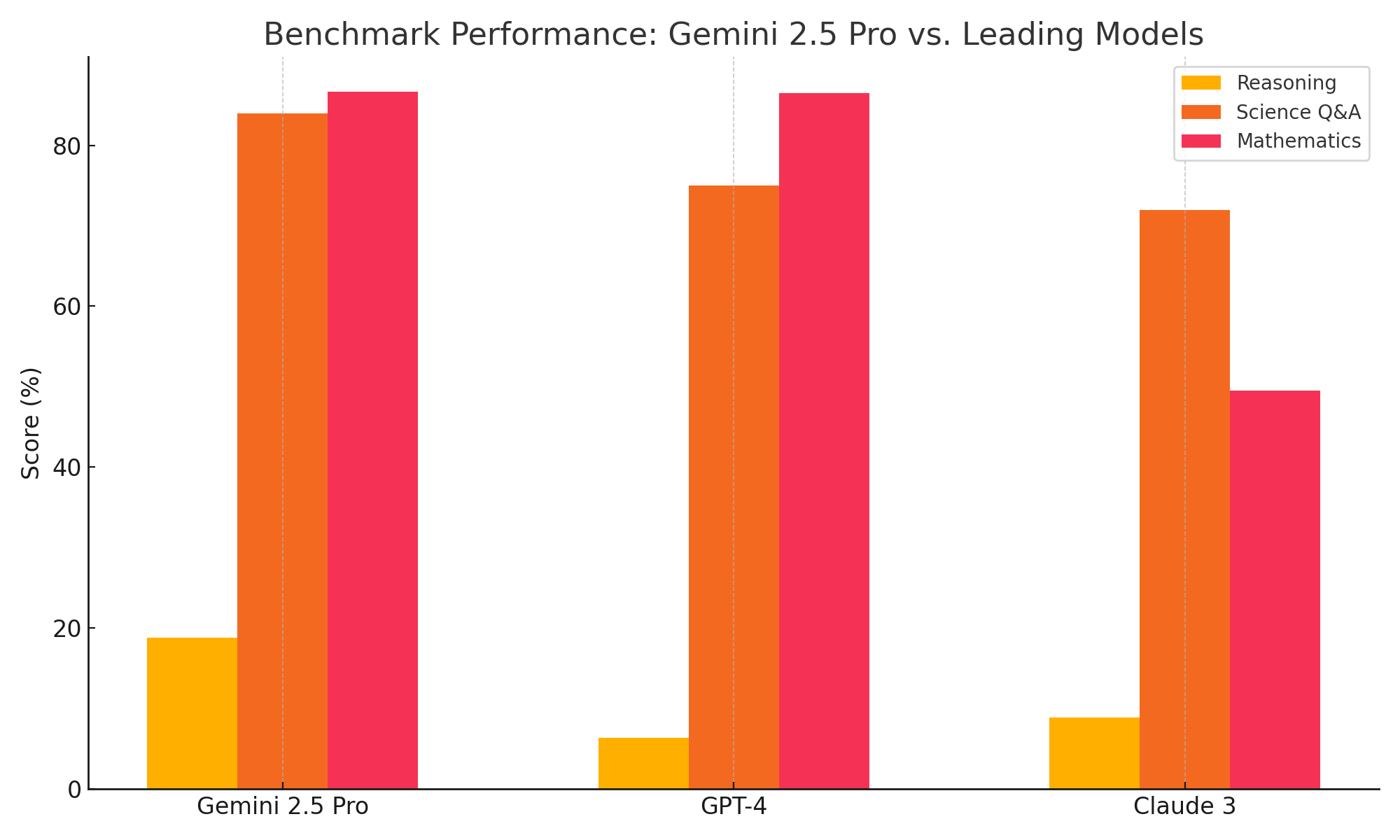

Gemini 2.5 Pro’s arrival has reshaped the competitive landscape of enterprise AI by establishing new performance highs in multiple domains. Google’s model was designed to excel in complex reasoning, coding, and knowledge tasks, and benchmark results confirm it outpaces many rival models from OpenAI and Anthropic on these fronts. In this section, we compare Gemini 2.5 Pro’s performance to that of other leading AI models – notably OpenAI’s GPT-4 and Anthropic’s Claude 3 – to illustrate where it leads and where the gaps are closing. A chart summarizing performance on key benchmarks (Reasoning, Scientific Q&A, and Mathematics) is included below for a visual comparison.

Gemini 2.5 Pro has been evaluated on a battery of academic and practical benchmarks. On advanced reasoning tests, such as Humanity’s Last Exam (a challenging evaluation spanning thousands of questions across domains), Gemini 2.5 Pro set a new state-of-the-art with a score of 18.8% (without using external tools). This may sound like a low percentage, but the test is extremely difficult – the score significantly exceeds OpenAI’s latest GPT-4.5 model (6.4%) and Anthropic’s Claude 3.7 (8.9%) on the same evaluation. In fact, at the time of release, Gemini 2.5 Pro led the next best model by a large margin on this human knowledge frontier test. The chart for “Reasoning” below reflects this, showing Gemini’s score roughly double that of the nearest competitor in that category.

On scientific and technical QA benchmarks, the pattern continues. Gemini 2.5 Pro scored 84.0% on the GPQA science benchmark (a graduate-level physics and science question set), outperforming both GPT-4 and Claude which scored in the 70s range. This indicates a superior ability to handle factual knowledge and multi-step scientific reasoning. The “Science” category in the chart illustrates Gemini’s edge in accuracy on science Q&A over its peers. Google achieved these results without resorting to test-time boosting techniques like majority voting or chain ensembling. (By contrast, some competitors apply such techniques to bump up scores at greater computational cost – for example, Anthropic’s extended “Claude 3.7 Sonnet” used multiple attempts to slightly improve its GPQA score to ~84%, still tying or trailing Gemini.)

Mathematics and coding are often the Achilles’ heel of language models, but Gemini 2.5 Pro demonstrates top-tier performance here as well. On the AIME 2025 math competition problems, Gemini achieved 86.7% accuracy on first attempt, essentially matching the best OpenAI model (which scored 86.5%) and vastly exceeding Claude 3.7’s 49.5%. Moreover, on an earlier math test (AIME 2024), Gemini reached 92% – showcasing its strength in logical problem solving. This is notable because math tasks benefit greatly from the “chain-of-thought” approach; Gemini’s built-in stepwise reasoning gives it a clear advantage in tackling complex math without external tools. The “Mathematics” bar in the chart shows Gemini neck-and-neck with OpenAI’s model at the top, leaving Claude far behind in this domain.

Performance on Key Benchmarks – Gemini 2.5 Pro vs. Leading Models. Gemini 2.5 Pro leads in complex Reasoning tasks (Humanity’s Last Exam) and Science QA (GPQA), and is on par for Mathematics (AIME). Higher scores indicate better performance (accuracy or problem-solving rate). Competitors shown are approximate equivalents of OpenAI GPT-4 and Anthropic Claude 3. Figures are drawn from published benchmark results.

As the chart and data suggest, Gemini 2.5 Pro has set a new state-of-the-art in many areas relevant to enterprise AI. It’s worth noting that these benchmarks correlate to real-world tasks: “Reasoning” might correspond to how well the model can analyze a complex scenario or draft a strategic report; “Science/Knowledge” to how accurately it can answer technical questions or summarize research; and “Mathematics/Problem-Solving” to how effectively it can handle planning, scheduling, or performing data analytics. In all these, Gemini shows a clear performance edge or parity with the best.

It’s not just academic: on code benchmarks, Gemini 2.5 Pro also shines. Google reported that on LiveCodeBench (an evaluation of code generation on live coding challenges), Gemini achieved 70.4% pass@1 (solving on first attempt), which is in line with or slightly below one OpenAI model variant (~74%) but likely ahead of others not reported. On Aider Polyglot (a code editing task across multiple programming languages), Gemini scored 68.6% (whole-file edit success), significantly beating other top-tier models (the next best, OpenAI’s, scored 60.4% on a comparable measure). These results confirm that Gemini 2.5 Pro isn’t just a natural language reasoner – it’s a potent coding assistant as well, capable of understanding and modifying complex codebases.

Interestingly, one area where a competitor topped Gemini was an “agentic coding” benchmark (SWE-bench Verified) that tests how well the model performs when it can act as an agent. There, Anthropic’s Claude 3.7 had about 70.3% vs Gemini’s 63.8%. This can likely be attributed to Claude’s longer experience or fine-tuning in tool use scenarios and its constitutional AI that keeps it on track. However, given Gemini’s rapid progress and its inherent architecture for reasoning, we can expect that gap to close. Furthermore, in general coding situations requiring reading and writing large amounts of code, Gemini’s 1M token context gives it a huge practical advantage over Claude’s current 100K (or even 500K in the enterprise version) and OpenAI’s 32K context limits. A software engineer testing Gemini 2.5 Pro noted the model could modify 18 different source files across a project in one session, completing a new feature in 45 minutes – something not easily achievable with smaller context windows. Benchmarks may not fully capture such real-world productivity boosts that stem from handling more context at once.

In terms of multilingual ability and multimodal performance, Gemini also shows leadership. It scored nearly 90% on a global multilingual knowledge test (MMLU), indicating strong capabilities across languages. And on visual reasoning tasks (like interpreting images or charts), Gemini’s multimodal nature gave it an edge, scoring ~81.7% on MMMU (a multimodal reasoning test), outperforming GPT-4.5’s 74.4% and Claude’s 75.0%. Neither GPT-4’s vision nor Claude had been fully tested on some of these internal benchmarks at the time, but Gemini’s performance suggests it is at least competitive with the vision-enabled GPT-4 and ahead of others where they lack vision support.

It is also worth mentioning that Gemini 2.5 Pro currently sits atop the Chatbot Arena (LMArena) leaderboard by a notable Elo margin, meaning in head-to-head comparison by humans it’s preferred over other models. This reflects not only raw task accuracy but also the quality of responses (clarity, helpfulness, etc.). Early reactions from developers who tried Gemini 2.5 Pro highlight that it “feels like Google has the best models again”, a sentiment referencing Google’s leapfrogging of competitors in certain capability areas.

In summary, when comparing Gemini 2.5 Pro vs. GPT-4 vs. Claude 3 on core metrics, Gemini emerges as the leader in reasoning and many knowledge tasks, roughly tied at the top in coding and math, and strongly competitive in every area including vision and multilingualism. GPT-4 (especially in its latest improved versions) remains a formidable general model and still slightly edges out in some narrow metrics (like factual QA as noted), but Gemini’s rapid progress has closed the gap and even reversed it in reasoning transparency and context handling. Anthropic’s Claude 3, while excellent in high context and known for its safety, now appears to lag in pure performance on complex problem-solving – though it still holds strengths in cooperative dialogue and possibly some coding agent use. For enterprise decision-makers, these results indicate that adopting Gemini 2.5 Pro can give their teams a state-of-the-art tool, one that empirically outperforms or matches the best from OpenAI and Anthropic in the tasks that matter for productivity.

Enhancing Enterprise Productivity with Gemini 2.5 Pro

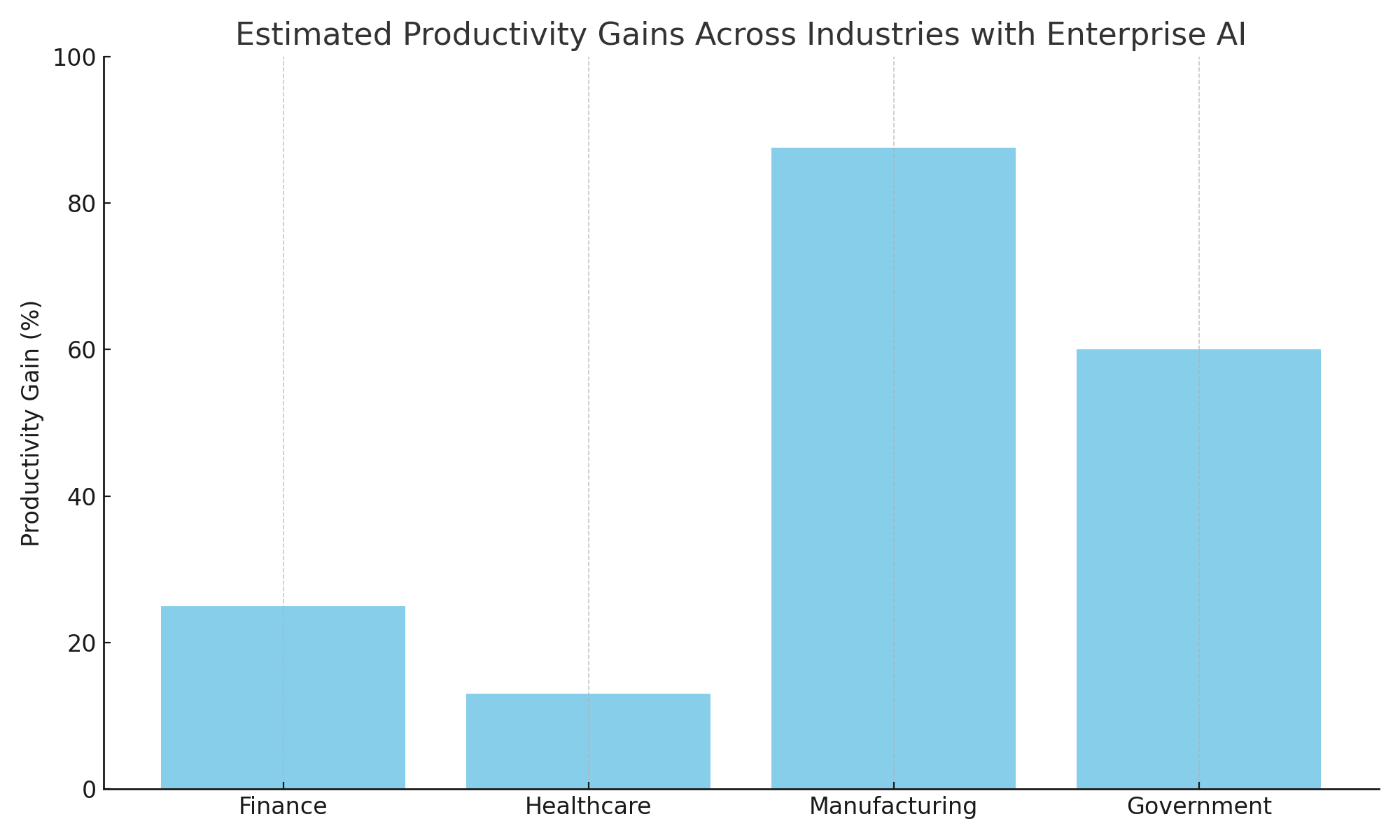

One of the most compelling reasons for enterprises to invest in AI like Gemini 2.5 Pro is the tangible productivity gains it can unlock across a variety of use cases. By automating routine tasks, assisting in complex work, and augmenting human capabilities, Gemini 2.5 Pro serves as a “force multiplier” for employees in different industries. In this section, we explore specific use cases in finance, healthcare, manufacturing, and government, illustrating how Gemini 2.5 Pro can streamline workflows and citing real-world examples of productivity improvements. A chart summarizing productivity gains across these sectors is included for quick reference.

Finance and Banking: In financial services, professionals deal with massive volumes of information – from market research and risk reports to compliance documents and client communications. Gemini 2.5 Pro can dramatically speed up these processes. For example, consider an investment analyst who needs to summarize quarterly earnings reports and extract key insights for dozens of companies. Instead of spending hours reading and taking notes, the analyst can have Gemini read all the reports (thanks to its large context window) and produce concise, accurate summaries with highlights of financial metrics and any forward-looking statements. The chain-of-thought reasoning allows the model to explain why certain metrics are important, increasing the analyst’s confidence in the summary. Similarly, in banking, Gemini can be used to analyze credit risk by collating data on a borrower from various documents and databases and providing a rationale for its risk assessment.

A concrete example of productivity improvement in this domain comes from insurance (a part of finance): one insurance provider integrated generative AI into their claims adjustment process and saw adjusters’ productivity increase by 25%, while errors dropped by 80%. This likely involved an AI (which could be akin to Gemini’s capabilities) helping to draft claim reports, check policy details automatically, or even generate recommendation letters for claim resolutions. For enterprise finance teams, similar gains are achievable. Routine tasks like generating portfolio reports, checking regulatory compliance across large texts, or drafting standardized communications can be automated. Auditing and compliance, often labor-intensive, can be accelerated as well – AI agents can scan through transactions or logs for anomalies in seconds rather than days. In fact, Google reported a case where an energy company’s safety audit process (analogous to a compliance audit in finance) was reduced from 14 days to one hour – a 99% time reduction – by using gen AI to automate the review of documents and forms. Financial audits and internal compliance reviews stand to benefit similarly from Gemini’s capabilities.

Healthcare: The healthcare industry is another area where Gemini 2.5 Pro can have a transformative impact. Clinicians and administrators face documentation burdens, from writing patient visit summaries and discharge notes to sifting through medical literature for the latest treatment guidelines. Gemini 2.5 Pro, with its specialized reasoning and summarization skills, can act as a tireless assistant. For instance, a doctor could use Gemini to automatically draft clinical notes after a patient appointment: the physician can input bullet points or a transcript of the encounter, and Gemini will generate a well-structured summary for the electronic health record, complete with the assessment and plan, in seconds. This directly translates to time saved on paperwork, allowing doctors to see more patients or focus on patient care. In a hospital setting, Gemini could also be employed to analyze large sets of patient data (while preserving privacy by operating within a secure environment) to identify trends or flag potential issues – such as scanning all ICU patients’ charts to find those that might be at risk of a certain complication, and explaining its reasoning for the flags it raises.

We already see real-world evidence of AI boosting healthcare productivity. One healthcare provider using Google’s generative AI in Workspace reported a 13% increase in productivity for their staff, as well as faster access to complex medical information through AI-driven summarization. Another case is MEDITECH, a health IT company, which integrated Gemini into its workflows and achieved an average of 7 hours saved per employee per week by automating tasks – effectively giving each employee almost a full workday back. Those 7 hours (out of a 40-hour week) represent nearly an 18% time savings, which is substantial in a healthcare context where staff are often overextended. The saved time can be redirected to direct patient care or to critical analysis that only humans can perform. In addition, by offloading repetitive tasks to AI, healthcare workers experience improved job satisfaction (as noted by MEDITECH) because they can focus more on strategic or interpersonal aspects of their job rather than paperwork. Gemini 2.5 Pro’s multimodal ability can also aid healthcare: for example, it can interpret medical images or charts when combined with vision models – a doctor could paste a snippet of an EKG or lab chart and ask Gemini for an analysis, and while it won’t make final diagnoses, it can speed up the recognition of patterns or anomalies, serving as a second pair of eyes.

Manufacturing and Industrial Operations: In manufacturing, time is money. Whether it’s on the factory floor or in the R&D department, the ability to quickly analyze data, troubleshoot issues, and optimize processes is crucial. Gemini 2.5 Pro can assist engineers and managers in numerous ways. One use case is maintenance and troubleshooting: manufacturing equipment often generates logs and error messages; Gemini can ingest weeks or months of log data (thanks to the long context window) and identify the sequence of events leading to a failure, even across multiple systems. It can then suggest likely causes and potential fixes, explaining the reasoning (which is vital for engineers to trust its suggestions). This turns what might be a multi-day root cause analysis into a much faster process. Another use case is process optimization: Gemini can take in operational data (yields, machine settings, throughput stats) and natural language descriptions of issues, and then provide recommendations for improving efficiency. Because it has been trained on a vast array of knowledge, it might surface a solution from a different context – for instance, applying a pattern from software optimization to a manufacturing workflow – that humans might not immediately see.

Real examples show staggering improvements. Enpal, a solar energy company, used a generative AI solution to automate part of its solar panel installation planning process – specifically, generating quotes for customers that involve assessing roof sizes and panel layouts. The result was an 87.5% reduction in time required, cutting what was a 2-hour manual process down to 15 minutes. This kind of acceleration can be attributed to AI’s ability to rapidly analyze aerial images of a roof (a vision task), cross-reference with product databases, perform calculations, and output a formatted proposal. With Gemini 2.5 Pro, such a task could be done even more interactively: the AI could generate a draft layout, then adjust it on the fly if the user says the customer wants to maximize output or minimize cost, etc., all while explaining the trade-offs. In a broader manufacturing context, tasks like quality control documentation can be expedited by AI as well. For example, after a production run, instead of writing a long report, an engineer can feed data and notes to Gemini and get a first draft of a QA report or incident report, which they then quickly refine. Companies like Bosch and Suzano (as noted by Google) have leveraged Gemini or similar models to streamline processes and data queries, achieving up to 95% reduction in query time for employees when accessing complex enterprise data via natural language. This means what used to take hours searching databases and reading manuals can now take seconds by asking Gemini, “What were last quarter’s downtime causes in Plant A vs Plant B?”

Government and Public Sector: Government agencies and public sector organizations are often information-heavy and resource-constrained, making them ripe for AI-driven productivity improvements. Use cases here include policy analysis, regulatory compliance, constituent services, and more. For example, a government legal team could use Gemini 2.5 Pro to analyze a new piece of legislation – the AI could summarize the key points, compare it to existing laws, and even highlight sections that might conflict with current regulations, all with citations and reasoning. This could save lawyers and analysts many hours of combing through legal texts. In public sector planning, if a city council is considering a new infrastructure project, Gemini could take in all the relevant reports, budgets, and community feedback, and produce a distilled briefing or even answer specific questions (“What were the top environmental concerns mentioned in public comments for Project X?”) which would allow officials to make informed decisions faster.

An area where generative AI has proven especially useful is in case management and social services. A notable case is Bayes Impact, a nonprofit working with social service providers: they implemented an AI assistant (with capabilities comparable to Gemini) to help caseworkers draft individualized action plans and manage cases. The result was that caseworkers saved 25 hours of work per week on average – essentially doubling their capacity to serve clients. In a government context, that means an employee who used to spend most of their week on paperwork can now dedicate more than half of that time to direct client interaction or strategic planning. Similarly, internal government audits and intelligence analysis can be sped up dramatically. A government audit that might require reading through thousands of procurement documents for irregularities can be handled by Gemini in a fraction of the time, flagging potential issues for a human auditor to review (with reasons for each flag). We already saw an enterprise analog: an energy company using AI saw an audit process go from 14 days to 1 hour. Government processes, often slower, stand to gain even more relatively. Another example is cybersecurity for public sector (which overlaps with security use cases we’ll cover next): Pfizer’s cybersecurity team, for instance, found that AI could aggregate threat data from multiple sources and cut analysis times “from days to seconds” – a critical improvement when responding to cyber incidents. This kind of speed can be life-saving or mission-critical in government operations as well (such as emergency response or intelligence threat assessments).

The chart below illustrates some of these productivity gains across industries, expressed as percentage improvements or time reductions, based on reported case studies and pilot implementations:

Estimated Productivity Gains Across Industries with Enterprise AI. Real-world implementations of generative AI (including Gemini 2.5 Pro and similar models) have led to significant productivity improvements: roughly 25% productivity increase in finance/insurance operations, about 13% in healthcare workflows, over 85% reduction in time for certain manufacturing tasks, and over 60% time savings in public sector case work. These figures highlight the transformative potential of AI assistants in various domains.

As shown, while the exact numbers vary by use case, the trend is clear: double-digit percentage improvements are common, and in process-intensive tasks, time reductions well beyond 50% are achievable. Even a modest 10-15% productivity boost enterprise-wide can translate to enormous value – imagine a corporation being able to accomplish what used to take 5 days in 4, effectively gaining an extra workday every week. Gemini 2.5 Pro makes these gains possible by efficiently handling the heavy lifting of reading, writing, and reasoning through information.

It’s important to note that these productivity gains go hand-in-hand with quality improvements when managed correctly. Because Gemini can show reasoning, employees can verify its work, leading to fewer errors (as the insurance example of 80% error reduction shows). In healthcare, using AI to draft documentation not only saves time but can also result in more consistent and thorough records, since the AI doesn’t forget to include pertinent details that a rushed human might miss. In manufacturing, faster analysis means downtime is minimized and products can be delivered sooner, improving service levels.

In conclusion, Gemini 2.5 Pro acts as an accelerator for enterprise workflows. By integrating it into various departments – be it an AI assistant that helps finance teams prepare reports, a chatbot that aids doctors in writing notes, an analysis tool for engineers, or a policy advisor for government staff – organizations can significantly reduce the time spent on information processing tasks. The end result is employees freed to focus on higher-level work and human judgment, with the AI taking care of the grunt work in the background. As the case studies and our chart depict, the boost in productivity and efficiency is not theoretical; it’s already being realized by early adopters across industries, painting a very promising picture for the widespread deployment of Gemini 2.5 Pro in enterprises.

Security, Privacy, and Trust Enhancements

For security professionals and IT risk managers, adopting an AI model like Gemini 2.5 Pro is not just about gains in capability – it’s equally about maintaining (or improving) the security posture of the organization. In this section, we delve deeper into how Gemini 2.5 Pro enhances security and trust, both by helping security teams in their mission and by ensuring the AI itself operates in a secure, compliant manner. We will also touch on a comparison with tools like Microsoft’s Security Copilot to understand the landscape.

AI as a Security Analyst’s Assistant: Modern cybersecurity operations generate overwhelming amounts of data – logs, alerts, incident reports, threat intelligence feeds, etc. Gemini 2.5 Pro’s strong reasoning abilities and large context capacity make it an excellent candidate to serve as a virtual security analyst. It can ingest enormous log files or alerts (for example, an entire day’s worth of network logs across a global enterprise) and identify patterns or anomalies that might indicate a cyber attack, doing so much faster than a human could. The key advantage is Gemini’s ability to correlate events: it might notice that a seemingly benign server error message, when combined with a pattern of failed logins and a subtle change in a configuration file, fits the profile of a known attack – and it can articulate this reasoning clearly. This is analogous to how a seasoned security analyst thinks, but Gemini can cross-reference far more data points simultaneously. Pfizer’s cybersecurity team, for instance, used generative AI to aggregate data from multiple sources and achieved analysis that used to take days in mere seconds. We can envision Gemini 2.5 Pro doing the same: compiling data from on-prem SIEM systems, cloud security dashboards, and threat intel reports, then summarizing the overall risk and highlighting urgent issues, effectively acting as a tier-1 analyst triaging incidents at machine speed.

Another use in security is threat hunting and research. Gemini can read through threat reports, vulnerability databases, and even dark web forum dumps (if provided) to help security teams stay ahead of threats. And thanks to its transparent chain-of-thought, it can provide a rationale: e.g., “I suspect these three alerts are related to the XYZ malware because they exhibit A, B, and C patterns that were described in yesterday’s threat intel report,” with references. This builds trust in its analysis. A cybersecurity company called NewPush leveraged Google’s AI in Workspace for tasks like this – automating threat research and report drafting – and saved their analysts 12 hours a week. That’s 12 hours they can now spend on proactive defense rather than rote work. Similar benefits would accrue to any enterprise SOC (Security Operations Center) that deploys Gemini: their highly skilled (and often overworked) analysts can offload log parsing and first-draft report writing to the AI, focusing instead on decision-making and incident response. Microsoft’s Security Copilot (which uses OpenAI’s GPT-4) is an example of such an AI assistant tuned for security, helping summarize incidents, suggest remediation steps, and answer questions about threats. Gemini 2.5 Pro can play a comparable role – and potentially excel due to its reasoning clarity. It can present its analysis in a step-by-step format (“Step 1: I checked endpoint logs… Step 2: I compared with threat database… Step 3: This sequence matches a known pattern of attack X”), allowing the security team to follow along and trust the conclusions.

Data Privacy and Protection: When it comes to the AI’s operation, Gemini 2.5 Pro is designed to uphold strict data privacy. As mentioned earlier, any data an enterprise inputs into Gemini (through Vertex AI or Workspace integrations) is not used to retrain Google’s models. This one-way use of data (only to generate output for the user, not to improve the model for others) prevents sensitive corporate or government information from leaking into the AI’s global knowledge. OpenAI’s ChatGPT Enterprise and Anthropic’s Claude Enterprise follow the same principle – it’s becoming a standard for enterprise AI offerings. Additionally, encryption and tenant isolation ensure that one organization’s data and prompts are logically separated from another’s, and even within an organization, as with Microsoft Copilot, existing permission structures ensure users only get answers from data they are allowed to see. In the context of Gemini, if used via Google Workspace (Duet AI), it similarly does not reveal info a user isn’t permitted to access. For instance, if an employee asks Gemini a question about “Project Alpha” but they don’t have access to Project Alpha documents, the model will not pull that info (assuming integration with Google Drive/Docs with permissions). This prevents accidental data leaks across departments and maintains internal security boundaries.

Gemini 2.5 Pro also likely inherits Google’s strong DLP (Data Loss Prevention) features. Enterprises can set up rules such as “do not allow the AI to output any string that looks like a credit card number or social security number.” If someone tried to prompt Gemini to reveal such info (whether from memory or from provided content), the system can filter or mask it, preventing sensitive data from appearing on screen. These kinds of guardrails are essential in sectors like healthcare (to avoid disclosing patient identifiers inappropriately) or finance (to protect personal financial data).

Robustness Against Prompt Attacks: A security professional will also be concerned with how robust the model is against manipulation – e.g., prompt injection attacks where a user might try to get the model to ignore its instructions or reveal system prompts. Google, OpenAI, and others have been working to harden models against such exploits. With Gemini’s training focused on chain-of-thought and reasoning, it might actually be better at understanding when a prompt is trying to subvert it. The model can be trained to recognize instructions like “ignore previous directives” as malicious and refuse. Microsoft’s Copilot explicitly filters out attempts to get at the system prompt or to produce disallowed content. We can assume Google has done similar with Gemini – especially because as an enterprise tool, any such leakage or misbehavior could be a showstopper for adoption. The reinforcement learning phase of Gemini’s training very likely included numerous scenarios of “bad user prompts” to teach it to comply with policies. In practice, this means security teams can trust that Gemini will stay within the bounds set by the organization – it will follow the ethical and security guidelines imparted to it and is difficult for an end-user to trick into doing otherwise.

Auditability and Compliance: Enterprises often need to demonstrate compliance with regulations or internal policies, and Gemini 2.5 Pro can assist here as well. It can maintain logs of its outputs and reasoning that compliance officers could review. Also, consider industries like finance that require retention of communications – if employees are using Gemini to draft emails or advice, those outputs can be archived just like any communication. Microsoft’s approach with Copilot is to integrate with compliance tooling (they allow retention policies to be applied to Copilot interactions, via Microsoft Purview). Google will likely ensure that interactions with Gemini can be captured by Google Vault or equivalent, if needed, for e-discovery or compliance audits. This means using the AI does not create a blind spot in a company’s record-keeping.

Security Benchmarking and Evaluation: It’s worth noting that Gemini 2.5 Pro has also been evaluated on benchmarks relevant to security and factual reliability. For instance, its performance on the SimpleQA factuality test was solid (around 53% vs some competitor at 62%), indicating it tries to base answers on verifiable info. In an enterprise setting, factual accuracy is a part of security – a hallucinated answer could be seen as a breach of integrity. Google’s emphasis on high-quality training data and the chain-of-thought approach is aimed at reducing hallucinations and making the model more truthful and reliable. Early testers have noted Gemini’s remarkable awareness of its own limitations – when asked about the limitations of large language models, Gemini 2.5 Pro provided a nuanced and accurate self-assessment. This kind of self-reflection is a sign that the model might be less prone to overstep or provide false information confidently. For security pros, an AI that knows to say “I’m not sure” or “I don’t have access to that information” is far safer than one that makes something up.

Comparison to Microsoft Copilot (Security Copilot): Microsoft’s Security Copilot, introduced in early 2023, is basically an application of GPT-4 specifically fine-tuned and integrated for security scenarios. It can summarize incidents from Microsoft Sentinel (their SIEM), correlate vulnerabilities, and provide guidance on mitigation. Gemini 2.5 Pro, while not a pre-packaged “Security Copilot” product, provides the core engine that could power similar capabilities on Google’s security platform or third-party platforms. In fact, we may see Google integrate Gemini into its Chronicle security analytics (Google’s cloud-native SIEM) or into Workspace security center for things like analyzing DLP incidents. One advantage Gemini could have is its ability to incorporate images or screenshots in an analysis – for example, an analyst could give it a screenshot of an error or suspicious email and Gemini can factor that in. Also, Gemini’s huge context means it can take in all relevant data in one go: Microsoft’s solution might have to chunk data because of context limits, whereas Gemini could, say, consume a full day’s network traffic log of millions of lines in a single query if needed.

Enhancing Security Team Skills: Finally, Gemini serves as a training tool for security teams. It can function as a mentor that explains complex concepts in simple terms. A junior security analyst could ask Gemini to explain what a particular malware does or what a CVE (Common Vulnerabilities and Exposures) report means in layman’s terms. By educating team members on demand, it raises the overall security knowledge in an organization. This is especially useful given the shortage of skilled cybersecurity professionals – Gemini can help bridge the gap by making each analyst more effective and knowledgeable, as needed.

In conclusion, Gemini 2.5 Pro enhances enterprise security on two fronts: it helps security teams work smarter and faster, and it adheres to rigorous security and privacy standards itself. Its transparent reasoning fosters trust – a crucial factor when an AI is assisting in high-stakes decisions like cyber incident response. The strong data privacy stance and compliance features mean organizations can deploy it without jeopardizing sensitive information. When compared to other offerings like Microsoft’s Copilot or OpenAI’s solutions, Gemini stands out with its combination of reasoning clarity and integration into Google’s secure ecosystem. For security professionals, this means they can harness a powerful new ally that amplifies their capabilities while still operating within the guardrails that protect the enterprise.

Conclusion

Gemini 2.5 Pro Enterprise AI represents a significant leap forward in the enterprise AI landscape, combining cutting-edge model architecture with the practical features organizations need for productivity and security. Through this detailed exploration, we have seen how Gemini 2.5 Pro’s unique “thinking” architecture – with native chain-of-thought reasoning, multimodal capabilities, and a massive context window – enables it to tackle complex tasks and provide transparent, trustworthy outputs. These strengths directly translate into real-world benefits: enterprises across finance, healthcare, manufacturing, and government are witnessing notable productivity gains, from double-digit efficiency boosts to order-of-magnitude reductions in process times, when they leverage generative AI solutions akin to Gemini. Equally important, Gemini 2.5 Pro is built with enterprise-grade security in mind, ensuring that sensitive data remains protected (no training on customer data), compliance standards are met, and AI outputs can be audited and controlled to align with organizational policies.

In comparing Gemini 2.5 Pro to its peers – Microsoft Copilot, Anthropic Claude 3, and OpenAI GPT-4 – it’s evident that we are entering a era of robust competition and specialization in enterprise AI. Each solution has its forte, but Gemini 2.5 Pro particularly stands out for its balanced excellence: it not only matches or exceeds state-of-the-art performance on reasoning, coding, and knowledge benchmarks, but does so while offering clarity (explainable steps) and breadth (images, code, text all-in-one). For enterprise IT leaders, this means Gemini can serve as a powerful general-purpose AI brain embedded in their operations – whether as a developer assistant in code repositories, a decision supporter analyzing business data, or a content generator aiding marketing and HR teams.

From a security professional’s perspective, Gemini 2.5 Pro demonstrates that enhancing productivity with AI does not have to come at the expense of security. On the contrary, we discussed how Gemini can augment security operations themselves, and how its design and Google’s deployment model ensure strong privacy safeguards and compliance. This alignment of security and productivity is crucial for adoption: it lowers barriers for highly regulated industries (like finance and healthcare) to embrace AI and unlock its value. When an AI system can save an analyst or a caseworker 25 hours a week while maintaining strict confidentiality, the risk-benefit calculus starts to look very favorable.

It is also worth looking ahead. Gemini 2.5 Pro is labelled “Pro Experimental” at this stage, indicating that Google will continue to refine it (and eventually drop the “experimental” tag). We can anticipate further improvements: perhaps an officially supported 2-million token context in the near future, even tighter integration with Google’s ecosystem (imagine Gemini advising in Google Sheets like Copilot does in Excel), and wider rollout to Vertex AI customers globally. Google’s commitment to baking “thinking capabilities” into all future modelsblog.google suggests that even smaller Gemini versions might inherit some of these advanced features, making AI more accessible across different price/performance tiers for enterprise use.

For general tech readers and enterprise decision-makers, the emergence of Gemini 2.5 Pro and its demonstrated benefits serve as a reminder that the AI revolution in the workplace is accelerating. Just a couple of years ago, the idea of an AI that could read hundreds of pages of regulations and produce a compliant policy draft in minutes, or debug dozens of files of code autonomously, or reduce a two-week audit to an hour, would have seemed far-fetched. Yet here we are in 2025, with enterprises actually piloting and in some cases fully deploying these capabilities. Adopting such technologies thoughtfully – understanding their strengths, setting guidelines for their use, and continuously monitoring outcomes – will be key to harnessing their full potential.

In conclusion, Gemini 2.5 Pro exemplifies how AI can enhance both productivity and security in enterprise settings. It empowers employees to accomplish more in less time, tackle problems once thought too complex for automation, and do so with a safety net of transparency and compliance. Companies that leverage Gemini 2.5 Pro effectively will likely gain a competitive edge, as their workforce is amplified by one of the most advanced AI systems available. Meanwhile, the healthy competition with other AI providers will spur further innovation – benefiting enterprises and users with better tools and lower costs over time. As we move forward, one thing is clear: enterprise AI is no longer just about automating tasks; it’s about creating a collaborative intelligence where human expertise and AI “thinking” work hand-in-hand to drive better outcomes, securely and efficiently. Gemini 2.5 Pro is a bold step in that direction, heralding a new era of intelligent, secure enterprise productivity.

References

- Gemini 2.5 Pro Overview

https://deepmind.google/technologies/gemini - Google Cloud – Vertex AI with Gemini

https://cloud.google.com/vertex-ai/generative-ai/docs/gemini/overview - Introducing Gemini 1.5 with 1 Million Token Context

https://blog.google/technology/ai/google-gemini-15/ - Google AI Studio – Build with Gemini Pro

https://makersuite.google.com - Benchmark Results for Gemini Pro on Chatbot Arena

https://lmsys.org/blog/2024-03-25-arena/ - Claude 3 Release by Anthropic

https://www.anthropic.com/index/claude-3 - OpenAI GPT-4 Technical Overview

https://openai.com/research/gpt-4 - Microsoft Copilot for Microsoft 365

https://www.microsoft.com/en-us/microsoft-365/copilot - Microsoft Security Copilot

https://www.microsoft.com/en-us/security/blog/2023/03/28/introducing-microsoft-security-copilot/ - Hugging Face LLM Leaderboard

https://huggingface.co/spaces/HuggingFaceH4/open_llm_leaderboard - Anthropic Claude Enterprise Features

https://www.anthropic.com/enterprise - Google Duet AI for Workspace

https://workspace.google.com/duet-ai/ - MEDITECH Uses Gemini for Healthcare Productivity

https://cloud.google.com/customers/meditech - Enpal AI Deployment Case Study

https://cloud.google.com/customers/enpal - Pfizer Cybersecurity with AI

https://cloud.google.com/customers/pfizer