Demystifying AI Energy Consumption: Everyday Comparisons That Make It Real

As artificial intelligence (AI) becomes increasingly integrated into our daily lives—from voice assistants and personalized recommendations to generative tools that write essays or produce artwork—the resources that power these intelligent systems remain largely invisible to most users. While discussions surrounding AI often focus on accuracy, speed, bias, or ethics, one critical factor is frequently overlooked: energy consumption.

AI systems, particularly those driven by large-scale machine learning models, consume vast amounts of electricity during both their training and inference phases. Training a cutting-edge language model such as GPT-4, for instance, can require thousands of graphics processing units (GPUs) operating continuously for weeks or months. Even after training, delivering AI-generated responses to users—known as inference—demands a global network of data centers working around the clock. These processes, though seemingly intangible, have a real-world energy footprint, contributing to greenhouse gas emissions and placing additional stress on power infrastructure.

The growing reliance on AI at both individual and industrial levels raises urgent questions about sustainability. How much energy does an AI query actually use? Is it comparable to charging a phone or powering a home appliance? Are AI services disproportionately contributing to global electricity consumption, and if so, what can be done to mitigate their impact? Addressing these questions requires translating technical metrics—teraflops, kilowatt-hours, carbon intensity—into relatable, everyday terms that resonate with the general public.

This blog post aims to bridge that understanding gap by examining AI energy consumption through the lens of everyday activities. Drawing from industry reports, academic research, and expert projections, we will provide a comprehensive analysis of how AI systems consume energy and what that means in the context of our broader energy landscape. With relatable analogies, side-by-side comparisons, and visual illustrations, readers will gain a clearer picture of how much power it takes to run AI—and what’s at stake if we ignore this issue.

In doing so, we will also explore potential pathways to reduce AI’s energy footprint, including technological innovations, policy frameworks, and user-level choices. Whether you are an enterprise leader deploying AI models at scale or an individual user experimenting with AI chatbots, understanding the hidden energy costs behind each prompt is essential to fostering a more responsible digital future.

How AI Uses Energy

Artificial Intelligence, particularly in the form of machine learning and deep learning models, relies on computationally intensive processes that consume significant amounts of energy. To understand the energy dynamics of AI systems, it is necessary to distinguish between two primary operational phases: training and inference. Each phase involves different types of workloads, hardware requirements, and durations, which together determine the total energy footprint of an AI model.

AI Training: An Energy-Intensive Endeavor

The training phase of an AI model involves feeding vast amounts of data through complex neural network architectures in order to optimize billions—sometimes trillions—of parameters. This process is computationally intensive, often requiring thousands of high-performance GPUs or specialized accelerators like Google’s Tensor Processing Units (TPUs) operating in parallel across multiple data centers.

Training a large language model (LLM) such as OpenAI’s GPT-3, which contains 175 billion parameters, reportedly consumed approximately 1,287 megawatt-hours (MWh) of electricity. To put this into perspective, that is roughly equivalent to the annual electricity consumption of 120 average U.S. homes. GPT-4, believed to be significantly larger, likely consumed even more energy during its training phase, although exact figures remain undisclosed. These computations may span several weeks or even months, with GPUs operating at near-maximum capacity for extended durations.

The energy cost of training does not solely include the compute cycles; it also includes auxiliary systems such as cooling mechanisms, data storage, and redundancy safeguards. Modern data centers are equipped with advanced liquid or air-based cooling systems to maintain optimal temperatures for hardware. These systems can add anywhere from 10% to 40% overhead to the total energy consumption, depending on the facility’s power usage effectiveness (PUE).

Furthermore, AI training is not a one-time event. Model updates, retraining on newer data, and fine-tuning for specific tasks are all additional training iterations that contribute to cumulative energy consumption. For example, models deployed in rapidly evolving domains such as finance or medicine require continual retraining to remain accurate and relevant.

Inference: The Invisible Daily Energy Drain

While training captures public attention due to its scale and intensity, the inference phase—when a trained model is deployed and used to generate predictions or responses—is equally, if not more, significant in terms of overall energy impact. Inference workloads occur every time a user interacts with an AI system: asking a chatbot a question, translating a sentence, identifying objects in an image, or running a recommendation algorithm.

Though an individual inference request consumes a tiny fraction of the energy required to train a model, the sheer scale of inference across billions of users makes it a substantial and growing contributor to AI-related energy demand. For example, it is estimated that ChatGPT processes over 100 million prompts per day. Even if each prompt consumes only a few watt-hours of energy, the aggregate impact is massive.

Energy requirements for inference vary depending on the model size, the type of hardware used, and system efficiency. Smaller models or distilled versions of large models can deliver faster, less energy-intensive responses. However, deploying powerful models such as GPT-4 or Meta’s LLaMA 2 at scale involves cloud infrastructure capable of sustaining high-throughput, low-latency operations around the clock.

A 2022 study by the University of Massachusetts Amherst estimated that serving a single AI-generated sentence from a large model consumes about as much energy as turning on a 60-watt light bulb for several seconds. When extrapolated to billions of sentences per day, the implications become substantial. In aggregate, AI inference could soon rival or exceed the energy demands of training, especially as more applications transition from traditional software to AI-enhanced platforms.

Hardware and Infrastructure Dependencies

The energy efficiency of AI systems is closely linked to the underlying hardware and infrastructure. Traditional central processing units (CPUs) are ill-suited for AI workloads, which has led to the widespread adoption of GPUs, TPUs, and increasingly, custom accelerators designed specifically for machine learning tasks. These specialized chips are optimized for parallel computation and can significantly reduce the energy-per-operation for AI tasks.

However, these chips are deployed within massive hyperscale data centers, which are themselves resource-intensive to build and operate. Facilities run by providers such as Microsoft Azure, Google Cloud, and Amazon Web Services are strategically located near abundant electricity sources, and their operators often negotiate favorable energy contracts to accommodate their high and continuous power demands.

Moreover, the efficiency of a data center is often measured by its Power Usage Effectiveness (PUE), defined as total facility energy divided by the energy delivered to computing equipment. The closer the PUE is to 1.0, the less energy is spent on overhead such as cooling and power distribution, and the more efficient the data center. While modern cloud facilities achieve impressive PUE scores (e.g., 1.1–1.2), the growing volume of AI workloads means that even marginal inefficiencies can translate into significant energy waste on a global scale.
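As a minimal sketch of how the ratio works, the snippet below computes PUE and the implied overhead for a hypothetical facility. The function names and the 1,000 kWh and 1,150 kWh figures are illustrative assumptions, not measurements from any real data center.

```python
def pue(total_facility_kwh: float, it_equipment_kwh: float) -> float:
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    return total_facility_kwh / it_equipment_kwh


def overhead_fraction(pue_value: float) -> float:
    """Non-IT energy (cooling, power delivery, etc.) as a fraction of IT energy."""
    return pue_value - 1.0


# Hypothetical facility: 1,000 kWh reaches the servers, 1,150 kWh is drawn in total.
example_pue = pue(1150.0, 1000.0)
print(f"PUE = {example_pue:.2f}, overhead = {overhead_fraction(example_pue):.0%}")
```

Read this way, a PUE of 1.1 to 1.4 corresponds to the 10% to 40% overhead range mentioned earlier.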

A Compounding Challenge

A critical challenge with AI energy consumption is that both training and inference scale with demand. As organizations seek to adopt AI across more functions, the number of models, instances, and user queries continues to rise. Unlike traditional software, where energy costs remain relatively stable after deployment, AI systems exhibit dynamic and compounding energy profiles.

For instance, a single AI-powered customer support chatbot might serve millions of users concurrently, generating thousands of inferences per second. In industries such as healthcare, finance, and manufacturing, AI models are being deployed to make real-time decisions—each involving inference operations that must be executed rapidly and reliably.

Thus, the energy footprint of AI is not confined to sporadic high-intensity training events, but rather extends into a persistent and growing operational phase that demands continuous power.

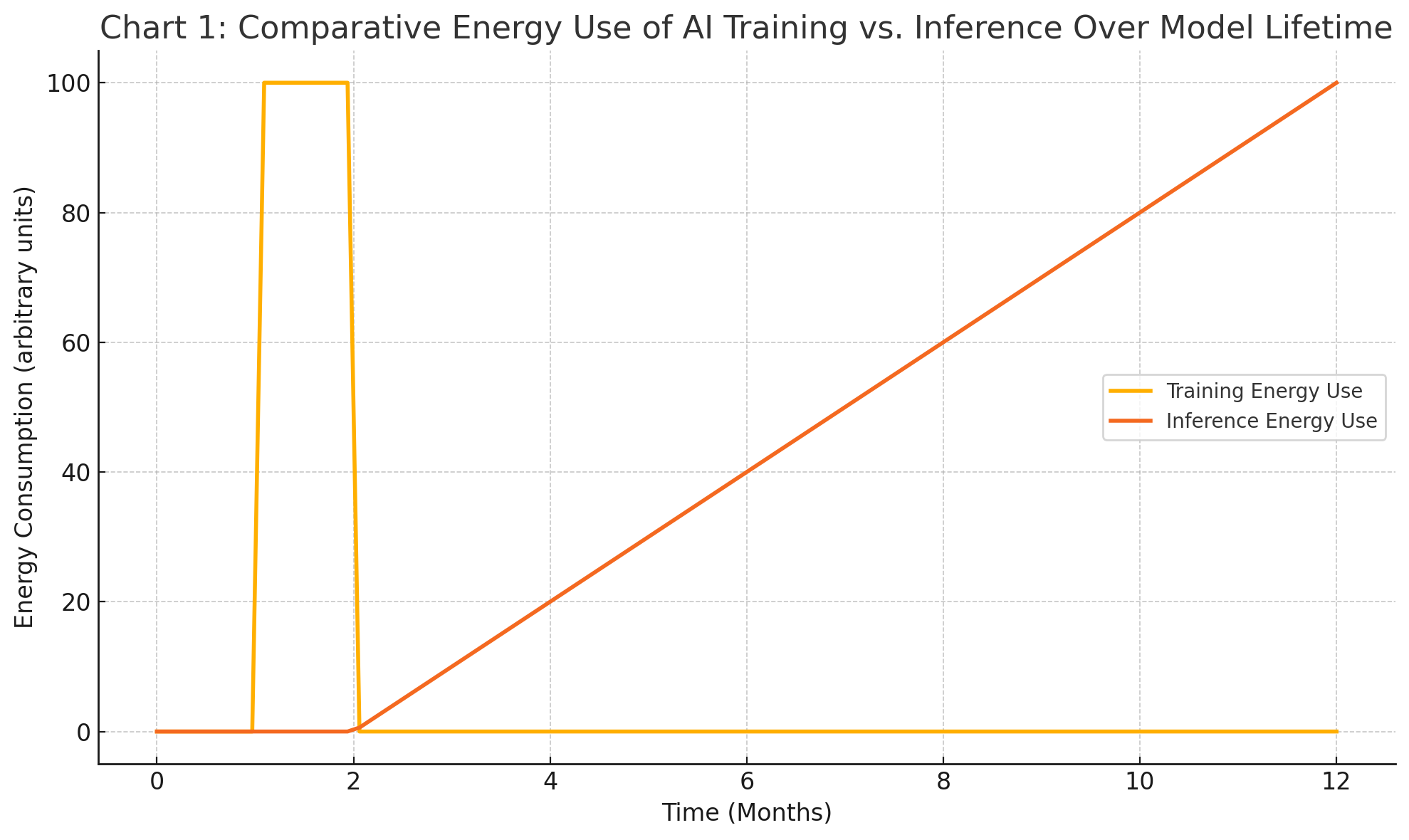

Comparing Training vs. Inference Energy Use

To illustrate the dichotomy between training and inference, consider the following conceptual chart:

This visualization underscores a vital insight: while training is often the most energy-intensive phase in the short term, inference can overtake it in the long term due to persistent and distributed usage patterns.
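To make the crossover concrete, here is a rough sketch that estimates how many days of inference it takes to match a one-time training cost. It reuses the 1,287 MWh GPT-3 training estimate and the 100 million prompts per day mentioned above; the 0.005 kWh per prompt is an illustrative assumption within the range discussed in this post, and `breakeven_days` is a hypothetical helper name.

```python
def breakeven_days(training_mwh: float, inference_kwh_per_day: float) -> float:
    """Days of inference needed for cumulative inference energy to equal training energy."""
    if inference_kwh_per_day <= 0:
        raise ValueError("daily inference energy must be positive")
    return training_mwh * 1000.0 / inference_kwh_per_day


TRAINING_MWH = 1287.0          # GPT-3 training estimate quoted earlier
PROMPTS_PER_DAY = 100_000_000  # ChatGPT volume estimate quoted earlier
KWH_PER_PROMPT = 0.005         # assumption: illustrative per-prompt energy

daily_inference_kwh = PROMPTS_PER_DAY * KWH_PER_PROMPT
days = breakeven_days(TRAINING_MWH, daily_inference_kwh)
print(f"Inference matches training energy after about {days:.1f} days")
```

Under these assumptions the crossover arrives within days rather than years, though the result is only as good as the per-prompt estimate.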

Everyday Comparisons: Making AI Energy Use Tangible

Understanding the energy consumption of artificial intelligence systems can be challenging due to the highly technical nature of the metrics involved. Measurements such as teraflops, petaflop-days, or kilowatt-hours (kWh) may be meaningful to engineers and data scientists but are largely abstract for the average individual. To bridge this comprehension gap, it is beneficial to contextualize AI energy usage in terms of everyday human activities and common household energy benchmarks. These analogies serve to translate complex data into relatable comparisons that highlight the real-world impact of AI on energy infrastructure and environmental sustainability.

Translating Energy into Familiar Terms

Energy consumption is typically measured in kilowatt-hours, which represents the amount of energy used by a 1,000-watt appliance running for one hour. This metric provides a convenient basis for comparing AI workloads to everyday activities. For example, a standard electric oven operating at 2,000 watts consumes 2 kilowatt-hours of energy in a single hour. Similarly, charging a modern smartphone typically consumes around 0.01 kilowatt-hours.
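The conversion itself is simple enough to sketch in a few lines. The oven figures come from the paragraph above; the 5-watt, two-hour phone charge is an assumed example chosen to land on roughly 0.01 kWh.

```python
def energy_kwh(power_watts: float, hours: float) -> float:
    """Energy in kilowatt-hours for a device drawing power_watts for the given hours."""
    return power_watts * hours / 1000.0


oven_kwh = energy_kwh(2000, 1)   # 2,000 W oven for one hour
phone_kwh = energy_kwh(5, 2)     # assumption: 5 W charger running for two hours

print(f"Oven: {oven_kwh} kWh, phone charge: {phone_kwh} kWh")
```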

By converting AI-related energy data into these accessible equivalents, the scale of AI’s energy demands becomes more tangible. For instance, the energy required to generate a single response from a large language model like GPT-4 is estimated to range between 0.0005 and 0.01 kWh, depending on model complexity, server efficiency, and response length. While this figure appears small in isolation, its magnitude becomes clearer when multiplied by the number of daily users and interactions.

Everyday Analogy 1: AI Prompt vs. Boiling Water

A useful comparison is the energy required to boil a cup of water using an electric kettle. On average, boiling 250 milliliters of water takes approximately 0.05 kWh. In contrast, generating a response from an advanced language model may use around 0.005 kWh, which equates to 10% of the energy needed to boil that cup of water. This seemingly minor consumption takes on greater significance when scaled to millions of queries per day.

For instance, if ChatGPT processes 100 million queries daily, and each consumes 0.005 kWh, the total energy usage amounts to 500,000 kWh per day. This is equivalent to the energy needed to boil 10 million cups of water every single day—an extraordinary figure for a service that many perceive as nearly instantaneous and costless.
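The arithmetic behind this comparison can be checked with a short sketch. All three constants are the figures quoted in this section; the function names are hypothetical.

```python
KWH_PER_QUERY = 0.005          # per-response estimate used above
KWH_PER_CUP = 0.05             # kettle figure used above for 250 ml
QUERIES_PER_DAY = 100_000_000  # daily query estimate used above


def daily_energy_kwh(queries: int, kwh_per_query: float) -> float:
    """Total daily energy for a given query volume."""
    return queries * kwh_per_query


def cups_boiled(total_kwh: float, kwh_per_cup: float = KWH_PER_CUP) -> float:
    """Number of cups of water the same energy could boil."""
    return total_kwh / kwh_per_cup


total_kwh = daily_energy_kwh(QUERIES_PER_DAY, KWH_PER_QUERY)
print(f"{total_kwh:,.0f} kWh per day, or about {cups_boiled(total_kwh):,.0f} cups")
```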

Everyday Analogy 2: Training a Model vs. Cross-Continental Flights

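Training a large AI model such as GPT-3 or GPT-4 is many orders of magnitude more energy-intensive than an individual inference task. As mentioned earlier, training GPT-3 is estimated to have used approximately 1,287 megawatt-hours of electricity. As a rough point of comparison, take a figure of approximately 2.5 megawatt-hours of fuel energy for a commercial Boeing 737-800 on a transatlantic flight from New York to London. This should be read as a conservative, illustrative value, since the actual fuel energy of such a flight is likely considerably higher.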

On that basis, training GPT-3 used roughly the same amount of energy as 515 transatlantic flights (1,287 ÷ 2.5 ≈ 515). Another way to frame this comparison is that the training of a single large language model could, in energy terms, power one commercial aircraft flying across the Atlantic every day for about 17 months.

These comparisons illuminate the scale of investment—both financial and environmental—required to bring advanced AI systems to life. The perception that artificial intelligence operates in a purely digital realm often obscures the physical realities of massive computational power, server farms, and energy infrastructure operating behind the scenes.

Everyday Analogy 3: Driving a Car vs. Using AI

Another relatable way to contextualize AI energy consumption is through transportation. According to the U.S. Environmental Protection Agency (EPA), the average gas-powered vehicle emits approximately 404 grams of carbon dioxide per mile driven. Given that producing 1 kWh of electricity in the U.S. emits, on average, about 0.4 kg (or 400 grams) of CO₂, using 1 kWh to run AI tasks is environmentally comparable to driving a gasoline car for one mile.

Therefore, if generating a response from an AI assistant consumes 0.005 kWh of electricity, it equates to driving roughly 0.005 miles, or about 8 meters, in a conventional car. While that might seem trivial on an individual basis, this energy consumption quickly accumulates at the macro level. Just 1 million queries to an AI system would consume 5,000 kWh, which is equivalent to driving about 5,000 miles (roughly 8,000 kilometers) collectively.
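The conversion can be worked directly from the two emission factors quoted above. The sketch below is a simplification that treats grid electricity and tailpipe emissions as directly comparable; the constant and function names are illustrative.

```python
GRID_G_CO2_PER_KWH = 400.0   # U.S. average quoted above (0.4 kg per kWh)
CAR_G_CO2_PER_MILE = 404.0   # EPA per-mile figure quoted above
METERS_PER_MILE = 1609.344


def driving_equivalent_miles(energy_kwh: float) -> float:
    """Miles of gasoline driving that emit the same CO2 as the given electricity use."""
    return energy_kwh * GRID_G_CO2_PER_KWH / CAR_G_CO2_PER_MILE


per_query_meters = driving_equivalent_miles(0.005) * METERS_PER_MILE
million_query_miles = driving_equivalent_miles(0.005 * 1_000_000)

print(f"One query: about {per_query_meters:.1f} m of driving")
print(f"One million queries: about {million_query_miles:,.0f} miles")
```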

Such analogies are crucial in making visible the environmental cost of digital actions that may otherwise seem negligible.

Regional and Household Contexts

It is also informative to relate AI energy use to average household consumption in different countries. For example, the average American home uses about 10,632 kWh annually, whereas the average home in the United Kingdom uses approximately 3,600 kWh. Using these benchmarks, training GPT-3 consumed the equivalent of what 121 U.S. homes or 357 U.K. homes use in an entire year.

From a regional perspective, the energy grid’s carbon intensity also matters. An AI system running on renewable energy in Norway or Iceland has a significantly smaller environmental impact than the same system running on coal-powered electricity in parts of India or China. This adds a layer of complexity to any universal claims about AI’s carbon footprint, as energy source and geographic location play pivotal roles.

Energy Use of AI vs. Other Digital Services

To understand AI’s place in the broader digital ecosystem, consider that streaming one hour of high-definition video on Netflix consumes approximately 0.3 kWh. At 0.005 to 0.01 kWh per response, generating 30 to 60 AI responses consumes a similar amount of energy. This means that a chatbot session of a few dozen interactions might be energetically equivalent to watching an episode of a TV show.
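Because the per-response estimate spans a wide range, the equivalence shifts a lot depending on which value is used. This short sketch uses the 0.3 kWh streaming figure above and three per-response values from the range quoted earlier in this post; the function name is hypothetical.

```python
STREAMING_KWH_PER_HOUR = 0.3  # HD streaming figure quoted above


def responses_per_streaming_hour(kwh_per_response: float) -> float:
    """How many AI responses use the same energy as one hour of HD streaming."""
    if kwh_per_response <= 0:
        raise ValueError("per-response energy must be positive")
    return STREAMING_KWH_PER_HOUR / kwh_per_response


# Low, middle, and high per-response estimates from the range quoted earlier.
for kwh in (0.0005, 0.005, 0.01):
    count = responses_per_streaming_hour(kwh)
    print(f"{kwh} kWh per response -> about {count:.0f} responses per streaming hour")
```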

These comparisons are particularly relevant for enterprises and institutions deploying AI services at scale. A corporation serving millions of AI-driven interactions per day may, over time, rival the energy footprint of video streaming platforms or online gaming networks.

Human vs. Machine Thinking: An Energy Paradox

Interestingly, the energy cost of human cognition is remarkably low by comparison. The human brain uses approximately 20 watts of power—equivalent to a light bulb—and yet it can perform complex tasks, learn from experience, and generate creative ideas. AI systems, by contrast, require thousands of watts just to perform relatively narrow tasks. While machines may excel in speed and scale, they do so at a dramatically higher energy cost.

This discrepancy underscores the importance of considering not only performance and scalability in AI development but also energy efficiency and sustainability.

Bringing It All Together

The purpose of these analogies is not to discourage the use of AI, but rather to cultivate awareness about its hidden energy costs. Understanding that an AI response might carry about a tenth of the energy cost of boiling a cup of water, or that training a model can, by the estimate above, rival the energy of hundreds of transatlantic flights, is vital to promoting responsible usage patterns and driving innovation toward more energy-efficient AI systems.

As public discourse around climate change and digital sustainability intensifies, these comparisons can empower consumers, developers, and policymakers to make more informed decisions. For enterprises, this may mean investing in energy-efficient infrastructure or selecting cloud providers that prioritize renewable energy. For individuals, it may encourage more mindful usage of AI tools, particularly when the marginal utility of an interaction is low.

The Scale Problem – AI vs. Global Energy Use

As artificial intelligence becomes increasingly embedded in consumer, enterprise, and governmental operations, its energy consumption is scaling at a pace that raises concerns about long-term sustainability. While isolated AI tasks may seem negligible in terms of electricity usage, the aggregate effect of millions—or even billions—of such operations per day is substantial. Understanding the systemic impact of AI energy consumption requires examining not just per-task metrics, but also the broader implications at the global scale and in comparison with other energy-intensive industries.

The Exponential Growth of AI Workloads

The proliferation of AI-driven services across sectors such as healthcare, finance, education, retail, and manufacturing has led to a dramatic increase in the volume and frequency of AI workloads. According to recent estimates from industry analysts, AI workloads in data centers could consume up to 4% of global electricity by 2030 if current growth trends continue. This projection is driven largely by two factors:

- Model Complexity: The number of parameters in cutting-edge AI models continues to grow exponentially. For example, GPT-2 had 1.5 billion parameters, GPT-3 reached 175 billion, and estimates for GPT-4 and beyond exceed 500 billion.

- Inference Volume: As AI services scale to serve billions of users, the cumulative energy required for inference grows in a near-linear fashion, with usage patterns driving energy consumption around the clock.

Large technology companies are deploying thousands of AI chips across geographically distributed data centers to meet demand. These facilities not only require significant power for computation but also for ancillary systems such as networking, redundancy, and cooling.

Global Energy Demand and AI’s Emerging Share

To contextualize AI’s potential share of global electricity use, it is helpful to consider current global consumption. As of 2024, the world consumes approximately 28,000 terawatt-hours (TWh) of electricity annually. Data centers alone are estimated to consume about 3% of global electricity, or roughly 840 TWh per year. Within that category, AI-specific workloads are currently responsible for an estimated 1.5% to 2%—a figure that is growing rapidly with the widespread adoption of generative AI models.

Research from the International Energy Agency (IEA) suggests that without improvements in efficiency and sustainable infrastructure, AI workloads could surpass 1,000 TWh annually by 2030. For comparison, that is roughly the total annual electricity consumption of Japan, one of the world’s largest economies.

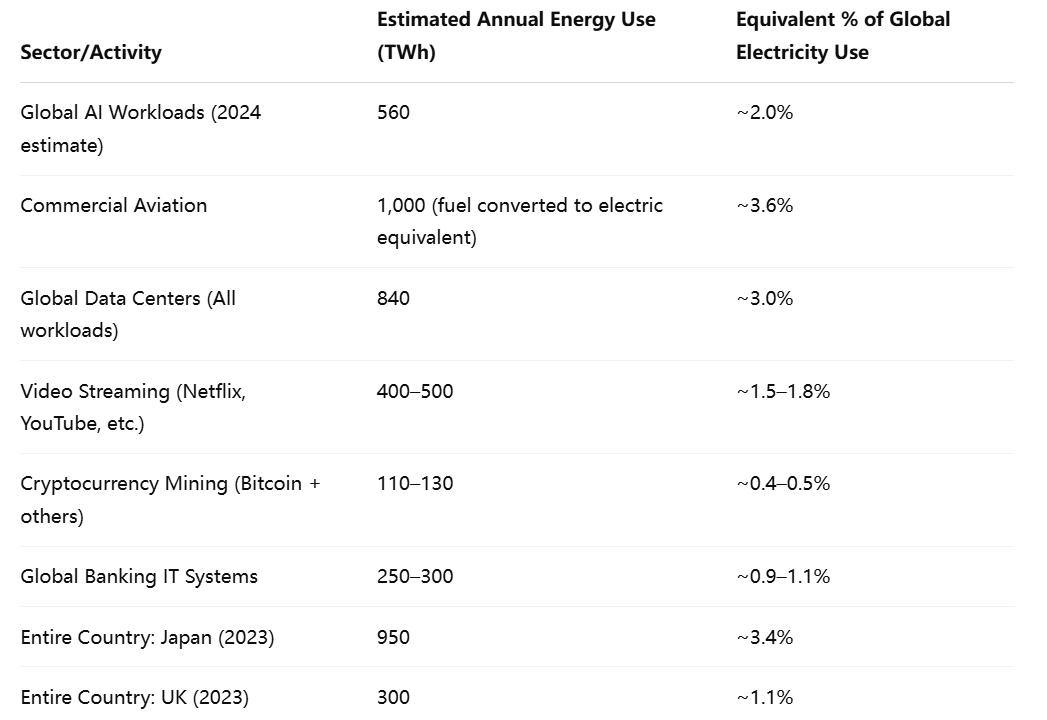

Comparing Energy Use Across Sectors

To provide a clearer sense of proportion, the following table compares the estimated annual electricity consumption of AI-related workloads with other prominent industries:

This table reveals that AI already consumes more energy than entire national infrastructures (e.g., the UK) and could soon rival sectors traditionally seen as energy-intensive, such as aviation and video streaming. This trajectory makes AI a central topic in discussions of global energy strategy and sustainability.

Infrastructure Strain and Environmental Costs

The concentration of AI infrastructure in certain regions—such as Northern Virginia in the United States, parts of Ireland, and Singapore—also raises questions about regional energy strain. In some areas, hyperscale data centers account for 10–20% of local electricity demand, straining power grids and complicating renewable integration strategies.

Moreover, the environmental cost of powering these data centers depends heavily on the local energy mix. In regions reliant on coal or natural gas, the carbon intensity of AI operations is significantly higher than in regions powered by hydro, wind, or solar energy. This variability means the global carbon footprint of AI is not only growing, but also unevenly distributed, potentially exacerbating inequalities in energy burden and environmental degradation.

The Inference Bottleneck

While the training phase of AI models captures headlines due to its upfront energy demands, the inference phase represents the most persistent and scaling challenge. Once a model is deployed, it continues to consume energy as users interact with it—often in unpredictable or bursty patterns.

For example, a large model might require 0.01 kWh per prompt, which seems trivial. But at a scale of 1 billion daily prompts, this equates to 10 million kWh per day, or 3.65 TWh annually—comparable to the electricity consumption of a small country like Luxembourg. As more businesses embed AI features into products and services, and as users grow accustomed to real-time AI interaction, the inference load is poised to increase significantly.
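The scaling in this example is easy to reproduce. The sketch below uses the per-prompt and volume figures from the paragraph above, plus the 28,000 TWh global consumption figure cited earlier; the function name is an illustrative choice.

```python
KWH_PER_TWH = 1_000_000_000   # 1 TWh = 10^9 kWh
GLOBAL_TWH_PER_YEAR = 28_000  # global electricity figure cited earlier


def annual_inference_twh(kwh_per_prompt: float, prompts_per_day: int, days: int = 365) -> float:
    """Annual inference energy in TWh for a given per-prompt cost and daily volume."""
    return kwh_per_prompt * prompts_per_day * days / KWH_PER_TWH


annual_twh = annual_inference_twh(0.01, 1_000_000_000)
share = annual_twh / GLOBAL_TWH_PER_YEAR

print(f"{annual_twh:.2f} TWh per year, about {share:.3%} of global electricity")
```

Halving the per-prompt energy halves the annual total, which is why the efficiency measures discussed later matter so much at this scale.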

This “inference bottleneck” is compounded by the fact that maintaining high availability and low latency often necessitates running AI infrastructure continuously, even during periods of low demand.

A Tipping Point in Policy and Awareness

The convergence of AI growth with mounting climate concerns has prompted governments and regulatory bodies to begin scrutinizing the energy profile of digital infrastructure more closely. In the European Union, recent sustainability frameworks have called for greater transparency from data centers regarding energy use and emissions. In the United States, the Department of Energy has initiated research into AI optimization for energy efficiency in national labs and public sector AI use.

Industry players are also beginning to self-regulate by publishing sustainability reports and experimenting with novel methods of energy sourcing and cooling—such as underwater data centers, direct air capture offsets, and AI-assisted power management.

Nonetheless, a consistent and standardized methodology for measuring AI energy impact remains elusive, making it difficult to assess progress across companies or geographies.

Who Bears the Burden? The Energy Behind Your Prompt

Although the end-user experience of artificial intelligence systems often appears seamless, instantaneous, and virtually cost-free, the reality behind the scenes is far more complex. Each AI-generated response is the result of a multi-layered infrastructure, spanning thousands of miles and consuming a significant volume of resources—both digital and physical. The burden of powering these systems is distributed unevenly across cloud providers, geographic regions, and infrastructure operators, raising important questions about responsibility, transparency, and sustainability.

Cloud Providers: The Engines of AI

The overwhelming majority of AI workloads today are hosted and operated by a handful of hyperscale cloud providers. Companies such as Microsoft (Azure), Amazon (AWS), Google (Cloud), and Meta invest billions of dollars annually in constructing and operating data centers optimized for artificial intelligence. OpenAI, Anthropic, and other leading AI research firms typically rely on these platforms to scale their models globally.

For example, Microsoft has entered into a multi-billion-dollar partnership with OpenAI, deploying tens of thousands of GPUs to power models such as GPT-4 across its Azure infrastructure. Similarly, Google has tailored its cloud platform with custom-designed Tensor Processing Units (TPUs) to accelerate training and inference workloads.

These providers incur not only the direct energy costs of computation but also the operational overhead associated with maintaining servers, managing heat dissipation, and ensuring network uptime. Data centers must adhere to stringent standards for latency, redundancy, and fault tolerance, often leading to energy use that far exceeds raw compute requirements alone.

The scale of these operations is vast. A single hyperscale data center can draw between 20 and 50 megawatts of power, enough to supply tens of thousands of homes. As AI adoption intensifies, cloud providers are under increasing scrutiny to manage the environmental implications of their growing footprint.
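A quick sanity check on the household comparison, as a sketch: it assumes the facility draws its rated power continuously all year and uses the average U.S. household figure of 10,632 kWh per year cited earlier. Both are simplifying assumptions.

```python
HOURS_PER_YEAR = 8760
US_HOME_KWH_PER_YEAR = 10_632  # average U.S. household figure cited earlier


def homes_supplied(facility_mw: float, home_kwh_per_year: float = US_HOME_KWH_PER_YEAR) -> float:
    """Households whose annual use matches a facility running at constant load all year."""
    facility_kwh_per_year = facility_mw * 1000.0 * HOURS_PER_YEAR
    return facility_kwh_per_year / home_kwh_per_year


for mw in (20, 50):
    print(f"{mw} MW is roughly the annual use of {homes_supplied(mw):,.0f} U.S. homes")
```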

The Geographic Disparity in Energy Burden

The location of AI infrastructure significantly influences its environmental impact and energy efficiency. Regions with cooler climates and abundant renewable energy—such as Scandinavia, parts of Canada, and the Pacific Northwest in the United States—are favored for AI data center deployment due to their ability to minimize cooling costs and leverage clean electricity.

Conversely, in regions where electricity is generated primarily from fossil fuels, the carbon intensity of AI operations is much higher. For instance, running an AI model in Poland, where coal remains a major energy source, can emit many times more CO₂ per kilowatt-hour than running the same model in Norway, where hydroelectric power predominates.

This disparity underscores a hidden inequity: while AI services are consumed globally, the environmental costs are often localized to regions hosting the computational infrastructure. In some countries, data centers have sparked controversy due to their disproportionate strain on local power grids, water supplies for cooling, and land usage.

The Cost of Free: User Perception vs. Provider Burden

From a user’s perspective, interacting with an AI assistant, content generator, or recommendation engine typically appears to be "free"—requiring only a device and an internet connection. However, this perception belies the actual cost structure that underpins the delivery of each AI response.

Every prompt submitted to a large language model triggers a cascade of processes: routing through network switches, activation of GPU clusters, memory allocation, and power draw from redundant systems. These operations cumulatively incur costs in electricity, hardware depreciation, cooling, and maintenance.

Estimates suggest that generating a single complex AI response could cost a cloud provider between $0.002 and $0.01 in electricity alone, depending on the model and infrastructure. At scale, this translates into millions of dollars per month in operational expenses, particularly for widely used models like GPT-4 or Claude. When infrastructure is over-provisioned to handle peak demand, much of the power drawn is expended maintaining idle readiness—contributing further to systemic inefficiencies.

The result is a financial and environmental burden that is obscured from the end user but keenly felt by infrastructure operators. As more consumer services integrate AI capabilities—email drafting, image generation, translation—the hidden cost of what appears to be "free" functionality becomes a significant sustainability challenge.

Carbon Offsets and the Green Cloud Narrative

In response to mounting concerns, major cloud providers have embraced sustainability pledges, often committing to carbon neutrality or even carbon negativity. Microsoft, for instance, aims to be carbon negative by 2030, while Google claims its data centers are already carbon neutral through the use of renewable energy and offsets.

However, the effectiveness of these measures is contested. Carbon offsets, especially those involving tree planting or future carbon capture, are criticized for their delayed and often unverifiable impact. Moreover, purchasing renewable energy certificates (RECs) does not guarantee that clean energy is used at the time and place where it is needed, especially when AI workloads operate on demand and across time zones.

Another complexity lies in the lifecycle carbon footprint of AI hardware. Manufacturing high-performance GPUs, TPUs, and networking components requires the mining and processing of rare earth metals, which are associated with significant upstream emissions. These embodied emissions are rarely accounted for in providers' sustainability reports but are an integral part of AI’s total environmental cost.

The Role of Regulation and Transparency

As awareness of AI’s environmental footprint grows, policymakers and regulators are beginning to demand more transparent reporting and accountability. The European Union’s Data Act and upcoming AI Act both include provisions that could require providers to disclose energy usage, carbon intensity, and environmental impact metrics for high-risk AI systems.

Some jurisdictions, including Ireland and Singapore, are exploring energy quotas and sustainability certifications as prerequisites for approving new data center construction. These measures could influence where and how AI services are deployed in the coming years.

Transparency is also emerging as a market differentiator. Providers that can credibly demonstrate lower carbon intensity or offer “green AI zones” may attract enterprise clients with environmental, social, and governance (ESG) mandates. In the long term, sustainability metrics could become as central to AI procurement decisions as performance benchmarks or pricing.

Solutions and Innovations to Curb AI’s Energy Appetite

As artificial intelligence continues to evolve into a foundational technology across industries, the pressing issue of its energy consumption has garnered significant attention from researchers, technologists, and policymakers alike. While the magnitude of AI’s energy demands is a cause for concern, it has also spurred a wave of innovation aimed at reducing its environmental footprint. These solutions range from advances in hardware and algorithm design to intelligent workload management and novel system architectures. This section explores key developments and emerging practices that are helping to mitigate the energy cost of AI systems while preserving their utility and scalability.

Advancements in AI Hardware Efficiency

One of the most direct ways to reduce the energy consumption of AI systems is by improving the efficiency of the hardware on which they operate. Traditional general-purpose processors, such as central processing units (CPUs), are not optimized for the parallel processing demands of machine learning workloads. This inefficiency has led to the widespread adoption of specialized accelerators, including graphics processing units (GPUs), tensor processing units (TPUs), and dedicated AI chips.

Recent iterations of these technologies—such as NVIDIA’s H100 GPU, Google’s TPU v5, and Intel’s Gaudi 3 AI processor—offer substantial improvements in energy efficiency. These chips are designed to execute more operations per watt, thereby reducing the total power required to train and serve models. Additionally, many of these processors incorporate features such as dynamic voltage scaling and thermal management, which adapt power consumption based on workload intensity.

Moreover, hardware vendors are increasingly focusing on system-level energy optimization. This includes integrating memory hierarchies more tightly with compute cores, minimizing data movement (a major contributor to energy use), and designing interconnects that facilitate high-throughput, low-latency communication across processors.

Algorithmic Optimization and Model Compression

Beyond hardware, there is considerable potential for energy savings through algorithmic innovation. Researchers have developed a variety of techniques to reduce the computational complexity of AI models without significantly compromising accuracy. These include:

- Quantization: Reducing the precision of numerical representations (e.g., from 32-bit floating point to 8-bit integers) to lower memory and compute requirements.

- Pruning: Eliminating redundant or non-contributory weights from neural networks, effectively reducing the model’s size and energy usage.

- Distillation: Creating smaller “student” models that approximate the performance of larger “teacher” models, enabling faster and more efficient inference.
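The first two techniques in the list above can be illustrated with a minimal, pure-Python sketch. It assumes symmetric per-tensor quantization and unstructured magnitude pruning, which are only two of several possible schemes; the function names and the toy weight values are hypothetical and chosen purely for illustration.

```python
def quantize_int8(weights):
    """Symmetric per-tensor quantization of floats to integer codes in [-127, 127]."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    codes = [max(-127, min(127, round(w / scale))) for w in weights]
    return codes, scale


def dequantize(codes, scale):
    """Map integer codes back to approximate float values."""
    return [c * scale for c in codes]


def prune_by_magnitude(weights, sparsity):
    """Zero out the smallest-magnitude fraction of weights (unstructured pruning)."""
    k = int(len(weights) * sparsity)  # number of weights to drop
    if k == 0:
        return list(weights)
    # Indices of the k weights with the smallest absolute value
    drop = set(sorted(range(len(weights)), key=lambda i: abs(weights[i]))[:k])
    return [0.0 if i in drop else w for i, w in enumerate(weights)]


# Hypothetical toy weights, not taken from any real model
weights = [0.82, -0.05, 0.31, -1.27, 0.002, 0.64, -0.11, 0.09]

codes, scale = quantize_int8(weights)
restored = dequantize(codes, scale)
max_error = max(abs(a - b) for a, b in zip(weights, restored))

pruned = prune_by_magnitude(weights, sparsity=0.5)
```

Each 8-bit code occupies one byte instead of the four bytes of a 32-bit float, at the cost of a rounding error of at most half the scale per weight. Pruning at 50% sparsity zeroes the four smallest weights here; whether that translates into real energy savings depends on hardware and kernels that can skip the zeros.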

Such methods allow developers to deploy models that are more environmentally sustainable while still meeting performance requirements. For example, Meta’s LLaMA 2 models are released in several sizes, and quantized variants of them are widely used to run inference on smaller, lower-power hardware.

In addition to compression, researchers are exploring sparsity-aware training algorithms that leverage the fact that many neural network weights and activations are zero or near-zero. Exploiting this sparsity during training and inference can lead to significant energy and memory savings.

Architectural Innovations: Mixture of Experts and Beyond

Emerging AI model architectures also offer pathways to improved energy efficiency. One promising approach is the Mixture of Experts (MoE) model, which activates only a small subset of its parameters for each input. Unlike conventional dense models, which use every parameter for every token, MoE architectures use a lightweight router to send each token to a small number of specialized sub-networks, called experts.

This selective activation significantly reduces the computation required per inference relative to a dense model with the same total parameter count, thereby lowering energy consumption. For instance, Google’s Switch Transformer routes each token to just one expert in each MoE layer, so in a 64-expert configuration only one of the 64 experts processes a given token at that layer, offering efficiency gains without sacrificing accuracy on key tasks.
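The routing idea can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the Switch Transformer implementation: the experts are simple hypothetical functions, the router is a single linear layer followed by a softmax, and only the highest-scoring expert runs for each token.

```python
import math


def softmax(logits):
    """Numerically stable softmax over a list of scores."""
    m = max(logits)
    exps = [math.exp(x - m) for x in logits]
    total = sum(exps)
    return [e / total for e in exps]


def route_top1(token, router_weights):
    """Return (expert_index, gate_probability) for one token."""
    logits = [sum(w * x for w, x in zip(row, token)) for row in router_weights]
    probs = softmax(logits)
    best = max(range(len(probs)), key=probs.__getitem__)
    return best, probs[best]


def moe_layer(token, router_weights, experts):
    """Run only the selected expert and scale its output by the gate value."""
    idx, gate = route_top1(token, router_weights)
    output = experts[idx](token)  # the other experts are never evaluated
    return idx, [gate * y for y in output]


# Hypothetical toy setup: 4 experts operating on 2-dimensional tokens
experts = [
    lambda x: [2.0 * v for v in x],
    lambda x: [v + 1.0 for v in x],
    lambda x: [-v for v in x],
    lambda x: [v * v for v in x],
]
router_weights = [[1.0, 0.0], [0.0, 1.0], [-1.0, 0.0], [0.0, -1.0]]

chosen, out = moe_layer([0.5, 3.0], router_weights, experts)
```

With four experts and top-1 routing, only a quarter of the expert computation runs per token. All experts must still be held in memory, so the savings are in compute rather than in model size.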

Other architectural advances, such as retrieval-augmented generation (RAG), combine traditional information retrieval methods with generative AI. By retrieving relevant content from external sources and using it to condition the model’s output, a RAG system does not need to store all of its knowledge in model parameters, which can allow a smaller model to answer questions that would otherwise call for a much larger one. The retrieved passages do lengthen the prompt, so the net energy benefit depends on how much smaller the model can be.

Smarter Workload Scheduling and Resource Allocation

Another promising frontier in energy-efficient AI lies in workload management. Instead of operating AI infrastructure at constant full capacity, providers are increasingly using intelligent scheduling systems to align AI workloads with periods of lower grid carbon intensity or higher renewable energy availability.

For instance, Google’s data centers utilize carbon-aware computing to shift non-urgent workloads, such as model training, to times and locations where clean energy is more abundant. By integrating grid carbon intensity forecasts into workload dispatching decisions, such systems can reduce the overall emissions associated with AI computation.
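A simplified version of this dispatching decision can be sketched as follows. The sketch assumes an hourly carbon intensity forecast is already available and that the job must run in one contiguous block; the function name and the forecast values are hypothetical, and production systems weigh many more constraints, such as deadlines, capacity, and location.

```python
def best_start_hour(forecast, duration_hours):
    """Return (start_index, average_intensity) of the cleanest contiguous window."""
    if duration_hours <= 0 or duration_hours > len(forecast):
        raise ValueError("duration must fit inside the forecast horizon")
    window_sum = sum(forecast[:duration_hours])
    best_start, best_sum = 0, window_sum
    for start in range(1, len(forecast) - duration_hours + 1):
        # Slide the window one hour forward: add the new hour, drop the oldest
        window_sum += forecast[start + duration_hours - 1] - forecast[start - 1]
        if window_sum < best_sum:
            best_start, best_sum = start, window_sum
    return best_start, best_sum / duration_hours


# Hypothetical hourly grid carbon intensity forecast, in gCO2 per kWh
forecast = [420, 410, 390, 300, 210, 180, 190, 260, 350, 430, 450, 440]

start, avg = best_start_hour(forecast, duration_hours=3)
run_now_avg = sum(forecast[:3]) / 3
saving = 1 - avg / run_now_avg
```

In this made-up forecast, delaying a three-hour job by four hours lowers the average carbon intensity it runs under by roughly half. The electricity consumed is unchanged; only the emissions associated with it fall.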

In parallel, workload orchestration tools such as Kubernetes and Apache Airflow are being adapted for AI use cases, enabling dynamic scaling, energy-aware job placement, and resource pooling across clusters. These tools are vital for optimizing compute utilization and avoiding unnecessary over-provisioning, which wastes both electricity and hardware potential.

Data-Efficient AI: Doing More with Less

Reducing the energy consumption of AI also requires rethinking data strategies. Training massive models on internet-scale datasets involves substantial storage and processing costs. However, recent research has demonstrated that data quality can often outweigh quantity in determining model performance.

By curating cleaner, more diverse, and more representative datasets, researchers can train models that converge faster and generalize better—thereby reducing training time and associated energy use. Techniques such as curriculum learning, active learning, and synthetic data generation further contribute to this goal by improving data efficiency.

This shift toward data-efficient AI reflects a broader philosophical transition in machine learning: from brute-force scale to intelligent design.

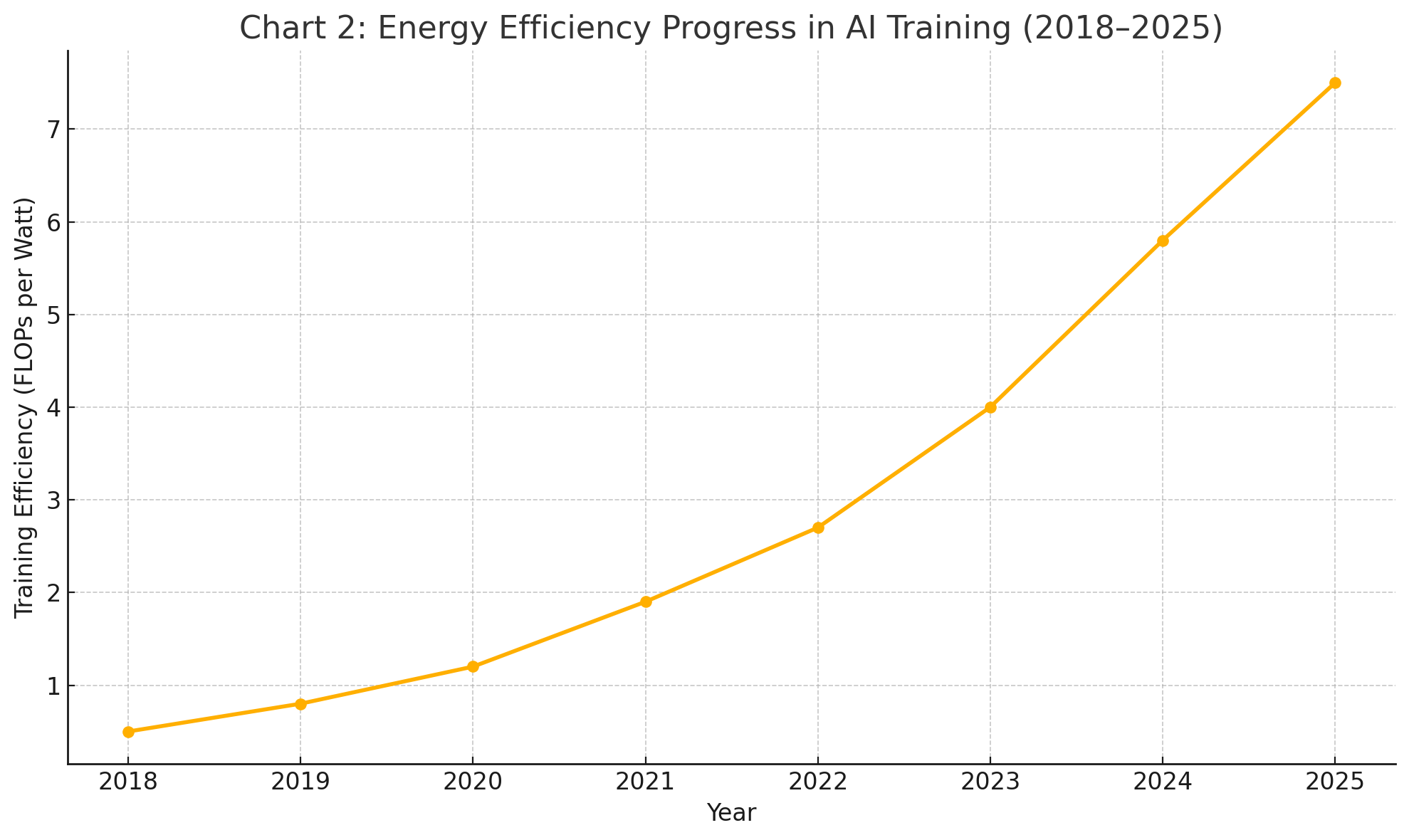

Chart: Energy Efficiency Trends in AI Training (2018–2025)

To illustrate the cumulative effect of hardware and software innovation, consider the following visualization:

This trend, while encouraging, must be interpreted within the context of expanding model sizes and increasing demand. Even as efficiency improves, total energy consumption can still rise if deployment scales faster than efficiency gains, a pattern known as the "rebound effect" or Jevons paradox.
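The arithmetic behind this effect is simple enough to check directly. The figures below are hypothetical and serve only to show how a per-query efficiency gain can be outpaced by growth in usage.

```python
def total_energy_kwh(energy_per_query_wh, queries_per_day, days=365):
    """Annual energy in kWh for a service with a fixed per-query cost."""
    return energy_per_query_wh * queries_per_day * days / 1000


# Hypothetical scenario: each query becomes 3x more efficient,
# but daily usage grows 5x over the same period.
before = total_energy_kwh(energy_per_query_wh=3.0, queries_per_day=1_000_000)
after = total_energy_kwh(energy_per_query_wh=1.0, queries_per_day=5_000_000)

growth = after / before
```

Under these assumptions, annual consumption rises from about 1.1 million kWh to about 1.8 million kWh, an increase of roughly two thirds despite the threefold efficiency gain.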

Towards Responsible AI Energy Use

The rapid ascent of artificial intelligence has ushered in a new era of technological progress, with profound implications for virtually every sector of the global economy. From generative models that compose prose and code to decision-support systems that drive automation in finance, medicine, and logistics, AI is no longer a speculative frontier—it is an operational reality. However, this proliferation comes with a significant, and often underestimated, energy cost.

As this analysis has shown, AI systems demand vast amounts of electricity during both training and inference. While the training of large models like GPT-4 generates sharp spikes in energy use, it is the persistent, distributed burden of inference—repeated billions of times per day across consumer and enterprise applications—that now represents the most substantial and growing component of AI’s energy profile. When aggregated globally, this consumption rivals and, in some instances, surpasses the energy use of entire countries or industrial sectors.

Moreover, the perceived “invisibility” of AI’s energy impact contributes to a gap in public awareness. End users rarely consider that a simple AI-generated sentence might carry the same energy weight as boiling water or driving a short distance in a conventional car. Similarly, businesses integrating AI into customer-facing applications may not fully appreciate the downstream effects of exponential demand on cloud infrastructure, regional power grids, and global carbon emissions.

Encouragingly, the industry is not without agency. Innovations in specialized hardware, model compression, intelligent workload scheduling, and data efficiency offer clear pathways to reduce AI’s energy intensity. The emergence of architectures such as mixture of experts, along with carbon-aware computing frameworks, demonstrates that sustainability and performance need not be mutually exclusive. However, the effectiveness of these measures will depend on widespread adoption, transparent reporting, and continuous investment in both technological and policy innovation.

Looking ahead, a multi-stakeholder approach will be critical. Cloud providers must prioritize renewable integration and lifecycle carbon reporting. Governments should enact regulatory frameworks that incentivize sustainable AI deployment while penalizing environmental externalities. Researchers must continue to pursue efficiency as a core design principle rather than a secondary optimization. And individuals—whether as developers, executives, or everyday users—must cultivate a more conscious relationship with the digital tools they rely upon.

Artificial intelligence holds immense promise, but realizing that promise responsibly requires acknowledging and addressing its hidden energy costs. Only by demystifying these impacts and translating them into everyday terms can we foster a digital ecosystem that is not only intelligent but also environmentally sustainable and socially equitable.

References

- OpenAI Blog – GPT Model Information
  https://openai.com/blog/chatgpt

- International Energy Agency – Data Centres and Energy
  https://www.iea.org/reports/data-centres-and-data-transmission-networks

- Microsoft Sustainability Commitments
  https://www.microsoft.com/sustainability

- Google Cloud – Carbon-Aware Computing
  https://cloud.google.com/blog/products/infrastructure/inside-look-at-googles-carbon-aware-data-centers

- Meta AI – LLaMA Model Release
  https://ai.meta.com/llama/

- NVIDIA – H100 Tensor Core GPU Overview
  https://www.nvidia.com/en-us/data-center/h100/

- Google Cloud – TPU v5e Launch
  https://cloud.google.com/blog/products/ai-machine-learning/introducing-cloud-tpu-v5e

- Anthropic – Claude AI Overview
  https://www.anthropic.com/index/claude

- Amazon AWS – Sustainable Data Centers
  https://sustainability.aboutamazon.com/operations/data-centers

- Hugging Face – Efficient Model Training and Deployment
  https://huggingface.co/blog/efficient-training