Amazon Nova AI Models: Redefining Enterprise Intelligence with Text, Image, and Multimodal Generative AI

Artificial intelligence (AI) has evolved rapidly in recent years, driven by the development and deployment of large-scale foundation models capable of reasoning, generating content, understanding images, and interpreting multimodal data streams. While industry pioneers such as OpenAI, Google DeepMind, Anthropic, and Meta have made significant strides in scaling these capabilities, Amazon’s Nova series, which follows its earlier Titan models, marks a pivotal moment in Amazon’s own foundation model strategy. With Nova, Amazon signals its intent to compete not just as a cloud infrastructure provider but as a frontrunner in the race to define the future of general-purpose, multimodal intelligence.

Amazon’s Nova models represent a strategic and technical leap that aligns with the company’s broader ambition of embedding intelligent systems deeply across its cloud, retail, and consumer-facing ecosystems. Built to work across text, images, video, and audio, the Nova models are designed with scalability, enterprise readiness, and developer flexibility in mind. Unlike Amazon’s earlier Titan family, which focused primarily on text generation and embedding use cases, Nova embraces the complexity of multimodal understanding. This positions it not only as a generative engine for enterprise applications but also as a foundational layer for the next wave of intelligent systems.

The emergence of Nova AI coincides with a broader industry shift toward multimodal models, which integrate information across various data types to perform more holistic reasoning and interaction. While traditional large language models (LLMs) excel at parsing and generating text, they fall short in tasks that require understanding and synthesis across formats—for example, analyzing a PDF document with embedded charts, generating product descriptions from images, or interpreting a video alongside a corresponding transcript. Nova addresses this gap by enabling unified processing across multiple content types, allowing developers and businesses to build experiences that are significantly richer, more contextual, and more intelligent.

In addition to its multimodal capabilities, Amazon Nova stands out for its architectural innovations, high-performance inference, and seamless integration with the AWS ecosystem. This tight integration provides a competitive edge: enterprises already entrenched in AWS can immediately leverage Nova’s capabilities through managed services like Amazon Bedrock and SageMaker. Furthermore, the availability of fine-tuning, RAG (retrieval-augmented generation), and model monitoring within AWS cloud environments positions Nova as a turnkey solution for businesses seeking both power and control.

At the heart of Nova’s design is an emphasis on flexibility and modularity. The models are optimized for both general-purpose tasks and domain-specific applications, offering customization options to enterprises with highly specialized needs. Amazon has also emphasized low-latency performance, making Nova suitable for real-time inference scenarios such as e-commerce search refinement, voice assistant augmentation, and fraud detection in financial transactions.

Perhaps most importantly, the Nova models have been introduced at a time when enterprises are grappling with increasing demands for data security, compliance, and operational scalability. With rising scrutiny around the ethical and regulatory implications of AI—especially in sectors like healthcare, finance, and public services—Amazon’s cloud-first, governance-conscious approach offers reassurance to risk-sensitive industries. The availability of features such as audit logging, encrypted data handling, and customizable safety guardrails reflects Amazon’s understanding of the challenges enterprises face when deploying AI at scale.

Beyond the enterprise use case, Nova also represents Amazon’s vision for AI across consumer and device ecosystems. Analysts speculate that future iterations of the Nova model family will integrate directly with Alexa-powered devices, Prime Video content pipelines, and even smart logistics platforms that underpin Amazon’s supply chain. In this way, Nova could serve as a general intelligence engine across the company’s vast range of touchpoints—from cloud services and streaming content to e-commerce personalization and IoT infrastructure.

The purpose of this article is to provide a comprehensive analysis of the Nova AI model suite, with a particular focus on its multimodal architecture, real-world use cases, integration pathways, and strategic implications. We will also compare Nova’s capabilities with those of other leading models on the market, assess its suitability for various industry verticals, and explore Amazon’s broader ambitions in the generative AI ecosystem.

To that end, this blog post will be structured into the following sections:

- A deep technical overview of Nova’s architecture and how it enables advanced multimodal processing;

- Exploration of practical enterprise use cases, including text generation, image recognition, and complex cross-modal interactions;

- A look at Nova’s integration across the AWS ecosystem, including service compatibility and developer tools;

- Discussion of deployment challenges and governance considerations;

- And finally, a forward-looking view into the roadmap for Nova and the future of Amazon’s AI strategy.

Through this analysis, readers—whether developers, executives, or AI strategists—will gain actionable insights into how Nova is positioned to influence the next phase of enterprise AI deployment, and what it means for the evolving relationship between cloud infrastructure, intelligent systems, and real-world impact.

The Nova Architecture: Powering Multimodal Intelligence

At the core of Amazon’s generative AI strategy lies the Nova model family—a sophisticated and versatile architecture engineered to understand and generate information across multiple modalities. Unlike traditional large language models (LLMs) that primarily focus on textual inputs and outputs, Amazon Nova is purpose-built for multimodal processing, encompassing text, images, audio, and video. This design enables Nova to interpret and reason across diverse data types in a unified framework, offering a fundamentally richer and more integrated AI experience.

The Nova architecture represents a technical milestone for Amazon in the field of foundation models. While prior offerings such as Amazon Titan focused on narrow applications—like embeddings and task-specific fine-tuning—Nova was architected from the ground up to support a wide range of cognitive functions across business and consumer contexts. Leveraging advancements in transformer design, model optimization, and data scaling, Nova provides a robust, flexible platform suitable for a spectrum of enterprise use cases, from content creation to intelligent automation.

Unified Multimodal Input Processing

The most distinguishing feature of Nova is its native support for multimodal inputs. Rather than chaining separate single-modality models together or relying on patched-on subsystems, Nova is designed to accept heterogeneous inputs, such as text interleaved with images, documents, or video, in a single request and reason over them jointly. Whether analyzing a business report embedded with charts, responding to a customer query about a product image, or generating a video description from a transcript, Nova can integrate these inputs and deliver contextually relevant responses.

To accomplish this, the architecture incorporates modality-specific encoders whose outputs are projected, through cross-modal embedding layers, into a shared latent space. This allows the model to establish relational awareness between different forms of data, linking visual cues to textual descriptions or synchronizing audio narration with video frames. The architecture also includes specialized positional encodings for visual and temporal elements, enabling precise alignment and coherence in complex tasks such as video summarization or multimodal question answering.
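To make the idea of a shared latent space concrete, here is a toy NumPy sketch. It is not Nova’s implementation; it assumes two hypothetical projection matrices (`W_image`, `W_text`) that map features from separate image and text encoders into one space, where cosine similarity measures cross-modal relatedness.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical widths: each modality-specific encoder emits vectors of a
# different size, and both are projected into a shared space of D_SHARED.
D_IMAGE, D_TEXT, D_SHARED = 512, 384, 256

# Hypothetical projection matrices (random here; learned in a real model).
W_image = rng.normal(scale=D_IMAGE ** -0.5, size=(D_IMAGE, D_SHARED))
W_text = rng.normal(scale=D_TEXT ** -0.5, size=(D_TEXT, D_SHARED))


def to_shared(features: np.ndarray, projection: np.ndarray) -> np.ndarray:
    """Project encoder outputs into the shared space and L2-normalize each row."""
    z = features @ projection
    return z / np.linalg.norm(z, axis=-1, keepdims=True)


def cross_modal_similarity(image_feats: np.ndarray, text_feats: np.ndarray) -> np.ndarray:
    """Cosine similarity between every image and every text embedding."""
    img = to_shared(image_feats, W_image)
    txt = to_shared(text_feats, W_text)
    return img @ txt.T  # shape: (num_images, num_texts)


# Stand-ins for the outputs of an image encoder (2 images) and a text
# encoder (3 captions).
images = rng.normal(size=(2, D_IMAGE))
captions = rng.normal(size=(3, D_TEXT))
sims = cross_modal_similarity(images, captions)
print(sims.shape)  # (2, 3)
```

With random projections the similarity scores are meaningless; in a trained model the projections are learned (for example, with a contrastive objective) so that matching image-caption pairs score higher than mismatched ones.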

Scalable Transformer Backbone and Memory Optimizations

Nova is powered by a high-efficiency transformer backbone scaled to billions of parameters. Its architecture draws on techniques such as sparse attention and grouped query attention (GQA), which reduce the cost of attention and the size of the key-value cache at inference time, and activation checkpointing, which trades extra computation for lower memory use during training. Together, these optimizations allow Nova to maintain high throughput and low latency, even when operating across multiple data streams, without sacrificing accuracy or response quality.
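Grouped query attention is easier to see in code than in prose. The NumPy sketch below shows the general technique for a single sequence with a causal mask; it is an illustration, not Nova’s code, and the head counts and dimensions are arbitrary.

```python
import numpy as np


def softmax(x: np.ndarray, axis: int = -1) -> np.ndarray:
    x = x - x.max(axis=axis, keepdims=True)  # subtract max for numerical stability
    e = np.exp(x)
    return e / e.sum(axis=axis, keepdims=True)


def grouped_query_attention(q: np.ndarray, k: np.ndarray, v: np.ndarray) -> np.ndarray:
    """Causal grouped query attention for one sequence.

    q:    (num_q_heads, seq_len, head_dim)
    k, v: (num_kv_heads, seq_len, head_dim), where num_q_heads is a multiple
          of num_kv_heads. Each key/value head is shared by a group of
          num_q_heads // num_kv_heads query heads.
    """
    num_q_heads, seq_len, head_dim = q.shape
    num_kv_heads = k.shape[0]
    if num_q_heads % num_kv_heads != 0:
        raise ValueError("num_q_heads must be a multiple of num_kv_heads")
    group_size = num_q_heads // num_kv_heads

    # Expand K/V so that query head h reads key/value head h // group_size.
    k = np.repeat(k, group_size, axis=0)
    v = np.repeat(v, group_size, axis=0)

    scores = q @ k.transpose(0, 2, 1) / np.sqrt(head_dim)  # (heads, T, T)
    # Causal mask: position t may attend only to positions <= t.
    future = np.triu(np.ones((seq_len, seq_len), dtype=bool), 1)
    scores = np.where(future, -np.inf, scores)
    return softmax(scores) @ v  # (heads, T, head_dim)


rng = np.random.default_rng(0)
q = rng.normal(size=(8, 5, 16))  # 8 query heads
k = rng.normal(size=(2, 5, 16))  # 2 key/value heads, each shared by 4 query heads
v = rng.normal(size=(2, 5, 16))
out = grouped_query_attention(q, k, v)
print(out.shape)  # (8, 5, 16)
```

Because only the key and value heads are cached, the key-value cache here is four times smaller (8 query heads over 2 key/value heads) than in standard multi-head attention with 8 key/value heads.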

Moreover, Nova supports extended context windows (up to 300K tokens for Nova Lite and Nova Pro), enabling document-level understanding and long-running conversations. This is particularly valuable in enterprise environments where historical queries, workflow continuity, and multi-turn interactions are critical. Because inference APIs such as Bedrock’s Converse API are stateless, the application maintains the conversation history and passes relevant prior turns back within that window, allowing Nova to draw on accumulated context in complex decision-making scenarios and customer engagement pipelines.
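Application-managed conversation history might look like the following minimal sketch. It assumes the Bedrock Converse API message format and the model ID `amazon.nova-lite-v1:0` (some regions require a cross-region inference profile ID instead); the `Conversation` class and its trimming policy are hypothetical.

```python
from typing import Any, Dict, List

# Assumption: Bedrock model ID for Nova Lite. Some regions require a
# cross-region inference profile ID (e.g. "us.amazon.nova-lite-v1:0").
MODEL_ID = "amazon.nova-lite-v1:0"


class Conversation:
    """Hypothetical helper that keeps multi-turn history in the Converse format."""

    def __init__(self, system_prompt: str, max_turns: int = 20):
        self.system = [{"text": system_prompt}]
        self.messages: List[Dict[str, Any]] = []
        self.max_turns = max_turns  # user/assistant exchanges to resend

    def add_user(self, text: str) -> None:
        self.messages.append({"role": "user", "content": [{"text": text}]})

    def add_assistant(self, text: str) -> None:
        self.messages.append({"role": "assistant", "content": [{"text": text}]})

    def request(self) -> Dict[str, Any]:
        """Keyword arguments for a bedrock-runtime converse() call."""
        recent = self.messages[-2 * self.max_turns:]
        # The resent window must begin with a user message.
        while recent and recent[0]["role"] != "user":
            recent = recent[1:]
        return {
            "modelId": MODEL_ID,
            "system": self.system,
            "messages": recent,
            "inferenceConfig": {"maxTokens": 512, "temperature": 0.3},
        }

    def send(self, client: Any) -> str:
        """Send the trimmed history, record the reply, and return its text."""
        response = client.converse(**self.request())
        reply = response["output"]["message"]["content"][0]["text"]
        self.add_assistant(reply)
        return reply


# Usage with real credentials (not executed here):
# import boto3
# chat = Conversation("You are an internal IT helpdesk assistant.")
# chat.add_user("My VPN disconnects every few minutes.")
# print(chat.send(boto3.client("bedrock-runtime")))
```

Trimming by turn count is a simplification; a production system might trim by estimated token count or summarize older turns instead.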

Instruction-Following and Alignment

One of the key differentiators for Nova lies in its instruction-following proficiency, which has been a significant challenge for multimodal models historically. Amazon has trained Nova on extensive supervised datasets involving human feedback, chain-of-thought reasoning, and role-aligned prompting. The result is a model that not only understands multimodal queries but responds in ways that are aligned with user intent, format expectations, and task objectives.

This capability is reinforced by alignment training and safety filters intended to keep outputs within enterprise-grade safety, ethical, and compliance standards. Through reinforcement learning from human feedback (RLHF) and continuous fine-tuning, Nova’s responses are optimized for relevance and appropriateness, which supports its use, with human oversight, in high-stakes domains such as healthcare, finance, and legal document processing.

Benchmark Performance and Model Comparisons

Nova has been evaluated against leading models across a variety of standardized tasks. These include benchmarks such as:

- VQAv2 (Visual Question Answering)

- COCO (Image Captioning)

- MMLU (Massive Multitask Language Understanding)

- MS MARCO (passage retrieval and ranking)

In these benchmarks, Nova is reported to perform competitively with, and in some cases better than, leading proprietary models. For example, its captioning capabilities are reported to match or exceed those of Google’s Gemini 1.5, while its instruction-following accuracy rivals GPT-4 on structured enterprise tasks.
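For context on what a VQAv2 score means, the benchmark’s standard accuracy metric credits a predicted answer in proportion to how many human annotators gave it: min(matches / 3, 1), averaged over every leave-one-out subset of the ten human answers. The sketch below implements that formula with deliberately light answer normalization; the official evaluation script normalizes more aggressively (punctuation, articles, number words).

```python
from typing import List


def normalize(answer: str) -> str:
    """Light normalization: lowercase and collapse whitespace. The official
    VQA script additionally handles punctuation, articles, and number words."""
    return " ".join(answer.lower().split())


def vqa_accuracy(prediction: str, human_answers: List[str]) -> float:
    """VQAv2-style accuracy: min(matches / 3, 1), averaged over every
    leave-one-out subset of the human answers (normally 10 of them)."""
    if not human_answers:
        raise ValueError("need at least one human answer")
    pred = normalize(prediction)
    answers = [normalize(a) for a in human_answers]
    scores = []
    for i in range(len(answers)):
        others = answers[:i] + answers[i + 1:]
        matches = sum(1 for a in others if a == pred)
        scores.append(min(matches / 3.0, 1.0))
    return sum(scores) / len(scores)


print(vqa_accuracy("yes", ["yes"] * 10))  # 1.0
print(vqa_accuracy("Cat", ["cat"] * 3 + ["dog"] * 7))  # approximately 0.9
```

An answer given by only three of ten annotators still earns about 0.9, which is why VQAv2 scores are not directly comparable to exact-match accuracy.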

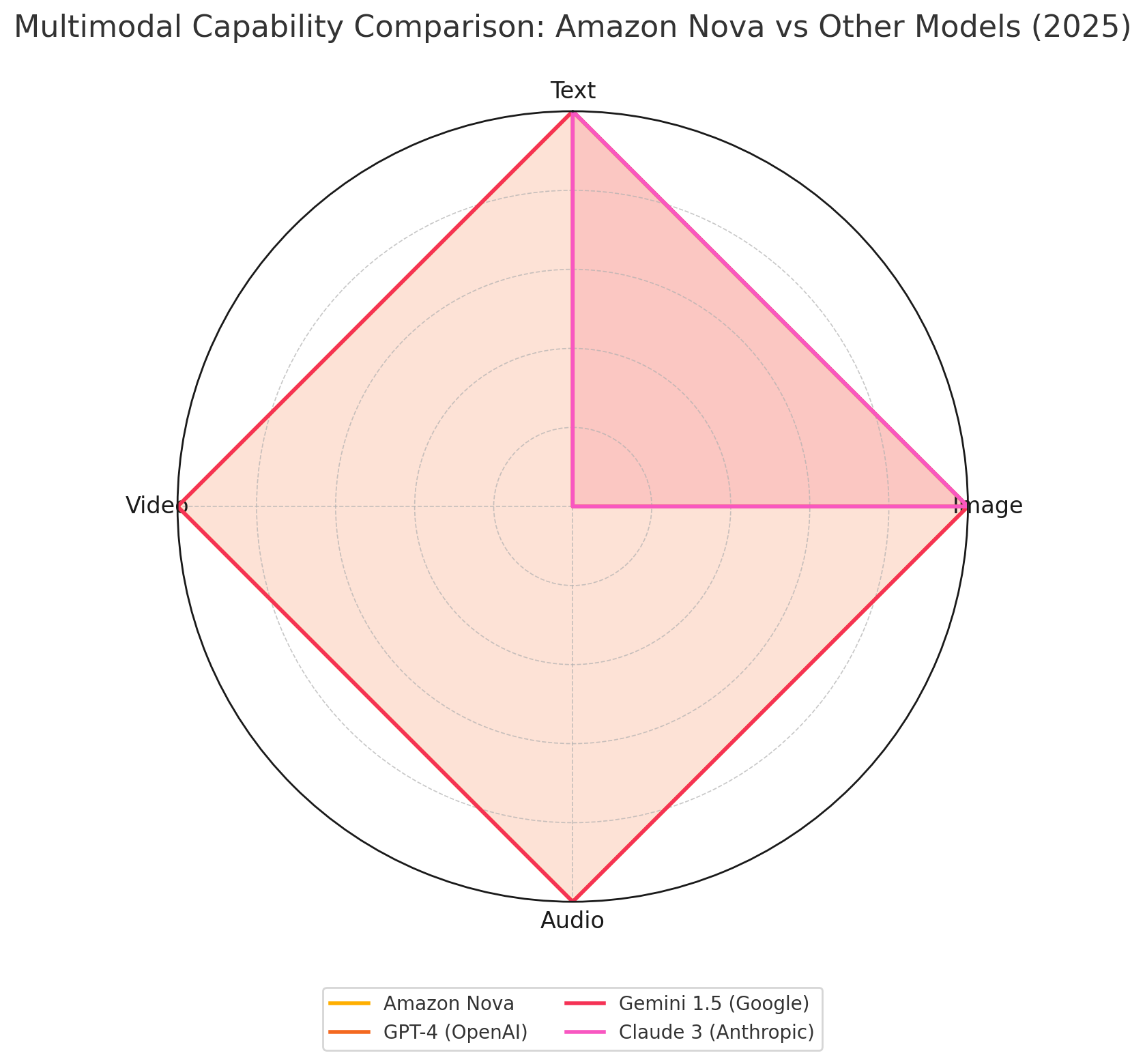

The following chart provides a visual comparison of Nova’s multimodal coverage in relation to its closest competitors:

As the chart illustrates, the Nova family stands out for covering all four core modalities (text, image, audio, and video), whereas GPT-4 and Claude 3 are limited to text and image inputs. This positions Nova as a more versatile foundation for multimodal enterprise solutions.

Optimization for Real-Time Applications

Another compelling feature of Nova is its optimization for low-latency inference, which is crucial for real-time applications such as voice assistants, fraud detection systems, and autonomous agents. Smaller variants such as Nova Micro target the lowest latency and cost, and because Nova is served through managed Bedrock endpoints, applications running on mobile or edge devices can call it without hosting the model locally.

Through quantization, dynamic batching, and scalable caching mechanisms, Nova delivers responsive performance even under high load. These capabilities are particularly relevant in customer-facing settings where user experience and interaction speed are paramount.
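Quantization, one of the techniques named above, stores weights at lower precision. The sketch below shows generic symmetric int8 quantization in NumPy; it illustrates the technique only and makes no claim about how Nova is actually served.

```python
import numpy as np


def quantize_int8(weights: np.ndarray):
    """Symmetric per-tensor int8 quantization: weights ~ scale * q, q in [-127, 127]."""
    max_abs = float(np.max(np.abs(weights)))
    scale = max_abs / 127.0 if max_abs > 0 else 1.0
    q = np.clip(np.round(weights / scale), -127, 127).astype(np.int8)
    return q, scale


def dequantize_int8(q: np.ndarray, scale: float) -> np.ndarray:
    """Map int8 codes back to approximate float32 weights."""
    return q.astype(np.float32) * np.float32(scale)


rng = np.random.default_rng(0)
w = rng.normal(size=(256, 256)).astype(np.float32)
q, scale = quantize_int8(w)
w_hat = dequantize_int8(q, scale)
print(q.nbytes / w.nbytes)  # 0.25
print(float(np.max(np.abs(w - w_hat))) <= scale / 2 + 1e-5)
```

Int8 storage takes a quarter of the memory of float32, and the reconstruction error per weight is bounded by half the scale factor. Production systems usually quantize per channel or per group rather than per tensor to keep that error smaller.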

Adaptability Through Fine-Tuning and Customization

Enterprises that adopt Nova are not limited to out-of-the-box capabilities. The architecture supports both supervised fine-tuning and parameter-efficient adaptation techniques such as LoRA (Low-Rank Adaptation) and prefix tuning. This allows organizations to tailor the model’s behavior for specialized use cases—such as legal clause generation, biomedical image interpretation, or manufacturing anomaly detection—without incurring the high cost of full retraining.
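A minimal NumPy sketch of the LoRA idea follows: the pretrained weight stays frozen, and only two small matrices `A` and `B` of rank `r` are trained, with their product added to the layer’s output scaled by `alpha / r`. This is the generic technique from the LoRA paper, not an AWS API; on AWS, Nova customization is configured through managed jobs rather than code like this.

```python
import numpy as np


class LoRALinear:
    """Frozen linear layer plus a trainable low-rank update: y = W x + (alpha / r) * B A x."""

    def __init__(self, weight: np.ndarray, rank: int = 8, alpha: float = 16.0, seed: int = 0):
        rng = np.random.default_rng(seed)
        d_out, d_in = weight.shape
        self.weight = weight                                # frozen, (d_out, d_in)
        self.A = rng.normal(scale=0.01, size=(rank, d_in))  # trainable, small random init
        self.B = np.zeros((d_out, rank))                    # trainable, zero init
        self.scaling = alpha / rank

    def __call__(self, x: np.ndarray) -> np.ndarray:
        """x: (batch, d_in) -> (batch, d_out)."""
        base = x @ self.weight.T
        update = (x @ self.A.T) @ self.B.T
        return base + self.scaling * update

    def trainable_params(self) -> int:
        return self.A.size + self.B.size


d = 1024
layer = LoRALinear(np.random.default_rng(1).normal(size=(d, d)), rank=8)
x = np.ones((2, d))
print(np.allclose(layer(x), x @ layer.weight.T))  # True, because B starts at zero
print(layer.trainable_params(), layer.weight.size)  # 16384 1048576
```

Because `B` starts at zero, the adapted layer initially behaves exactly like the base layer, and in this 1024-by-1024 example the adapter trains 16,384 parameters against roughly one million frozen ones.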

Amazon also supports retrieval-augmented generation (RAG) workflows with Nova, for example through Knowledge Bases for Amazon Bedrock. This lets enterprises ground model outputs in proprietary knowledge bases and documents while maintaining alignment and traceability.
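The RAG pattern itself is simple: retrieve relevant passages, then place them in the prompt with identifiers the model can cite. The standard-library sketch below uses a toy bag-of-words retriever purely for illustration; a real deployment would use learned embeddings and a vector store or a managed retrieval service. The document IDs and texts are hypothetical.

```python
import math
import re
from collections import Counter
from typing import List, Tuple


def _tokens(text: str) -> Counter:
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def _cosine(a: Counter, b: Counter) -> float:
    dot = sum(a[t] * b[t] for t in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(query: str, docs: List[Tuple[str, str]], k: int = 2) -> List[Tuple[str, str]]:
    """Rank (doc_id, text) pairs by bag-of-words cosine similarity to the query."""
    q = _tokens(query)
    return sorted(docs, key=lambda d: _cosine(q, _tokens(d[1])), reverse=True)[:k]


def build_grounded_prompt(query: str, passages: List[Tuple[str, str]]) -> str:
    """Inline retrieved passages with IDs so the answer can cite its sources."""
    context = "\n".join(f"[{doc_id}] {text}" for doc_id, text in passages)
    return (
        "Answer using only the sources below and cite their IDs.\n"
        f"Sources:\n{context}\n\nQuestion: {query}"
    )


docs = [
    ("policy-7", "Refunds are issued within 14 days of a returned item being received."),
    ("policy-2", "Shipping to Alaska and Hawaii takes five to seven business days."),
    ("hr-1", "Employees accrue vacation days monthly."),
]
question = "How many days until I get a refund for a returned item?"
top = retrieve(question, docs, k=1)
print(top[0][0])  # policy-7
prompt = build_grounded_prompt(question, top)
```

The resulting prompt would then be sent to Nova as the user message; asking for cited source IDs is what makes the answer traceable back to the retrieved documents.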

In summary, the Nova architecture exemplifies Amazon’s commitment to delivering a robust, flexible, and enterprise-ready multimodal AI platform. Through its architectural innovations, performance optimizations, and alignment strategies, Nova offers a compelling alternative to other leading foundation models—especially for organizations operating within the AWS ecosystem.

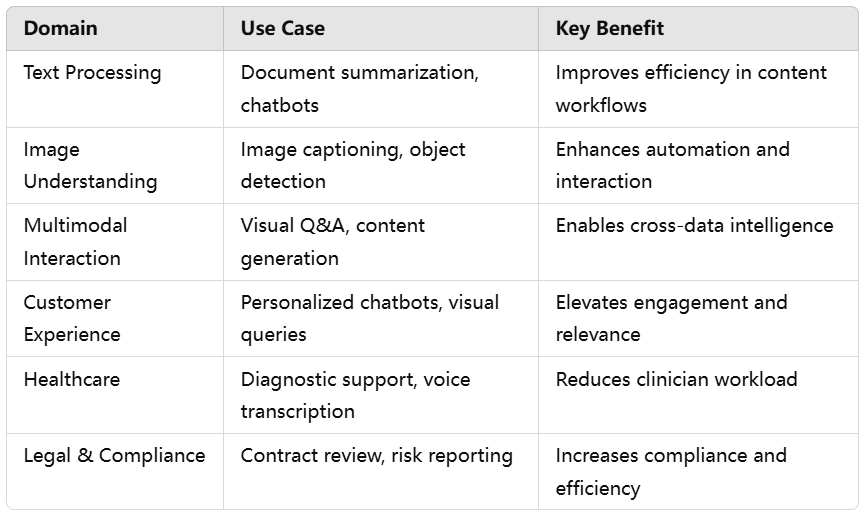

Use Cases Across Text, Vision, and Multimodal Workflows

The true value of a foundation model lies in its capacity to power real-world applications across domains, industries, and user personas. Amazon Nova, with its unified multimodal architecture, offers a broad spectrum of practical use cases that go well beyond conventional language modeling. Designed for both general-purpose deployments and domain-specific tasks, Nova demonstrates exceptional flexibility and efficiency in solving enterprise challenges across text processing, visual understanding, and complex multimodal reasoning.

By enabling seamless processing across multiple modalities—text, image, audio, and video—Nova allows businesses to streamline operations, personalize customer interactions, and unlock new insights from previously underutilized data types. In this section, we explore the most impactful use cases of Nova, categorized by domain and enriched by specific examples and business benefits.

Text Processing: Accelerating Content-Driven Workflows

Text remains the foundational medium for communication, documentation, and digital workflows across every industry. Amazon Nova excels in a range of natural language processing (NLP) tasks, making it a powerful tool for enhancing productivity and automating content-heavy processes.

Key use cases include:

- Document summarization for legal, financial, and academic reports.

- Context-aware text generation, including personalized emails, blogs, and product descriptions.

- Conversational AI, supporting dynamic, multi-turn interactions in customer support or internal knowledge agents.

- Semantic search within enterprise knowledge bases, driven by an understanding of user intent and contextual relevance.

These capabilities translate into faster content creation cycles, improved accuracy in documentation, and more responsive digital interactions. For example, an insurance firm can use Nova to automatically summarize claim files, while a financial services provider might implement Nova for automated regulatory report generation.

Image Understanding: Enabling Visual Intelligence at Scale

Visual data has traditionally required specialized systems for interpretation and classification. Nova breaks this barrier by incorporating native support for image inputs, enabling sophisticated vision tasks within a unified model.

Key image-based use cases include:

- Image captioning for accessibility and media asset management.

- Object detection for manufacturing, retail inventory, or autonomous systems.

- Product tagging and categorization for e-commerce optimization.

- Medical imaging support, such as highlighting abnormalities in radiographs or MRI scans.

For example, a healthcare provider can use Nova to assist radiologists by auto-generating descriptive summaries of diagnostic images. In retail, Nova can automate image tagging for thousands of products, improving catalog accuracy and search discoverability.
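As a sketch of the catalog-tagging example, the request below pairs an image with a JSON-formatted instruction using the Bedrock Converse API’s image content block. The model ID, prompt wording, and output keys are assumptions for illustration; `parse_tags` extracts the span from the first `{` to the last `}`, since models sometimes wrap JSON in surrounding prose.

```python
import json
from typing import Any, Dict

MODEL_ID = "amazon.nova-lite-v1:0"  # assumption: verify model access and ID in your region

TAGGING_PROMPT = (
    "Describe the product in this image as JSON with keys 'category', "
    "'color', and 'tags' (a list of short strings). Return only JSON."
)


def build_tagging_request(image_bytes: bytes, image_format: str = "jpeg") -> Dict[str, Any]:
    """Converse request with one image block followed by a text instruction."""
    if image_format not in {"png", "jpeg", "gif", "webp"}:
        raise ValueError(f"unsupported image format: {image_format}")
    return {
        "modelId": MODEL_ID,
        "messages": [{
            "role": "user",
            "content": [
                {"image": {"format": image_format, "source": {"bytes": image_bytes}}},
                {"text": TAGGING_PROMPT},
            ],
        }],
        "inferenceConfig": {"maxTokens": 300, "temperature": 0.0},
    }


def parse_tags(reply_text: str) -> Dict[str, Any]:
    """Parse the span from the first '{' to the last '}' as JSON."""
    start, end = reply_text.find("{"), reply_text.rfind("}")
    if start == -1 or end < start:
        raise ValueError("no JSON object found in the model reply")
    return json.loads(reply_text[start:end + 1])


# Usage with real credentials (not executed here):
# import boto3
# from pathlib import Path
# request = build_tagging_request(Path("sku-1234.jpg").read_bytes())
# response = boto3.client("bedrock-runtime").converse(**request)
# tags = parse_tags(response["output"]["message"]["content"][0]["text"])
```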

Multimodal Interaction: Bridging Text, Vision, and Context

Nova’s most innovative capability lies in multimodal interaction, where the model processes and integrates multiple types of input simultaneously. This functionality opens the door to complex applications that require cross-referencing between images, text, and structured data.

Typical multimodal use cases include:

- Visual Q&A, such as answering questions about charts, infographics, or annotated documents.

- Cross-modal search, enabling users to query images with text or retrieve documents based on visual content.

- Marketing content generation, where Nova analyzes visual campaign assets to suggest copy and design variations.

- Intelligent assistants that understand documents embedded with diagrams, multimedia, and interactive elements.

These interactions are especially valuable in executive workflows, enterprise collaboration tools, and digital content design, where users frequently work with compound inputs and require context-rich outputs.

Customer Experience: Enhancing Engagement and Personalization

In customer-facing contexts, Nova enables a new standard of personalization and responsiveness by integrating multimodal understanding into service and engagement platforms.

Applications include:

- Conversational interfaces that support both verbal and visual queries.

- Visual chatbots that can interpret and respond to image-based inputs, such as photos of products or documents.

- Personalized recommendations derived from textual, visual, and behavioral cues.

For example, an e-commerce platform can enable customers to upload an image of a fashion item and receive product suggestions based on visual similarity and contextual cues—like season, occasion, or price range—enhanced by real-time dialogue with an AI assistant.

Healthcare: Streamlining Clinical Workflows and Diagnostics

Nova’s multimodal capabilities are particularly well-suited for healthcare environments, where practitioners must synthesize information from clinical notes, lab results, diagnostic images, and patient histories.

Key use cases include:

- Radiology report generation, where Nova interprets medical scans and drafts clinical summaries.

- Text-image correlation, such as linking findings in diagnostic images to electronic medical record (EMR) narratives.

- Transcription and summarization of physician-patient conversations or clinical dictations.

By automating documentation and augmenting diagnostic workflows, Nova can reduce clinician workload and improve the speed and quality of patient care. Importantly, because Amazon Bedrock is a HIPAA-eligible service, Nova can be used in HIPAA-compliant deployments on AWS, provided the organization has a Business Associate Addendum (BAA) with AWS and configures its workloads accordingly.

Legal and Compliance: Accelerating Risk Analysis and Reporting

Legal departments and compliance teams can leverage Nova to enhance the accuracy and efficiency of document review processes, which often involve dense, multimodal content such as contracts, tables, and regulatory submissions.

Applications include:

- Contract analysis and clause identification.

- Legal content generation, such as non-disclosure agreements or compliance notices.

- Risk reporting, including extraction and visualization of metrics from financial reports or audit documents.

Nova’s support for tabular data and embedded visuals makes it well suited to identifying nuanced relationships and highlighting regulatory inconsistencies.

In sum, Amazon Nova’s capacity to operate across modalities translates into versatile applications with measurable enterprise impact. By consolidating traditionally siloed AI functions—text processing, image recognition, audio transcription—into a single model framework, Nova enables organizations to build intelligent systems that are not only more powerful but also more coherent, context-aware, and adaptive.

In the following section, we will explore how Nova is being integrated into the broader AWS ecosystem, enabling seamless deployment, fine-tuning, and governance through tools such as Bedrock, SageMaker, and Lambda.

Enterprise Integration with AWS Ecosystem

One of the defining advantages of Amazon’s Nova AI models is their seamless integration within the broader AWS cloud ecosystem. This integration not only ensures operational scalability and technical compatibility but also aligns with enterprise-grade requirements around security, governance, and developer efficiency. In contrast to standalone AI platforms or open-source deployments that require extensive infrastructure management, Amazon Nova benefits from a native cloud foundation—providing organizations with plug-and-play capabilities that extend across virtually all layers of enterprise IT architecture.

As enterprises increasingly embed generative AI into mission-critical workflows, the ability to deploy, monitor, and fine-tune models within secure and compliant cloud environments becomes a strategic imperative. Nova, served through Amazon Bedrock and callable from Amazon SageMaker, AWS Lambda, and applications running on Amazon EC2, pairs its advanced multimodal capabilities with this kind of operational readiness.

Amazon Bedrock: Simplified Access to Foundation Models

At the center of Nova’s integration strategy is Amazon Bedrock, a fully managed service that offers serverless access to a curated portfolio of foundation models—including those from AI21 Labs, Anthropic, Cohere, and Stability AI, alongside Amazon’s own Nova models. This abstraction layer allows developers to interact with Nova through standard API calls without managing infrastructure or worrying about scaling complexities.

Key benefits of Nova via Bedrock include:

- On-demand inference with automatic scaling for fluctuating workloads.

- Security and isolation through integration with IAM roles, VPCs, and encryption controls.

- Pay-as-you-go pricing, allowing fine-grained budget control for prototyping and production scenarios.

- Model evaluation and comparison via the Bedrock playground, enabling side-by-side testing of different models.

Bedrock’s abstraction is especially valuable for enterprise developers who want to embed Gen AI capabilities into applications without needing to become experts in model architecture or deployment logistics.
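A minimal sketch of calling Nova through Bedrock from Python follows. It assumes the Converse API of a boto3 `bedrock-runtime` client and the model ID `amazon.nova-pro-v1:0`; depending on region, you may need a cross-region inference profile ID (for example, with a `us.` prefix), and model access must be enabled in the Bedrock console. The client is passed in, so the function can be exercised with a test double.

```python
from typing import Any, Dict


def ask_nova(client: Any, prompt: str,
             model_id: str = "amazon.nova-pro-v1:0",  # assumption; may need an inference profile ID
             max_tokens: int = 512) -> Dict[str, Any]:
    """Send a single-turn prompt via the Converse API and return text plus usage.

    `client` is a boto3 "bedrock-runtime" client, or any object with a
    compatible converse() method (handy for offline tests).
    """
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": prompt}]}],
        inferenceConfig={"maxTokens": max_tokens, "temperature": 0.5},
    )
    usage = response.get("usage", {})
    return {
        "text": response["output"]["message"]["content"][0]["text"],
        "stop_reason": response.get("stopReason"),
        "input_tokens": usage.get("inputTokens", 0),
        "output_tokens": usage.get("outputTokens", 0),
    }


# Usage with real credentials (not executed here):
# import boto3
# runtime = boto3.client("bedrock-runtime", region_name="us-east-1")
# result = ask_nova(runtime, "Summarize the benefits of serverless inference in two sentences.")
# print(result["text"], result["input_tokens"], result["output_tokens"])
```

Returning token counts alongside the text makes it straightforward to log usage per request for cost tracking.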

Amazon SageMaker: Full Lifecycle ML Ops for Nova

For enterprises with more specialized needs—such as fine-tuning Nova models on proprietary datasets or integrating them into machine learning pipelines—Amazon SageMaker provides the necessary depth. As a fully managed ML platform, SageMaker allows teams to train, deploy, and monitor custom AI models using Jupyter notebooks, pipelines, and model registries.

Within the context of Nova, SageMaker enables:

- Fine-tuning and domain adaptation through parameter-efficient techniques such as LoRA or adapters.

- Batch inference for large-scale processing of documents, images, or audio files.

- Model explainability and bias detection, particularly important for regulated industries.

- End-to-end MLOps workflows integrating data preprocessing, training, testing, and deployment.

The ability to combine Nova’s foundational intelligence with enterprise-specific data assets via SageMaker gives organizations a competitive edge in tailoring Gen AI to their verticals.
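Whichever service runs the customization job, training data is typically staged in Amazon S3 as JSON Lines. The sketch below assumes the conversation-style `bedrock-conversation-2024` record schema that Bedrock documents for Nova fine-tuning; treat the exact field names as an assumption to verify against current AWS documentation. The example pairs are hypothetical.

```python
import json
from typing import Any, Dict, Iterable, Tuple

# Assumption: record layout Bedrock documents for Nova fine-tuning data.
SCHEMA_VERSION = "bedrock-conversation-2024"


def to_training_record(system_prompt: str, user_text: str, assistant_text: str) -> Dict[str, Any]:
    """One supervised example: a user turn and the desired assistant reply."""
    return {
        "schemaVersion": SCHEMA_VERSION,
        "system": [{"text": system_prompt}],
        "messages": [
            {"role": "user", "content": [{"text": user_text}]},
            {"role": "assistant", "content": [{"text": assistant_text}]},
        ],
    }


def write_jsonl(path: str, system_prompt: str, pairs: Iterable[Tuple[str, str]]) -> int:
    """Write one JSON record per line and return how many were written."""
    written = 0
    with open(path, "w", encoding="utf-8") as f:
        for user_text, assistant_text in pairs:
            if not user_text.strip() or not assistant_text.strip():
                continue  # skip incomplete examples instead of training on them
            record = to_training_record(system_prompt, user_text, assistant_text)
            f.write(json.dumps(record, ensure_ascii=False) + "\n")
            written += 1
    return written
```

Counting written records gives a quick sanity check before the file is uploaded and referenced by a customization job.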

Integration with Lambda, Step Functions, and Event-Driven Architecture

Nova is also well-suited to real-time, event-driven environments, thanks to tight integration with AWS Lambda, Step Functions, and EventBridge. These services allow enterprises to create responsive systems that trigger Nova in response to specific events—such as customer queries, document uploads, or operational alerts.

For instance:

- A support automation pipeline can use Lambda to invoke Nova when a customer submits an image or message, generate a response, and route it back via chatbot or email.

- A compliance monitoring workflow can call Nova to analyze legal documents uploaded to an S3 bucket, flagging non-compliant clauses or risks.

These integrations enable serverless AI deployments that are scalable, cost-efficient, and aligned with microservice-based architectures.
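The S3-triggered compliance workflow could be structured as in the sketch below. S3 event notifications deliver bucket names and URL-encoded object keys under `Records[].s3`; `analyze` is a hypothetical function that would fetch the document and call Nova, injected so the routing logic can be tested without AWS access.

```python
import urllib.parse
from typing import Any, Callable, Dict, List, Tuple


def s3_objects_from_event(event: Dict[str, Any]) -> List[Tuple[str, str]]:
    """Extract (bucket, key) pairs from an S3 event notification.

    Object keys arrive URL-encoded (spaces become '+'), so they are decoded here.
    """
    objects = []
    for record in event.get("Records", []):
        s3 = record.get("s3", {})
        bucket = s3.get("bucket", {}).get("name")
        key = s3.get("object", {}).get("key")
        if bucket and key:
            objects.append((bucket, urllib.parse.unquote_plus(key)))
    return objects


def make_handler(analyze: Callable[[str, str], str]) -> Callable[[Dict[str, Any], Any], Dict[str, Any]]:
    """Wrap a hypothetical analyze(bucket, key) -> findings function as a Lambda handler."""

    def handler(event: Dict[str, Any], context: Any) -> Dict[str, Any]:
        results = [
            {"bucket": bucket, "key": key, "findings": analyze(bucket, key)}
            for bucket, key in s3_objects_from_event(event)
        ]
        return {"processed": len(results), "results": results}

    return handler
```

In a deployed function, the handler would be bound at module level, for example `handler = make_handler(analyze_with_nova)`, where `analyze_with_nova` is again a hypothetical name.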

Security, Governance, and Compliance

Enterprise AI adoption requires stringent controls over data access, model behavior, and compliance. Within the AWS environment, Nova benefits from robust native security features that are essential for trust and risk management.

These include:

- Data encryption at rest and in transit, integrated with AWS KMS (Key Management Service).

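- Private connectivity from a VPC through AWS PrivateLink, keeping traffic off the public internet.

- CloudTrail logging of API activity, complemented by Bedrock model invocation logging for auditable records of prompts and responses.

- Compliance programs such as ISO and SOC attestations, HIPAA eligibility, and FedRAMP authorization, making Nova suitable for healthcare, government, and financial applications (coverage varies by service and region, so confirm scope for each deployment).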

Additionally, Amazon Bedrock Guardrails provides content filtering, toxicity control, and customizable moderation policies such as denied topics, all of which are crucial for public-facing applications and sensitive domains.
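Attaching a guardrail to a Nova request is a matter of configuration. The sketch below assumes a guardrail already created in Bedrock (its identifier and version here are hypothetical) and uses the Converse API’s `guardrailConfig` parameter; when the guardrail intervenes, the response’s `stopReason` is `guardrail_intervened`.

```python
from typing import Any, Dict


def guarded_request(prompt: str, guardrail_id: str, guardrail_version: str,
                    model_id: str = "amazon.nova-pro-v1:0") -> Dict[str, Any]:
    """Converse kwargs that apply a pre-created Bedrock guardrail to input and output."""
    return {
        "modelId": model_id,  # assumption; may need a regional inference profile ID
        "system": [{"text": "You are a customer-support assistant. Do not give medical or legal advice."}],
        "messages": [{"role": "user", "content": [{"text": prompt}]}],
        "guardrailConfig": {
            "guardrailIdentifier": guardrail_id,  # hypothetical ID from the Bedrock console
            "guardrailVersion": guardrail_version,
            "trace": "enabled",  # include guardrail assessment details in the response
        },
    }


def was_blocked(response: Dict[str, Any]) -> bool:
    """True when the guardrail intervened instead of returning model output."""
    return response.get("stopReason") == "guardrail_intervened"


# Usage with real credentials (not executed here):
# import boto3
# response = boto3.client("bedrock-runtime").converse(**guarded_request("Hi", "gr-example", "1"))
# if was_blocked(response): route the conversation to a human agent
```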

Developer Experience and Tooling

Nova's integration within the AWS ecosystem extends to a comprehensive developer experience. This includes:

- SDKs in multiple languages (Python, JavaScript, Java) for API-based model interaction.

- CLI tools and Terraform modules for infrastructure as code deployments.

- SageMaker Studio and Bedrock console UIs for no-code/low-code experimentation and visualization.

For enterprise developers, this environment reduces the learning curve and accelerates time to value. Developers can incorporate Nova into chatbots, internal analytics dashboards, mobile apps, or IoT devices using familiar AWS constructs and deployment patterns.

Cross-Service Synergy and Ecosystem Reach

Nova’s value is amplified by its synergy with other AWS services. For example:

- Amazon Comprehend can be used in tandem with Nova to extract structured entities from generated content.

- Amazon Translate and Transcribe can serve as pre-processors or post-processors for multilingual or audio-centric workflows.

- Amazon QuickSight can present Nova-generated insights in executive dashboards.

This interconnectedness reflects Amazon’s long-standing strategy of creating composable services that scale independently but operate cohesively. By embedding Nova into this ecosystem, AWS ensures that Gen AI becomes a native feature across the enterprise stack rather than a bolted-on tool.
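As an example of the Comprehend pairing, the sketch below runs entity detection over Nova-generated text and keeps only high-confidence entities. It assumes a boto3 `comprehend` client, whose `detect_entities` call returns an `Entities` list with `Type`, `Text`, and `Score` fields, and an arbitrary 0.8 score threshold.

```python
from typing import Any, Dict, List


def extract_entities(comprehend: Any, generated_text: str,
                     min_score: float = 0.8) -> List[Dict[str, str]]:
    """Run Amazon Comprehend entity detection over Nova-generated text.

    `comprehend` is a boto3 "comprehend" client, or a test double with the
    same detect_entities method. Entities scoring below min_score are dropped.
    """
    response = comprehend.detect_entities(Text=generated_text, LanguageCode="en")
    return [
        {"type": e["Type"], "text": e["Text"]}
        for e in response.get("Entities", [])
        if e.get("Score", 0.0) >= min_score
    ]


# Usage with real credentials (not executed here):
# import boto3
# entities = extract_entities(boto3.client("comprehend"), nova_summary_text)
```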

In conclusion, Amazon Nova’s tight alignment with AWS services makes it an attractive proposition for enterprises seeking operational scalability, regulatory compliance, and developer convenience in deploying Gen AI. Whether accessed via Bedrock for ease of use or SageMaker for custom development, Nova offers flexible pathways for realizing the full potential of multimodal artificial intelligence within real-world enterprise contexts.

Challenges and Considerations for Nova AI Deployment

While Amazon Nova presents a powerful and compelling foundation model suite capable of addressing complex multimodal enterprise needs, its deployment at scale comes with a range of strategic, operational, and ethical considerations. As with any advanced generative AI system, successful implementation of Nova requires more than just access to the model—it demands clear foresight, robust infrastructure, and a governance framework that aligns with enterprise risk tolerance, compliance obligations, and workforce capabilities.

In this section, we explore the primary challenges organizations face when deploying Nova AI across different operational environments. These include cost management, model customization, workforce enablement, technical integration, and responsible AI governance.

Cost of Inference and Resource Optimization

One of the most immediate considerations for enterprises adopting Nova is the cost associated with inference at scale. Multimodal foundation models are resource-intensive by design, particularly when tasked with processing high-resolution images, long-context textual data, or synchronized video and audio streams. As Nova supports real-time and batch processing across these modalities, the associated GPU consumption and memory demands can quickly escalate.

For organizations deploying Nova via Amazon Bedrock, on-demand usage is billed per input and output token, while Provisioned Throughput, which reserves capacity for high or predictable concurrency, is billed per model unit by the hour. Meanwhile, those utilizing SageMaker for fine-tuning or high-throughput applications must consider instance costs, data transfer fees, and storage utilization.

To address these challenges, enterprises are advised to:

- Leverage parameter-efficient tuning techniques (e.g., LoRA) instead of full model retraining.

- Optimize for model distillation and quantization in low-latency use cases.

- Employ batch processing and asynchronous workflows to reduce idle compute overhead.

Failure to align infrastructure cost optimization with model usage patterns can limit Nova’s long-term feasibility, particularly for startups and cost-sensitive verticals.
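Back-of-the-envelope estimates make these trade-offs concrete. The sketch below estimates monthly on-demand spend from request volume and average token counts; the per-1,000-token prices are placeholders, not actual Nova rates, so substitute figures from the current Bedrock pricing page.

```python
from dataclasses import dataclass


@dataclass
class TokenPricing:
    """On-demand prices per 1,000 tokens (placeholder values in the example below)."""
    input_per_1k: float
    output_per_1k: float


def monthly_cost(pricing: TokenPricing, requests_per_day: int,
                 avg_input_tokens: int, avg_output_tokens: int, days: int = 30) -> float:
    """Estimated spend: per-request token cost times request volume."""
    per_request = (
        (avg_input_tokens / 1000) * pricing.input_per_1k
        + (avg_output_tokens / 1000) * pricing.output_per_1k
    )
    return per_request * requests_per_day * days


placeholder = TokenPricing(input_per_1k=0.001, output_per_1k=0.004)  # hypothetical, not real rates
# 10,000 requests per day, 2,000 input and 300 output tokens per request.
print(round(monthly_cost(placeholder, 10_000, 2_000, 300), 2))  # 960.0
# Same workload with prompts trimmed to 1,000 input tokens.
print(round(monthly_cost(placeholder, 10_000, 1_000, 300), 2))  # 660.0
```

Halving the average prompt length in this hypothetical workload cuts the estimate from 960 to 660, which illustrates how much input length matters in retrieval-heavy workloads.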

Model Customization and Domain-Specific Adaptation

While Nova offers general-purpose intelligence across multiple domains, many enterprises require models that are contextualized to specific industry knowledge, customer language, or proprietary workflows. Out-of-the-box performance may not be sufficient for applications involving niche legal terminology, biomedical imaging, or internal product taxonomies.

Amazon provides mechanisms for customization, including:

- Fine-tuning through Amazon Bedrock’s model customization features, allowing training on proprietary data.

- Retrieval-Augmented Generation (RAG) pipelines that inject enterprise knowledge into responses.

- Prompt engineering techniques and system-level instructions for non-invasive behavior shaping.

However, effective customization introduces its own challenges:

- Requires annotated datasets and domain expertise.

- Increases risk of model overfitting or performance drift.

- Demands robust evaluation frameworks to validate outputs post-customization.

Without careful planning, attempts to specialize Nova may reduce performance consistency or introduce compliance risks due to misalignment with ground truth data.

Integration with Legacy Systems and Data Silos

Integrating Nova into existing IT environments is another critical challenge—particularly in enterprises with legacy architectures, fragmented data pipelines, or heterogeneous technology stacks. For Nova to deliver maximum value, it must interact fluidly with upstream data repositories and downstream decision systems.

Common integration hurdles include:

- Data normalization and formatting across modalities.

- Lack of APIs or middleware to bridge on-premises systems with cloud-deployed Nova endpoints.

- Insufficient support for low-latency inference in embedded or edge environments.

To overcome these constraints, organizations may require investment in:

- ETL modernization to support real-time and multimodal data ingestion.

- Deployment of event-driven architectures using Lambda and EventBridge.

- Use of containerized microservices to modularize Nova-powered workflows.

Without architectural modernization, Nova may remain underutilized, confined to isolated pilots rather than organization-wide transformation.
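For the first hurdle above, data normalization across modalities, a small adapter can map files exported from legacy systems into Converse API content blocks. The format lists below are assumptions based on the Converse API’s image, document, and video block types; which formats a given Nova model accepts should be checked in current documentation. Document names are sanitized because the API restricts the characters allowed in them.

```python
import re
from pathlib import PurePath
from typing import Any, Dict

# Assumed format names for Converse content blocks; check which formats
# your chosen Nova model accepts.
IMAGE_FORMATS = {"png": "png", "jpg": "jpeg", "jpeg": "jpeg", "gif": "gif", "webp": "webp"}
DOCUMENT_FORMATS = {"pdf", "csv", "doc", "docx", "xls", "xlsx", "html", "txt", "md"}
VIDEO_FORMATS = {"mp4", "mov", "mkv", "webm"}


def to_content_block(filename: str, data: bytes) -> Dict[str, Any]:
    """Map a legacy-system file to an image, document, or video content block."""
    path = PurePath(filename)
    ext = path.suffix.lower().lstrip(".")
    if ext in IMAGE_FORMATS:
        return {"image": {"format": IMAGE_FORMATS[ext], "source": {"bytes": data}}}
    if ext in DOCUMENT_FORMATS:
        # Keep only letters, digits, spaces, hyphens, parentheses, and square
        # brackets in the document name, and collapse repeated whitespace.
        name = re.sub(r"[^A-Za-z0-9\-()\[\] ]", "-", path.stem)
        name = re.sub(r"\s+", " ", name).strip() or "document"
        return {"document": {"format": ext, "name": name, "source": {"bytes": data}}}
    if ext in VIDEO_FORMATS:
        return {"video": {"format": ext, "source": {"bytes": data}}}
    raise ValueError(f"unsupported file type: {filename!r}")
```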

Workforce Readiness and Skill Gaps

Advanced AI deployment is not solely a technical challenge—it is also a human capital challenge. The introduction of Nova into enterprise workflows necessitates new skill sets across roles, from IT and product development to legal and customer service.

Key capability gaps often include:

- Prompt engineering for maximizing model utility and output quality.

- Multimodal data design, requiring fluency in aligning structured, visual, and unstructured content.

- AI governance and auditability, including understanding model behavior and flagging anomalous responses.

To close these gaps, enterprises must invest in:

- Internal training programs focused on Gen AI applications and best practices.

- Cross-functional AI enablement teams to support business units in adoption.

- Hiring or upskilling for emerging roles such as AI product managers, AI ethicists, and model validators.

Failure to build internal expertise can lead to poor ROI, ethical missteps, or lack of trust in Nova-driven processes.

Responsible AI and Regulatory Compliance

Perhaps the most pressing consideration in deploying Nova is ensuring that its outputs align with ethical principles, data protection laws, and industry-specific regulations. As a powerful generative system capable of creating textual summaries, visual interpretations, and predictive content, Nova raises serious questions regarding misinformation, bias, privacy, and liability.

Key regulatory and governance challenges include:

- Compliance with GDPR, HIPAA, the EU AI Act, and other regional mandates.

- Avoidance of toxic content generation, particularly in customer-facing applications.

- Ensuring data provenance and output traceability for audit readiness.
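Provenance and traceability can be supported by writing a tamper-evident record for every model call. The sketch below is one possible design, not an AWS feature: each record stores SHA-256 hashes of the input and output, the source document IDs that fed the prompt, and the hash of the previous record, so editing any past entry breaks the chain. `audit_record` and `verify_chain` are hypothetical names, and payloads are assumed to be JSON-serializable (text-only messages).

```python
import hashlib
import json
from datetime import datetime, timezone
from typing import List, Sequence


def sha256_hex(payload) -> str:
    # Canonical JSON (sorted keys, fixed separators) so equal payloads always hash identically
    canonical = json.dumps(payload, sort_keys=True, separators=(",", ":"), ensure_ascii=False)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def audit_record(model_id: str, messages, output_text: str,
                 source_ids: Sequence[str], prev_hash: str = "") -> dict:
    """Build one hash-chained audit entry for a single model invocation."""
    record = {
        "timestamp": datetime.now(timezone.utc).isoformat(),
        "model_id": model_id,
        "input_sha256": sha256_hex(messages),
        "output_sha256": sha256_hex(output_text),
        "source_ids": sorted(source_ids),  # provenance: which records fed the prompt
        "prev_hash": prev_hash,
    }
    record["record_hash"] = sha256_hex(record)
    return record


def verify_chain(records: List[dict]) -> bool:
    """Recompute every hash; any edited or reordered entry makes verification fail."""
    prev = ""
    for r in records:
        body = {k: v for k, v in r.items() if k != "record_hash"}
        if r["prev_hash"] != prev or sha256_hex(body) != r["record_hash"]:
            return False
        prev = r["record_hash"]
    return True
```

Where the records are stored (and how long they are retained) would be set by the organization's own audit and regulatory requirements.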

Amazon provides several safeguards, including content moderation through Amazon Bedrock Guardrails, invocation logging, and encryption by default, but ultimate responsibility rests with the enterprise.
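As a sketch of how such a safeguard is wired in, the Bedrock Converse API accepts a `guardrailConfig` naming a guardrail identifier and version, and reports `stopReason == "guardrail_intervened"` when the guardrail blocks content. The guardrail ID, the fallback text, and the `GuardedReply` type below are placeholders; substituting an application-defined message and flagging the event is one design choice among several.

```python
from dataclasses import dataclass

# Application-defined text shown to users when the guardrail intervenes (placeholder wording)
FALLBACK_MESSAGE = "Sorry, I can't help with that request."


@dataclass
class GuardedReply:
    text: str
    intervened: bool  # True when Bedrock reports a guardrail intervention


def guarded_reply(client, model_id: str, user_text: str,
                  guardrail_id: str, guardrail_version: str = "1") -> GuardedReply:
    """Call Converse with a guardrail attached and surface interventions explicitly."""
    response = client.converse(
        modelId=model_id,
        messages=[{"role": "user", "content": [{"text": user_text}]}],
        guardrailConfig={
            "guardrailIdentifier": guardrail_id,  # placeholder: ID or ARN of a guardrail you created
            "guardrailVersion": guardrail_version,
        },
    )
    if response.get("stopReason") == "guardrail_intervened":
        return GuardedReply(FALLBACK_MESSAGE, intervened=True)
    text = "".join(block.get("text", "") for block in response["output"]["message"]["content"])
    return GuardedReply(text, intervened=False)
```

The `intervened` flag gives downstream code a simple hook for logging blocked requests or routing them to human review.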

Responsible deployment of Nova requires:

- Defining acceptable use policies tailored to the organization.

- Implementing human-in-the-loop review processes for sensitive tasks.

- Using bias detection and explainability tools to monitor model behavior over time.
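A human-in-the-loop process can be enforced in code by holding certain drafts until a reviewer signs off. The sketch below is hypothetical throughout: the task names in `SENSITIVE_TASKS` stand in for an organization's acceptable use policy, and the `guardrail_flagged` field assumes an upstream check like a Bedrock guardrail result.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Optional

# Hypothetical acceptable use policy: task types that always require human sign-off
SENSITIVE_TASKS = {"medical_summary", "credit_decision", "legal_advice"}


@dataclass
class Draft:
    task: str
    output: str
    guardrail_flagged: bool = False


@dataclass
class ReviewRouter:
    queue: deque = field(default_factory=deque)
    decisions: list = field(default_factory=list)  # record of human decisions for audits

    def route(self, draft: Draft) -> str:
        """Hold sensitive or flagged drafts for review; release everything else."""
        if draft.task in SENSITIVE_TASKS or draft.guardrail_flagged:
            self.queue.append(draft)
            return "pending_review"
        return "auto_approved"

    def review_next(self, reviewer: str, approve: bool,
                    edited_output: Optional[str] = None) -> dict:
        """Apply a reviewer's decision to the oldest waiting draft (IndexError if none)."""
        draft = self.queue.popleft()
        decision = {
            "task": draft.task,
            "reviewer": reviewer,
            "approved": approve,
            "final_output": (edited_output or draft.output) if approve else None,
        }
        self.decisions.append(decision)
        return decision
```

A production version would persist the queue and the decisions, but the routing rule itself stays a small, deterministic, auditable piece of code.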

Organizations failing to address these issues may face reputational harm, legal exposure, and diminished trust from customers and regulators.

The deployment of Amazon Nova within enterprise environments offers transformational potential—but only when accompanied by careful planning, technical integration, organizational readiness, and ethical foresight. From infrastructure costs and domain alignment to governance frameworks and user training, each layer of adoption must be treated with the same rigor as traditional enterprise systems.

The Future of Nova AI and Multimodal Generative Intelligence

As of 2025, Amazon’s Nova AI models have solidified their position as powerful, enterprise-grade tools capable of bridging the gap between natural language understanding, computer vision, and multimodal reasoning. However, the pace of innovation in the AI sector is relentless, and the Nova model family—while highly capable today—represents only the beginning of a broader evolution in intelligent systems. In this final section, we examine the trajectory of Nova AI, the anticipated advancements in generative intelligence, and the strategic implications for enterprises, developers, and the global AI ecosystem.

Toward More Capable and Contextual Models

The next phase in the development of the Nova model family will likely focus on increasing contextual awareness, reasoning depth, and modality interactivity. While current Nova models are capable of ingesting and processing multiple data types, the evolution of these capabilities will center on enabling richer cognitive tasks, such as:

- Cross-modal reasoning over long sequences (e.g., analyzing video, transcript, and related documents simultaneously).

- Advanced planning and decision-making using agent-based frameworks.

- Persistent memory across interactions, sessions, and historical user data.
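Today, memory across interactions is usually supplied by the application rather than the model: prior turns are replayed in each request. The sketch below assumes the Converse API's `messages` format (alternating user and assistant turns, starting with a user turn) and keeps history in process memory only. `SessionMemory` is a hypothetical helper; durable memory across sessions would need an external store.

```python
from collections import defaultdict
from typing import List


class SessionMemory:
    """Per-session conversation history, trimmed to a fixed window of messages."""

    def __init__(self, max_messages: int = 20):
        # An even limit keeps whole user/assistant pairs, so the window starts with a user turn
        if max_messages < 2 or max_messages % 2:
            raise ValueError("max_messages must be an even number >= 2")
        self.max_messages = max_messages
        self._sessions = defaultdict(list)

    def messages_for(self, session_id: str, user_text: str) -> List[dict]:
        """Build the `messages` list for the next call: recent history plus the new turn."""
        history = self._sessions[session_id]
        keep = self.max_messages - 2  # leave room for the new user turn and the reply
        window = history[-keep:] if keep else []  # guard: history[-0:] would return everything
        return window + [{"role": "user", "content": [{"text": user_text}]}]

    def record(self, session_id: str, user_text: str, assistant_text: str) -> None:
        """Store a completed exchange after the model has answered."""
        self._sessions[session_id].extend([
            {"role": "user", "content": [{"text": user_text}]},
            {"role": "assistant", "content": [{"text": assistant_text}]},
        ])
```

A fixed window is the simplest trimming policy; summarizing older turns into a single message is a common alternative when long histories matter.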

These capabilities may emerge through architectural enhancements such as Mixture-of-Experts (MoE) layers, hierarchical attention mechanisms, and continuous learning loops that let the model refine its understanding over time. If they do, Nova would move beyond static inference to become an adaptive system capable of context-driven personalization and intelligent task orchestration.
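To make the MoE idea concrete, the sketch below shows generic sparse top-k gating: a gate scores every expert, only the k best are evaluated, and their outputs are mixed with renormalized softmax weights. It illustrates the technique in general and is not a description of Nova's internals. Inputs are simplified to scalars and the gate logits are passed in directly rather than produced by a learned gating layer.

```python
import math
from typing import Callable, List, Sequence, Tuple


def softmax(xs: Sequence[float]) -> List[float]:
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]


def top_k_route(gate_logits: Sequence[float], k: int) -> List[Tuple[int, float]]:
    """Select the k highest-scoring experts and renormalize their weights to sum to 1."""
    if not 1 <= k <= len(gate_logits):
        raise ValueError("k must be between 1 and the number of experts")
    ranked = sorted(range(len(gate_logits)), key=lambda i: gate_logits[i], reverse=True)
    chosen = ranked[:k]
    weights = softmax([gate_logits[i] for i in chosen])
    return list(zip(chosen, weights))


def moe_forward(x: float, experts: Sequence[Callable[[float], float]],
                gate_logits: Sequence[float], k: int = 2) -> float:
    # Only the k selected experts run, so total parameters can grow without growing per-input compute
    return sum(w * experts[i](x) for i, w in top_k_route(gate_logits, k))
```

With four experts and k = 2, half of the experts are skipped for every input, which is the source of the efficiency argument usually made for MoE layers.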

Introduction of Nova 2 and Expanded Modalities

According to industry analysts and AWS roadmap previews, a forthcoming Nova 2 model suite is expected to introduce several key enhancements:

- Native audio and speech generation, allowing Nova to serve as both an understanding and voice output engine.

- Expanded video analysis capabilities, with frame-level granularity and temporal understanding suitable for surveillance, entertainment, and industrial safety applications.

- Integration of 3D spatial reasoning for use cases in robotics, augmented reality, and manufacturing design.

These advancements will place Nova in direct competition with next-generation releases from OpenAI, Google DeepMind, and Meta. More importantly, they will extend Nova’s applicability into domains that have traditionally relied on siloed AI tools, offering a unified platform for end-to-end multimodal interaction.

The anticipated release of Nova 2 will also emphasize fine-tunability, giving enterprises greater control over behavior alignment, tone, and knowledge integration—features critical to customer experience, brand identity, and regulatory alignment.

Deployment in Edge, Devices, and Consumer Platforms

Looking beyond cloud deployment, Amazon is well-positioned to bring Nova into edge environments and consumer-grade devices. This represents a strategic frontier that will differentiate Nova from other foundation models that remain primarily cloud-bound.

Potential applications include:

- Alexa integration, transforming voice assistants into contextually aware, multimodal agents that understand and generate personalized responses.

- Amazon Echo devices enhanced with visual recognition and cross-device interaction, enabling more natural household automation.

- Fire TV and Kindle ecosystems, augmented with interactive content summarization, scene analysis, and user-guided content navigation.

In logistics and industrial contexts, Nova could be embedded in:

- Warehouse robotics, where multimodal models interpret sensor data, route optimization maps, and voice commands.

- Smart retail kiosks, providing personalized assistance based on visual identification and user queries.

- Last-mile delivery systems, using real-time video and GPS data for dynamic routing and anomaly detection.

By deploying Nova across the edge-device continuum, Amazon has the opportunity to establish a pervasive AI layer that interacts with users not only in the cloud but throughout their physical environments.

Integration with Autonomous Agents and Workflow Automation

As enterprise interest grows in autonomous agents and AI copilots, Nova is well-positioned to become the core engine behind these orchestrated systems. The convergence of Gen AI with automation platforms will enable:

- Self-operating enterprise agents that manage documents, emails, and reports based on high-level goals.

- Customer support agents that engage in long-term, memory-based dialogues across channels.

- Intelligent operations assistants capable of controlling dashboards, invoking APIs, and generating executive insights from real-time data.
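An agent that invokes APIs can be sketched with the Converse API's tool use: tools are declared in `toolConfig`, the model answers with `stopReason == "tool_use"` and `toolUse` blocks, and the application runs the tool and returns a `toolResult` in the next user turn. `run_agent` is a hypothetical helper, and any tools passed to it are assumptions supplied by the caller; the loop is a minimal sketch, not Amazon's agent framework.

```python
from typing import Callable, Dict, Tuple


def run_agent(client, model_id: str, goal: str,
              tools: Dict[str, Tuple[dict, Callable]], max_steps: int = 5) -> str:
    """Minimal Converse tool-use loop; `tools` maps a name to (toolSpec dict, Python callable)."""
    tool_config = {"tools": [{"toolSpec": spec} for spec, _ in tools.values()]}
    messages = [{"role": "user", "content": [{"text": goal}]}]
    for _ in range(max_steps):
        response = client.converse(modelId=model_id, messages=messages, toolConfig=tool_config)
        message = response["output"]["message"]
        messages.append(message)  # the assistant turn must stay in the history
        if response["stopReason"] != "tool_use":
            return "".join(block.get("text", "") for block in message["content"])
        results = []
        for block in message["content"]:
            if "toolUse" not in block:
                continue
            call = block["toolUse"]
            try:
                _, fn = tools[call["name"]]
                content, status = [{"json": fn(**call["input"])}], "success"
            except Exception as exc:  # report failures to the model instead of crashing the loop
                content, status = [{"text": f"tool error: {exc}"}], "error"
            results.append({"toolResult": {"toolUseId": call["toolUseId"],
                                           "content": content, "status": status}})
        messages.append({"role": "user", "content": results})
    raise RuntimeError("agent did not reach a final answer within max_steps")
```

The `max_steps` cap is a simple, deterministic limit on how far the agent can act on its own, the kind of rule that can sit alongside audit trails and human oversight.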

Amazon’s expanding support for agentic frameworks through Amazon Bedrock and AWS Step Functions points to a roadmap in which Nova serves not merely as a response generator but as an active decision-maker and orchestrator embedded in enterprise operations.

This will be particularly impactful in regulated industries where audit trails, human oversight, and deterministic decision rules can be combined with Nova’s generative fluency to create safe, explainable, and adaptive intelligent systems.

Strategic Positioning in the Global AI Ecosystem

From a competitive standpoint, Amazon’s strategic play with Nova is twofold: to establish leadership in foundational AI and to solidify AWS’s dominance as the premier platform for Gen AI deployment. While OpenAI and Microsoft have taken early mindshare with GPT-based integrations, Amazon holds a long-term advantage in infrastructure reliability, enterprise compliance, and global reach.

Nova allows AWS to:

- Offer a fully integrated Gen AI stack that aligns with enterprise IT standards.

- Support multi-model strategy, where Nova coexists with third-party models in a single orchestration layer.

- Deliver a developer-first experience, with open SDKs, serverless options, and customizable safety layers.
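A multi-model strategy can be sketched as a routing layer over one request format. Because the Bedrock Converse API uses the same message shape across supported models, the router below only has to choose a `modelId`. The routing table is a hypothetical policy; the model IDs are Bedrock identifiers, but availability (and whether an inference profile ID is required) varies by Region.

```python
from typing import List, Tuple

NOVA_MICRO = "amazon.nova-micro-v1:0"
NOVA_LITE = "amazon.nova-lite-v1:0"
NOVA_PRO = "amazon.nova-pro-v1:0"
CLAUDE_SONNET = "anthropic.claude-3-5-sonnet-20240620-v1:0"

# Hypothetical routing policy: the least expensive adequate model for each task type
ROUTES = {
    "classification": NOVA_MICRO,
    "document_qa": NOVA_PRO,
    "long_form_drafting": CLAUDE_SONNET,
}


def pick_model(task: str, has_images: bool = False) -> str:
    model_id = ROUTES.get(task, NOVA_LITE)  # unknown tasks fall back to Nova Lite
    # Nova Micro accepts text only, so multimodal requests move up to Nova Lite
    if has_images and model_id == NOVA_MICRO:
        return NOVA_LITE
    return model_id


def ask(client, task: str, messages: List[dict], has_images: bool = False) -> Tuple[str, str]:
    """Send the same Converse request shape to whichever model the policy selects."""
    model_id = pick_model(task, has_images)
    response = client.converse(modelId=model_id, messages=messages)
    text = "".join(b.get("text", "") for b in response["output"]["message"]["content"])
    return model_id, text
```

Returning the chosen `model_id` alongside the answer keeps the routing decision visible to logging and cost reporting.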

Moreover, Nova gives Amazon leverage in cross-selling AI-native experiences across its B2B and B2C platforms—from retail search and logistics forecasting to personalized media and smart home devices.

As more governments and institutions place constraints on where and how AI models are trained, deployed, and monitored, Amazon’s global compliance footprint and VPC-ready infrastructure will likely make Nova a preferred option for sovereign AI strategies in Europe, Asia-Pacific, and the Middle East.

Nova as a Catalyst for Intelligent Transformation

Amazon Nova represents a critical inflection point in the evolution of multimodal AI, from experimental models to enterprise-critical platforms. Its scalability, adaptability, and integration capabilities position it not only as a foundation model but as an enabler of enterprise transformation, consumer experiences, and autonomous systems.

As Nova continues to evolve, enterprises must remain strategically agile. Those that treat Nova not merely as a tool but as an architectural pillar will be best equipped to capture the value of intelligent automation, real-time personalization, and cross-modal insight extraction.

In the years ahead, Nova’s success will be measured not just by its benchmarks, but by the ecosystems it powers, the problems it solves, and the opportunities it creates—across every industry, device, and dimension of human-machine interaction.
